Add AI Defense Instrumentation

15 minutesNote: this section of the workshop requires changes to multiple files. If you’re not sure where to make the changes, or your application is no longer working, please refer to the expected solution for this section which is in the

~/workshop/agentic-ai/app-with-ai-defensefolder.

Splunk Observability Cloud integrates with Cisco AI Defense to provide a consolidated view of security and privacy risks detected at runtime for your AI agents, allowing you to monitor performance and risks in one place.

This is referred to as Splunk AI Security Monitoring, which helps you to:

- Identify which agents, interactions, and services involve detected or blocked security and privacy risks, such as prompt injection and PII leakage

- Track risk trends alongside latency, errors, and other performance metrics over time

- Investigate risky interactions in trace context, down to specific prompts and responses

In this section, we’ll add the AI Defense integration to our Agentic AI application and review the resulting data in Splunk Observability Cloud.

How It Works

Splunk AI Security Monitoring provides an instrumentation library,

opentelemetry-instrumentation-aidefense,

to automate security and privacy risk tracing for Python-based AI agents.

This library captures and attaches security telemetry to calls that your

AI agents make to LLMs (such as OpenAI) and orchestration frameworks

(such as LangChain) to ensure that every prompt and response can be

audited against security guardrails and recorded within a unified

OpenTelemetry trace. It does this by adding the

gen_ai.security.event_id attribute to LLM or workflow spans.

SDK vs. Gateway Mode

The opentelemetry-instrumentation-aidefense library can operate in either SDK mode or gateway mode:

- With the SDK mode, the developer adds explicit security checks using

inspect_prompt(). This option is best for developers that want full control how security checks are implemented and how issues are addressed. - With Gateway mode, LLM calls proxied through Cisco AI Defense Gateway so application code changes are not required. This mode is supported for popular commercial LLMs such as OpenAI, Anthropic, etc.

This workshop utilizes Gateway mode with Azure OpenAI.

Setup the Cisco AI Defense Integration

The first step is to Set up an integration with Cisco AI Defense.

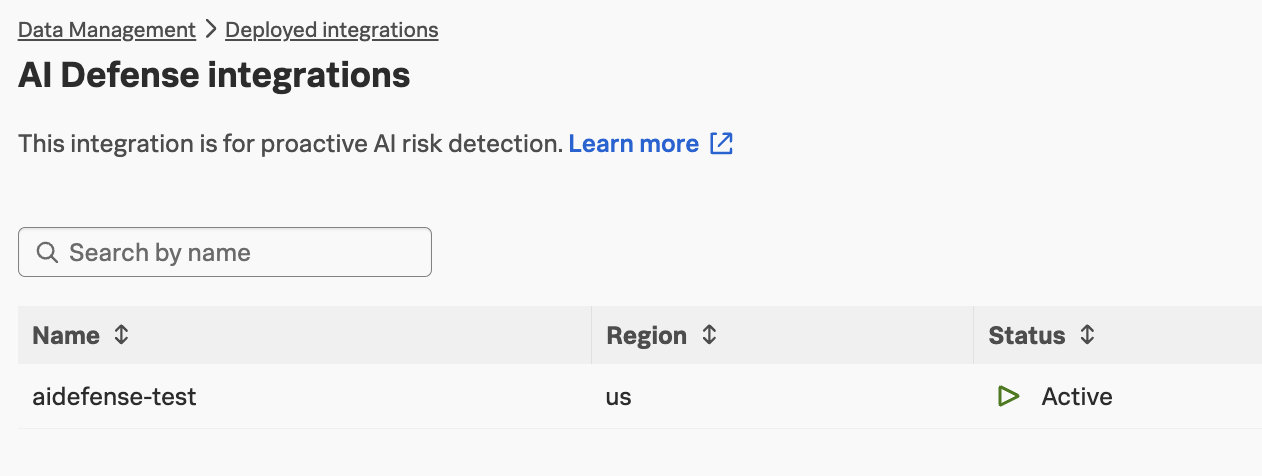

If you navigate to Data Management -> Deployed integrations and search for AI Defense,

you’ll see that this integration has already been configured:

Note: the

aiDefenseIntegrationfeature flag must be enabled to see this integration

Add Instrumentation Packages

Next, we need to install several instrumentation packages. We can achieve this by

opening the ~/workshop/agentic-ai/base-app/requirements.txt for editing and adding

the following packages:

Hint: run the following command to compare your changes with the expected solution:

diff ~/workshop/agentic-ai/base-app/requirements.txt ~/workshop/agentic-ai/app-with-ai-defense/requirements.txt

Build an Updated Docker Image

Build an updated Docker image with a new tag:

Tip: if the image is taking too long to build, consider using the pre-built image instead. To do so, update the image name in the

~/workshop/agentic-ai/base-app/k8s.yamlfile toghcr.io/splunk/agentic-ai-app:app-with-ai-defenseinstead oflocalhost:9999/agentic-ai-app:app-with-ai-defense.

Create a Secret for the AI Defense Gateway

The document provided by the workshop instructor contains a kubectl create secret

command to create a secret to store the AI Defense Gateway URL.

Copy and paste this kubectl create secret command from the document

and run it in your ssh terminal.

Update the Kubernetes Manifest

Open the ~/workshop/agentic-ai/base-app/k8s.yaml file for editing and

replace the definition of the AZURE_OPENAI_ENDPOINTenvironment variable

as follows, which ensures that any requests destined for Azure OpenAI are

instead sent through the AI Defense gateway:

In the same file, update the image to ensure we’re using the one with the instrumentation:

Hint: run the following command to compare your changes with the expected solution:

diff ~/workshop/agentic-ai/base-app/k8s.yaml ~/workshop/agentic-ai/app-with-ai-defense/k8s.yaml

Deploy the Updated Application

We can deploy the updated application using the manifest file as follows:

Test the Application in Kubernetes

Ensure the new application pod has started successfully and the old pod is no longer present:

Then, run the following command to test the application:

For now, just ensure that the application is still working. In the next section, we’ll add a security risk and then show how it can be detected.