
About Splunk for SAP LogServ¶

v0.0.5 release: AI Assistant LLM functionality intentionally disabled pending review

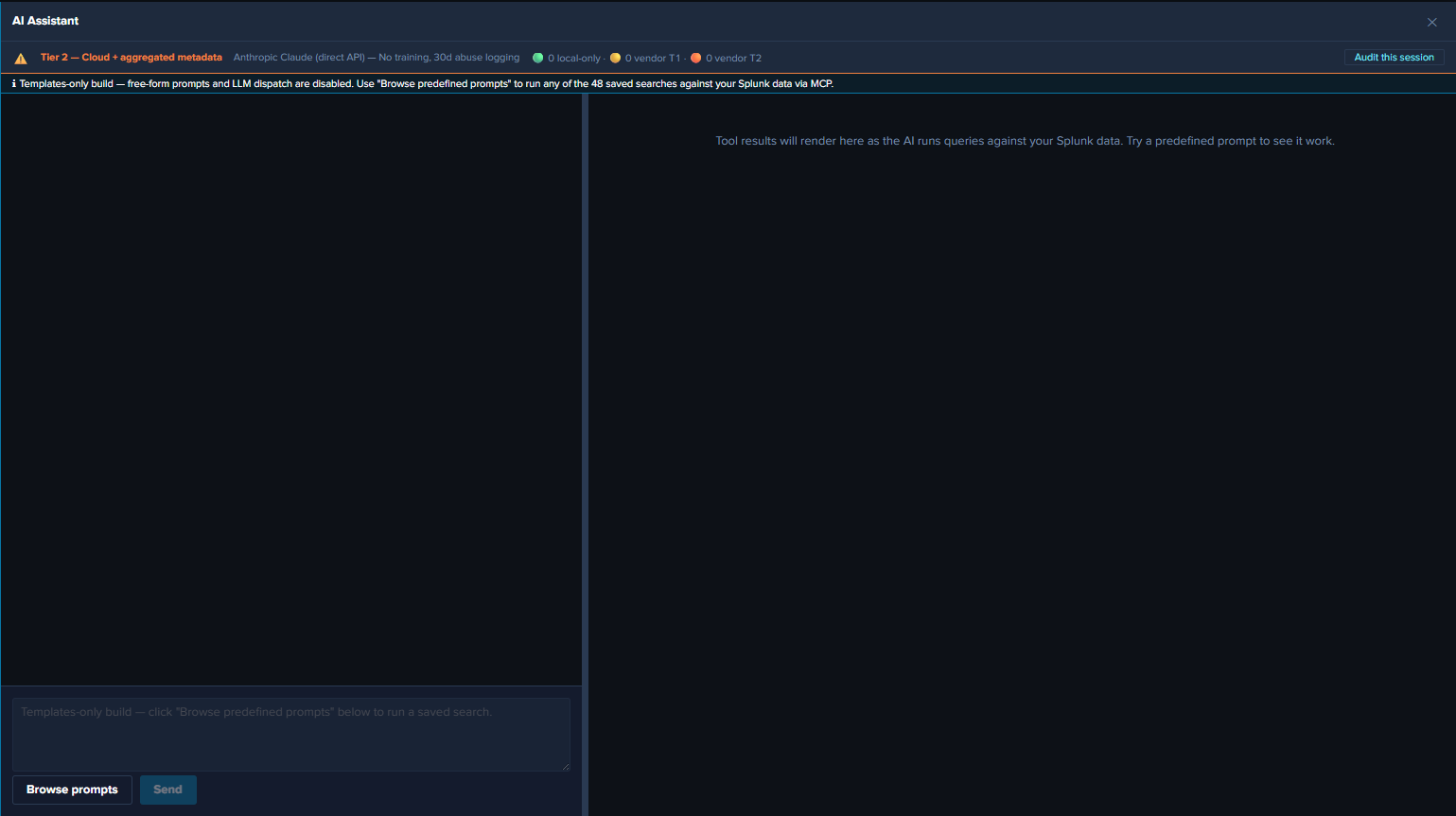

The v0.0.5 release ships with the AI Assistant’s LLM-driven path disabled at compile time pending internal review of the OWASP LLM Top 10 controls. The predefined-prompt path + Splunk MCP Server integration + 20 dashboards + Environment Topology view + audit log are all fully active. The free-form chat input is disabled, the model picker is hidden, and the Provider Credentials Settings tab is hidden. See Templates-only Build for the build mechanism. The LLM-driven path will be re-enabled in a future release once review concludes.

Introduction¶

SAP offers its customers ECS (formerly known as RISE) with SAP S/4HANA Cloud Private Edition. At a basic level, and from SAP’s perspective as the vendor, this is an IaaS model: SAP hosts customers’ SAP S/4HANA and other SAP systems with the customer’s choice of public cloud provider (AWS, Microsoft Azure, GCP, etc.), in accounts owned and managed by SAP itself. SAP LogServ collects logs from all SAP systems and layers (OS, database, etc.) and can deliver them to the customer’s security information and event management (SIEM) solution.

Splunk for SAP LogServ provides multiple mechanisms to access the logs from LogServ, ingest them into Splunk, and map the various log types to Splunk sourcetypes — plus a React-based UI App with 20 dashboards, a graph-based Environment Topology view, and an LLM-aware AI Assistant that lets analysts run pre-canned investigations without leaving Splunk.

Two Packages¶

The solution is delivered as two separately installable packages:

| Package | App ID | Install On | Role |
|---|---|---|---|
| Data TA | `splunk_ta_sap_logserv` | Deployment Server, Heavy Forwarders, Indexer (or single instance) | Data collection, sourcetype routing, index-time filtering, DS automation, ships the indexes.conf for sap_logserv_logs + _ai_assistant_audit |
| LogServ App | `splunk_app_sap_logserv` | Search Head only (or single instance) | Dashboards, AI Assistant, Environment Topology view, search-time extractions |

The Data TA ingests log data, routes it to the right sourcetype, and ships the indexes.conf for both the SAP data index (sap_logserv_logs) and the AI Assistant audit index (_ai_assistant_audit) — Splunk auto-creates them when the Data TA loads on an indexer, no separate Index App required. The LogServ App provides the analytics layer the user interacts with. Both index names are configurable via search macros (sap_logserv_idx_macro, sap_logserv_audit_idx_macro).

For details on which package goes where, see Architecture.

Key Features¶

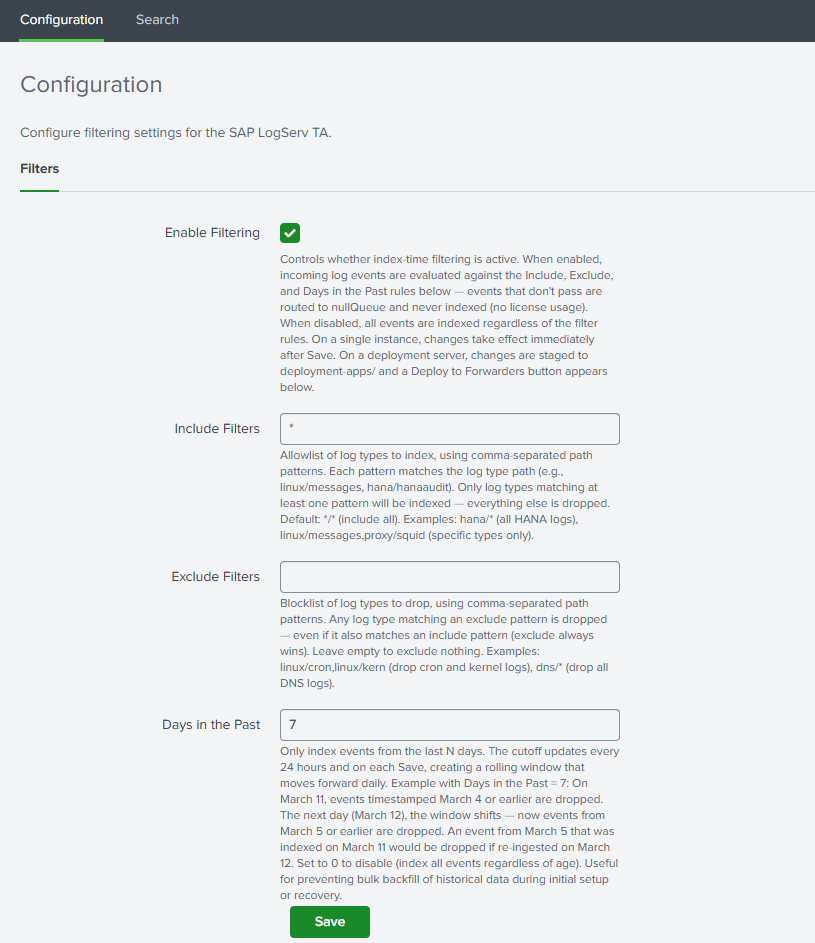

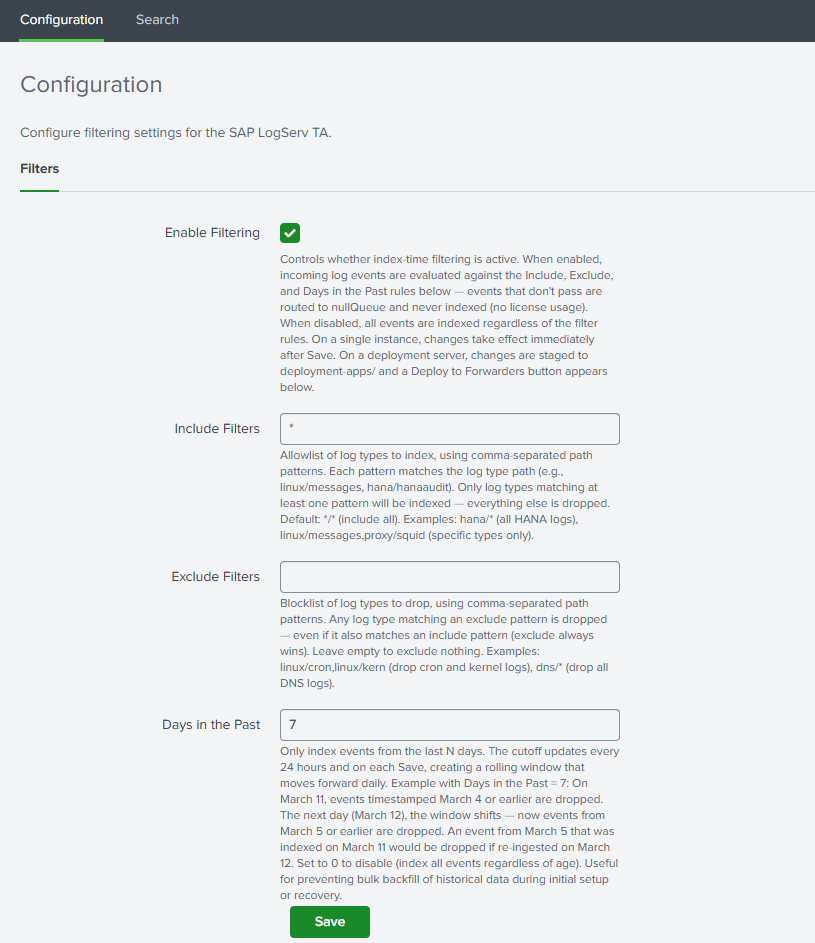

- Index-time filtering — control which log types are indexed and drop stale data, all configured through Splunk Web with zero license cost for filtered events.

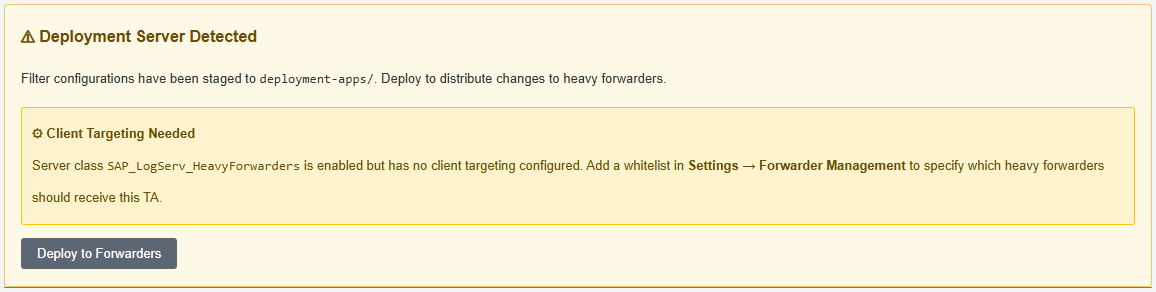

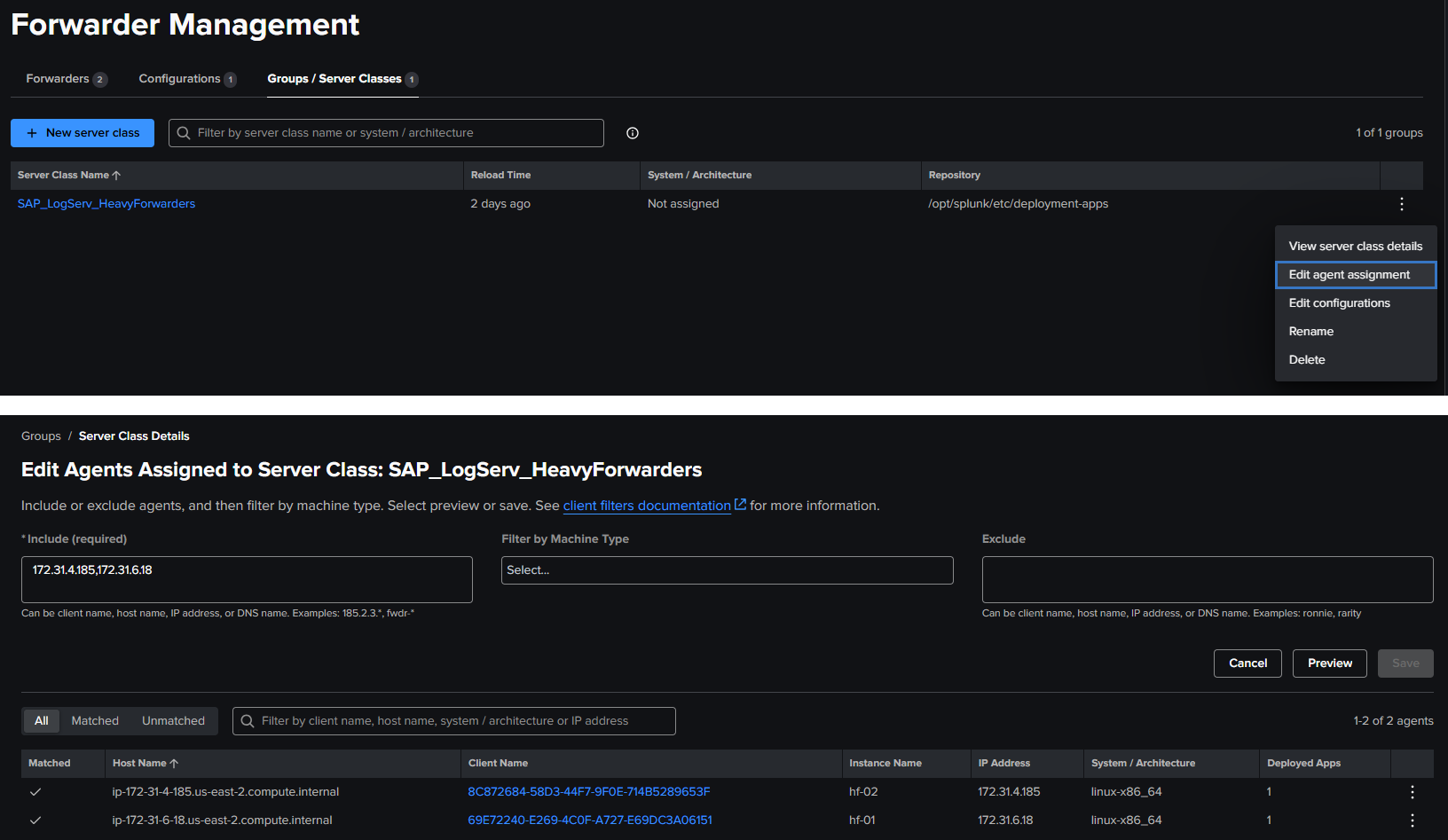

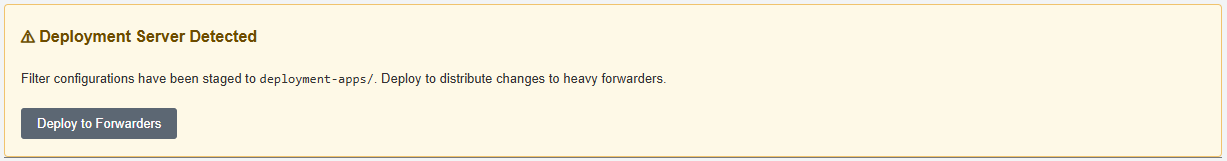

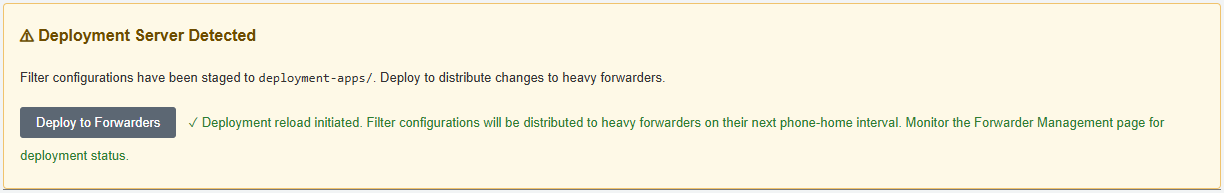

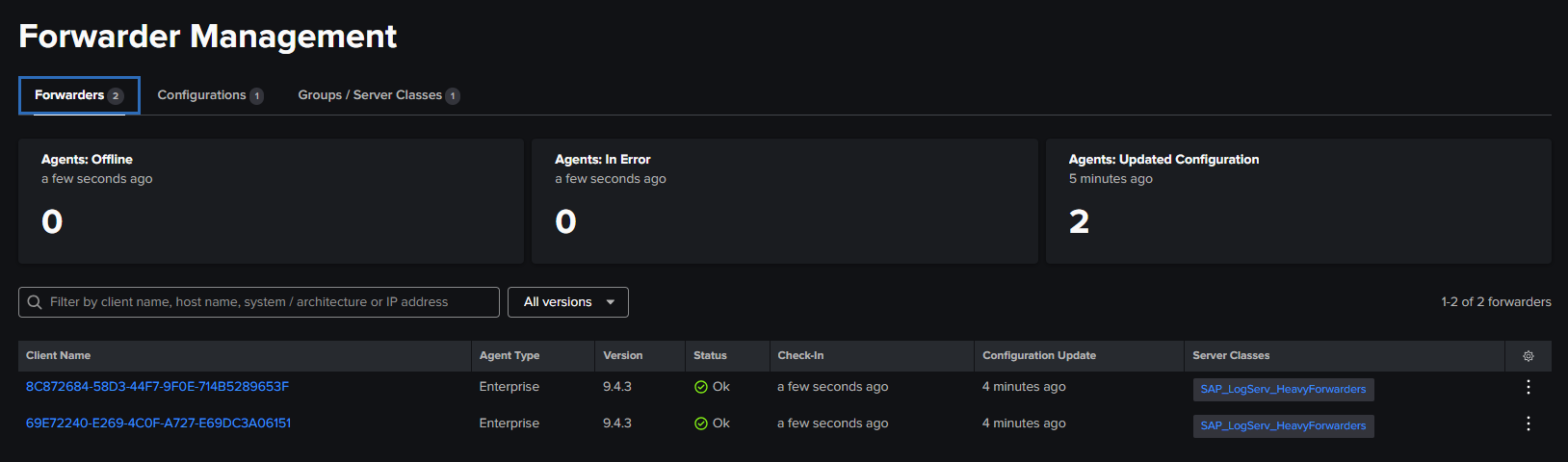

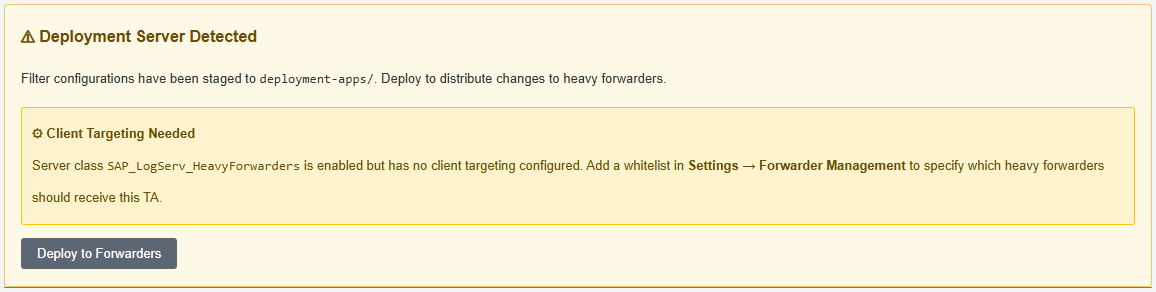

- Deployment Server automation — automatically stages filter configurations for distribution to Heavy Forwarders with a one-click deploy button.

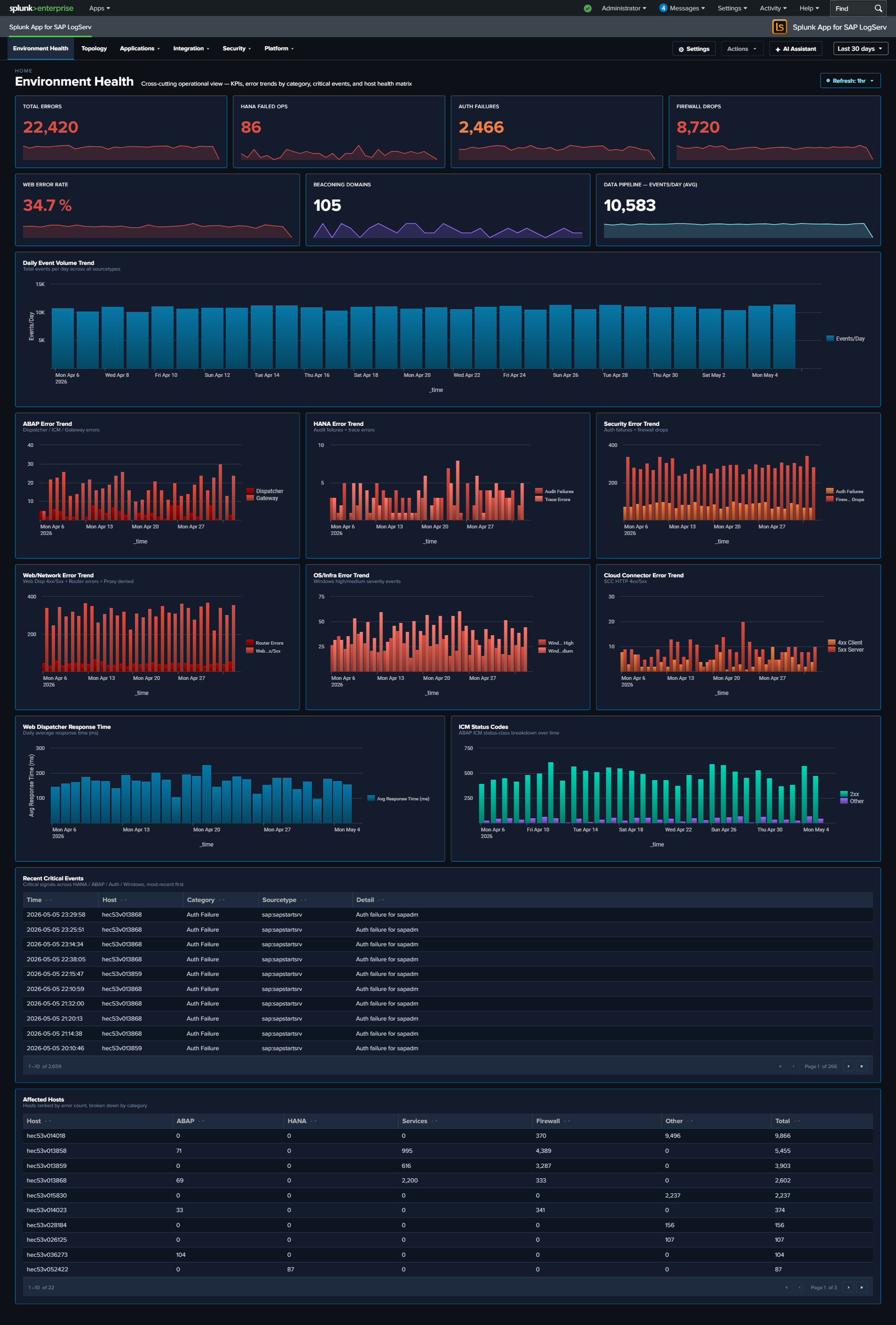

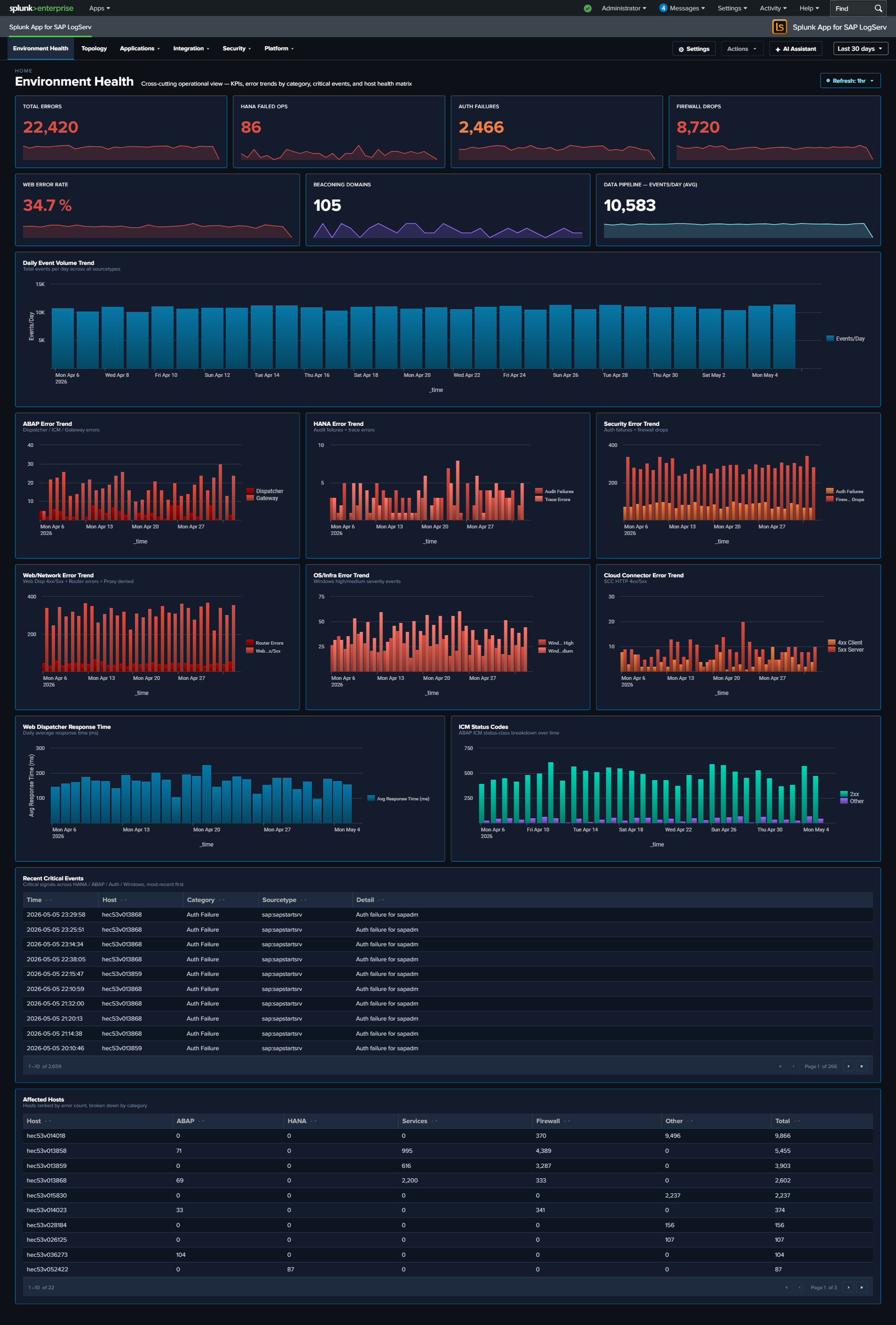

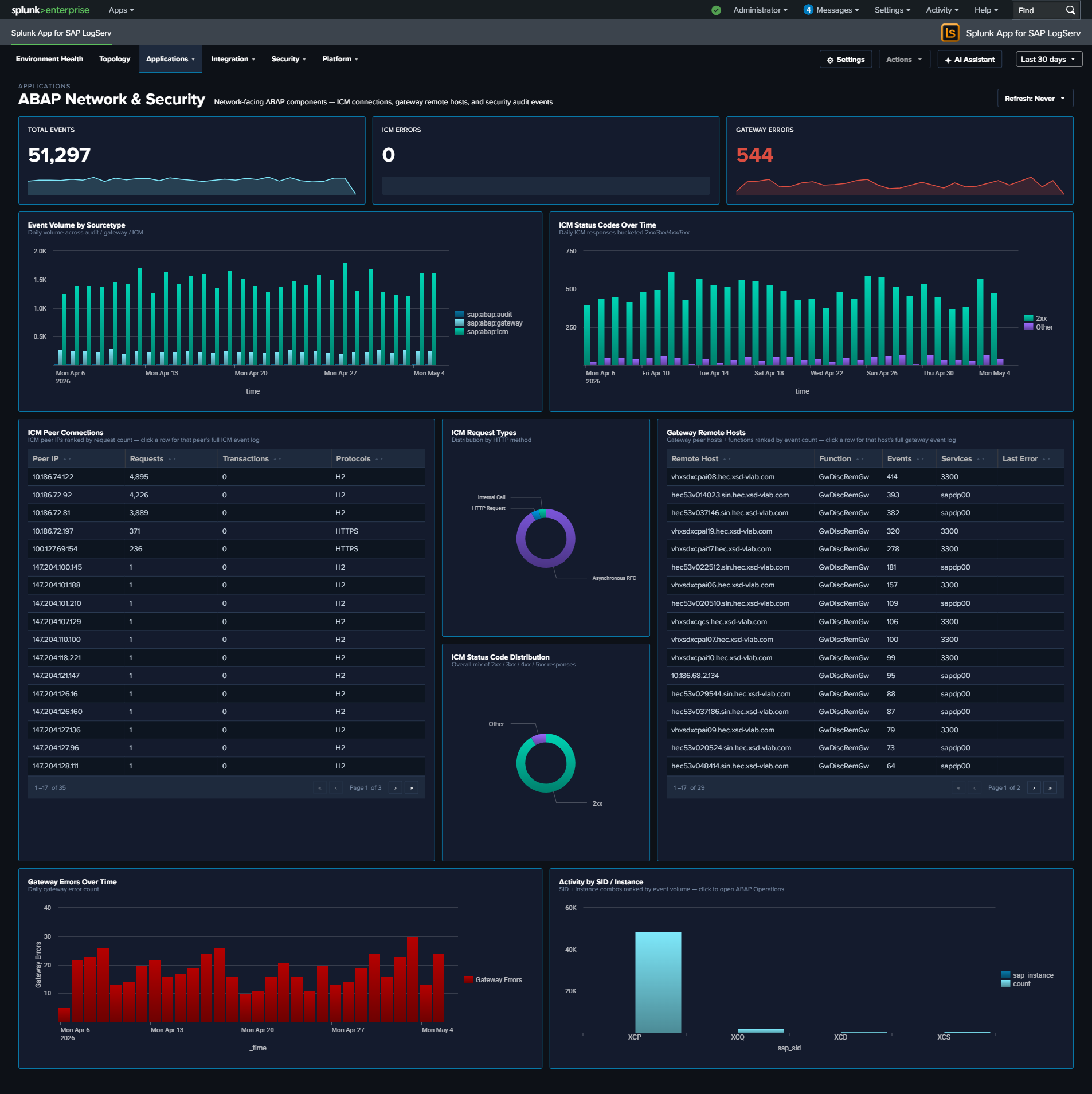

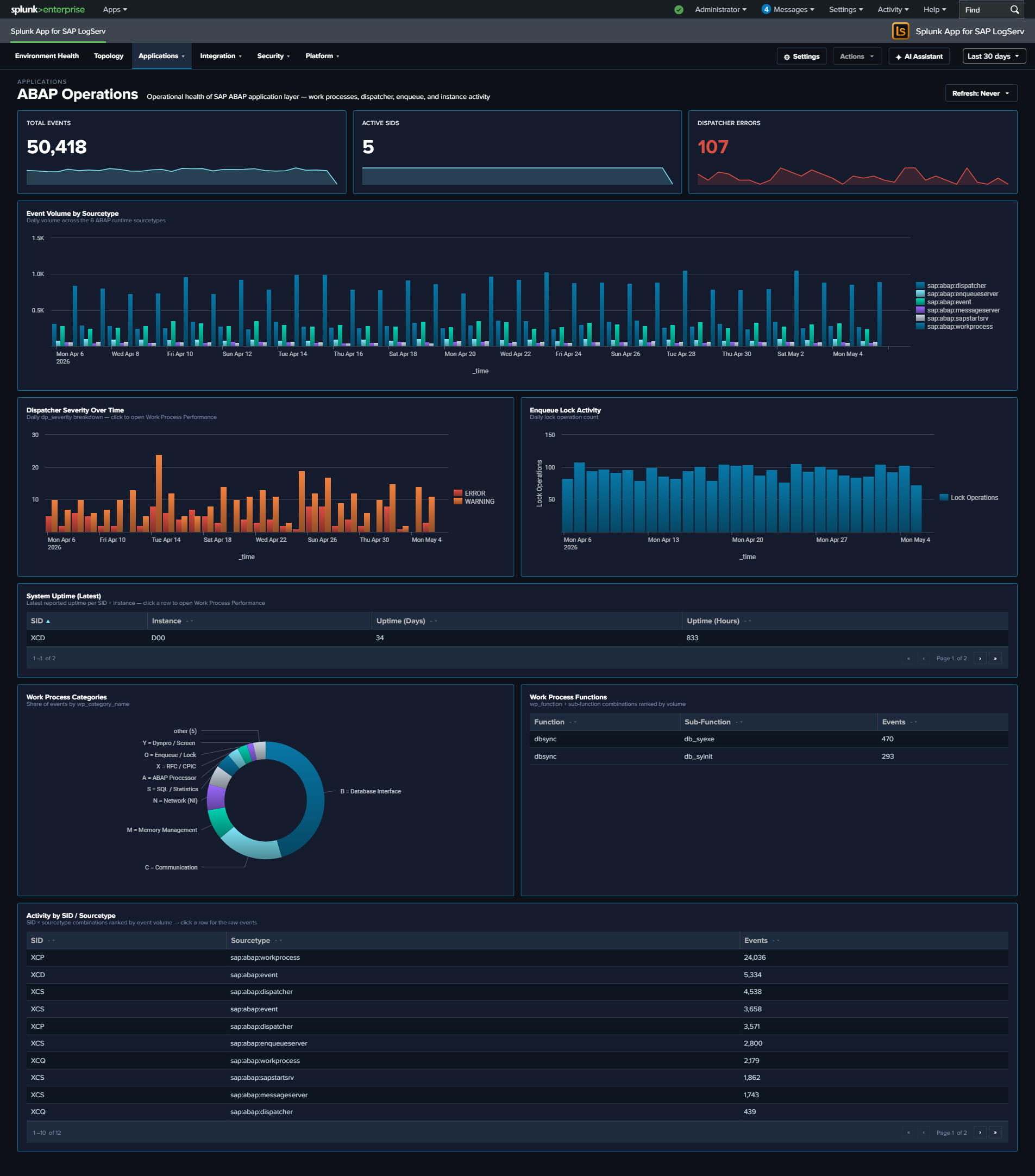

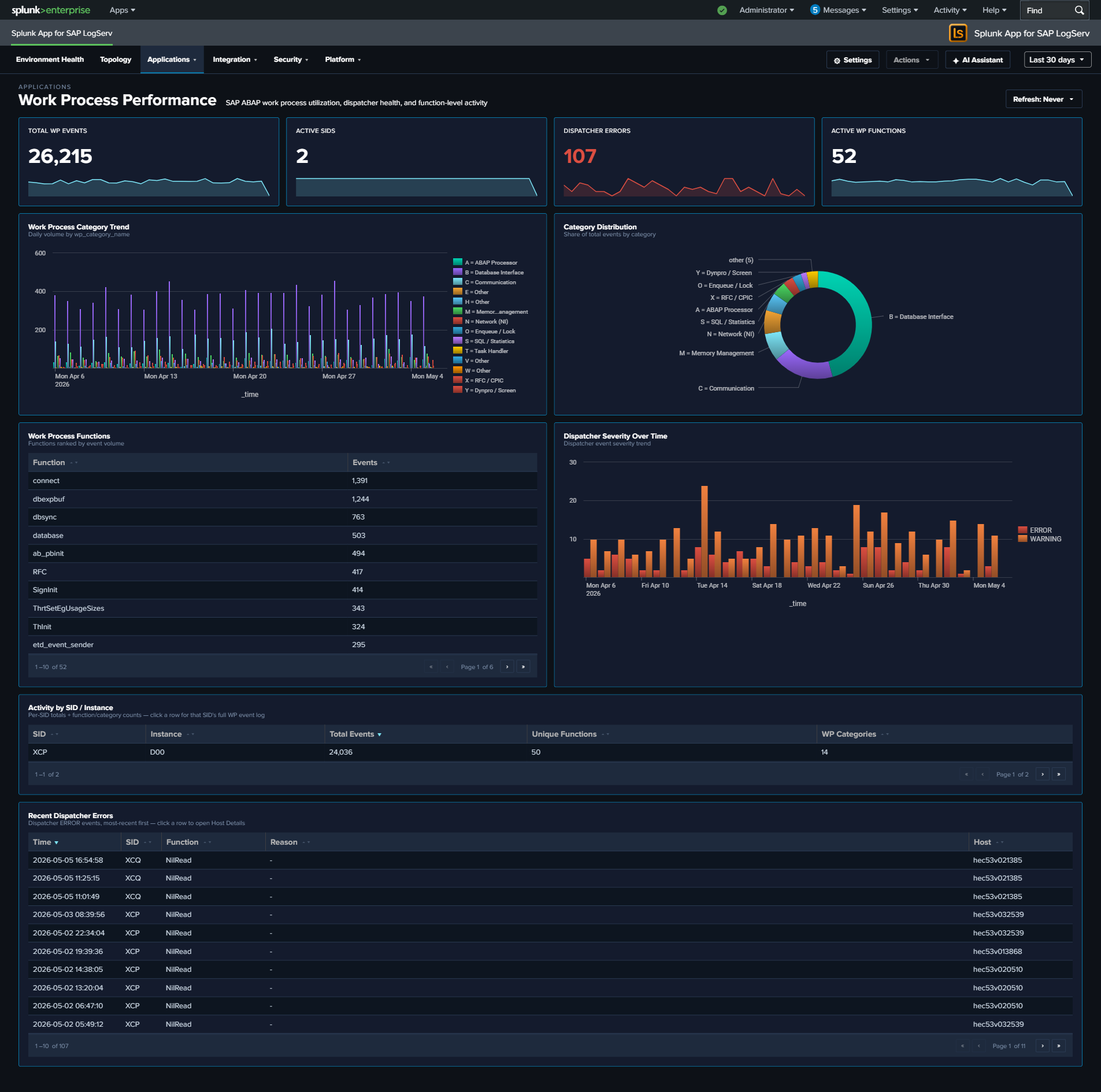

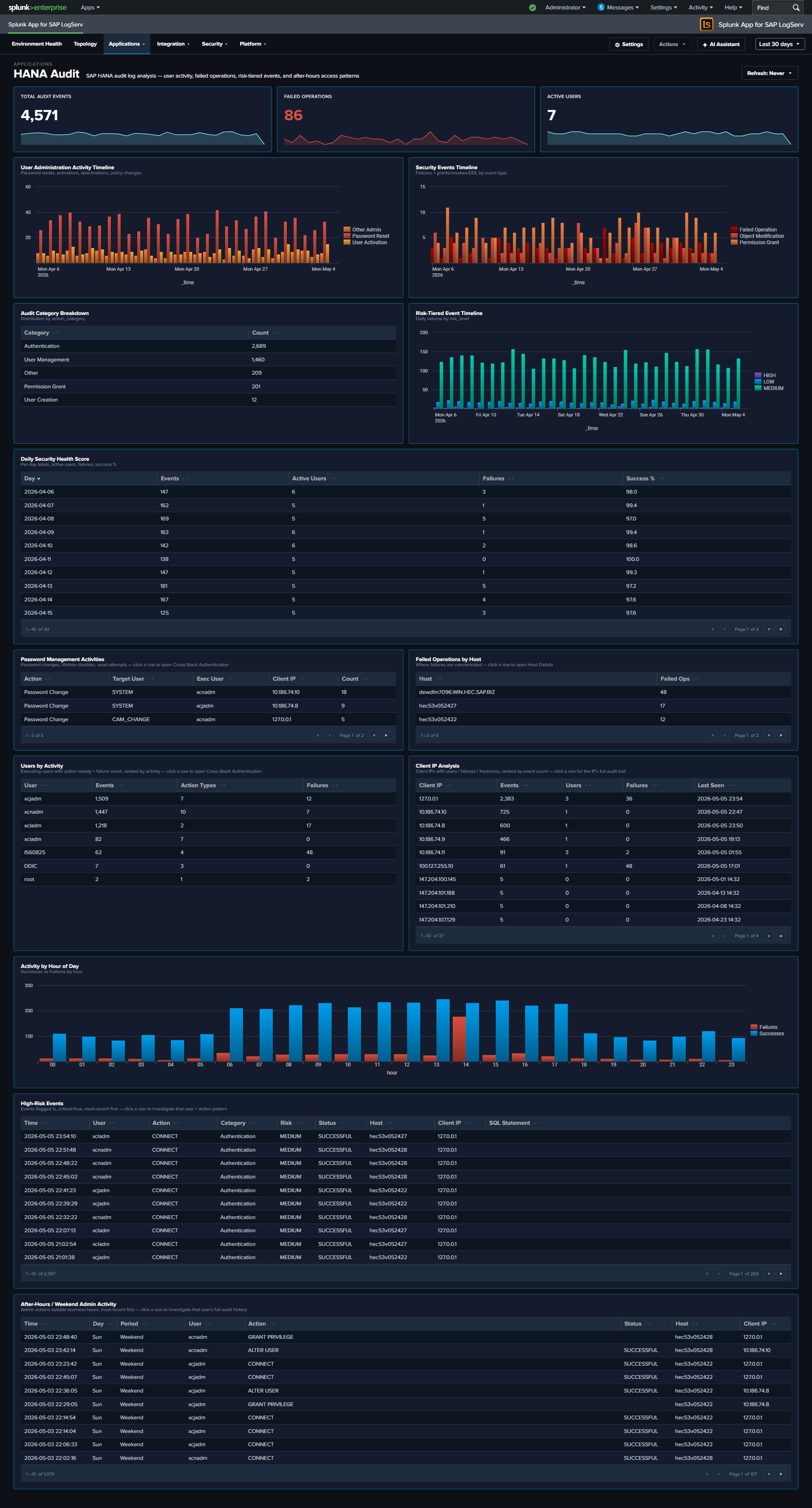

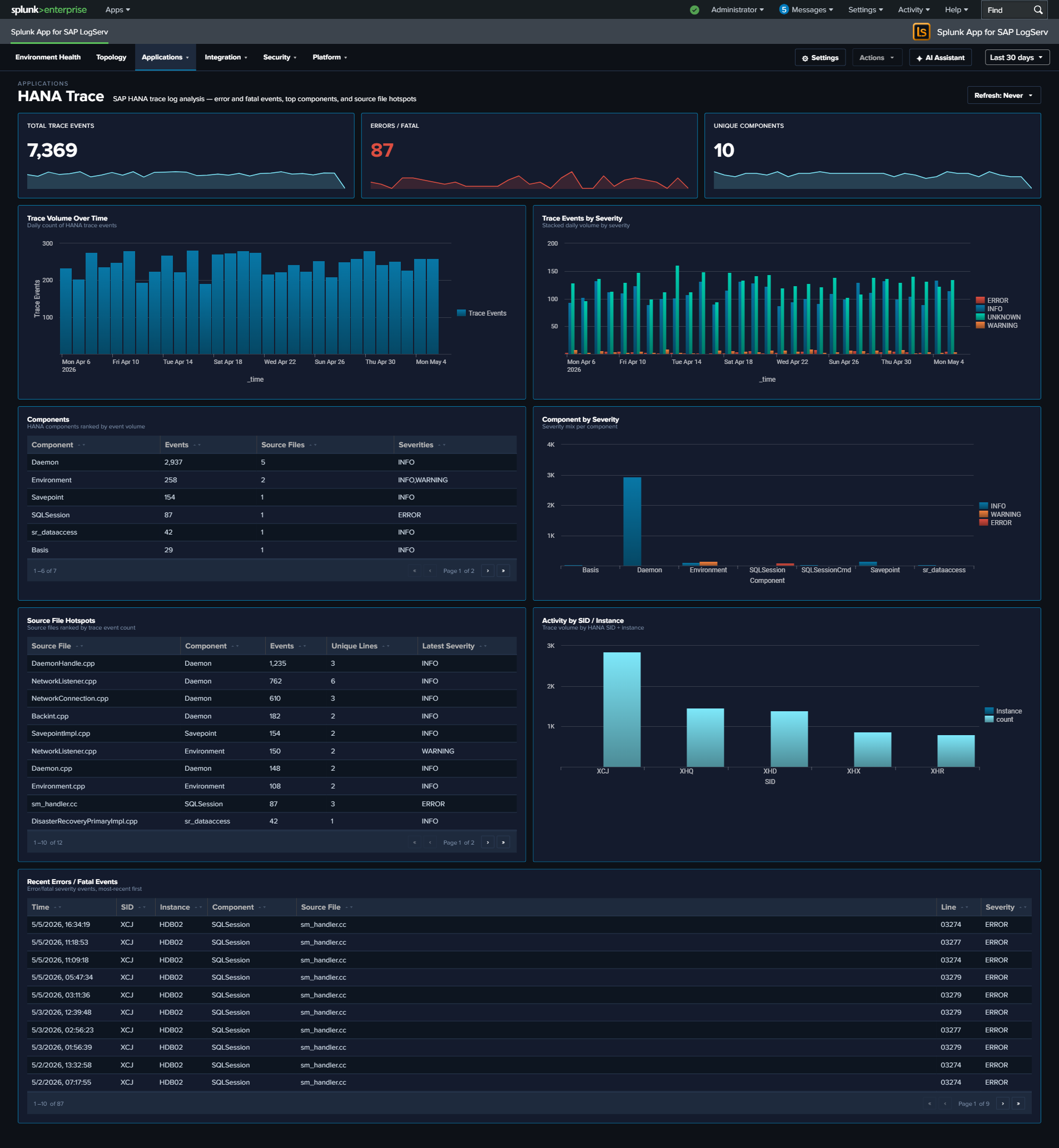

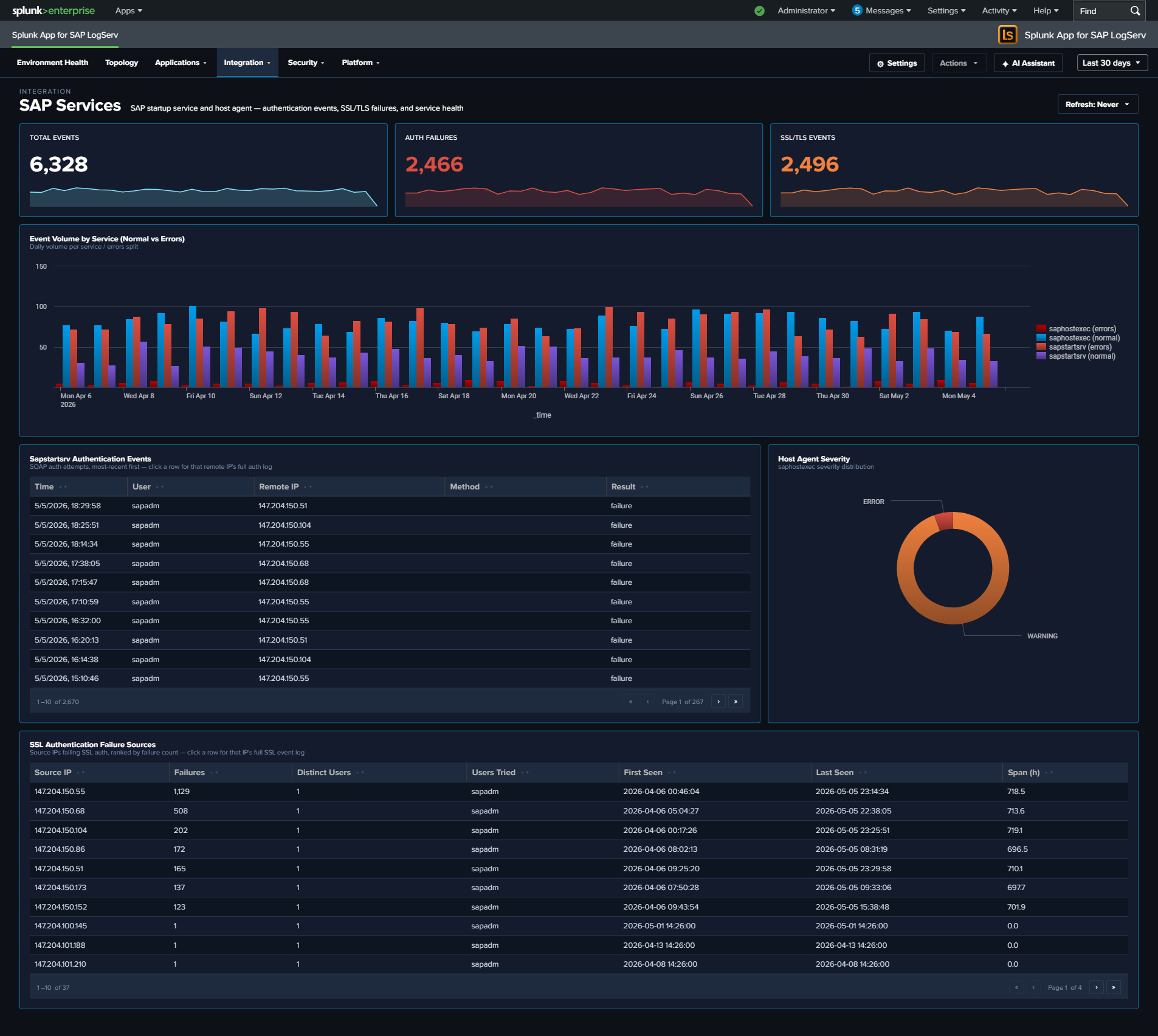

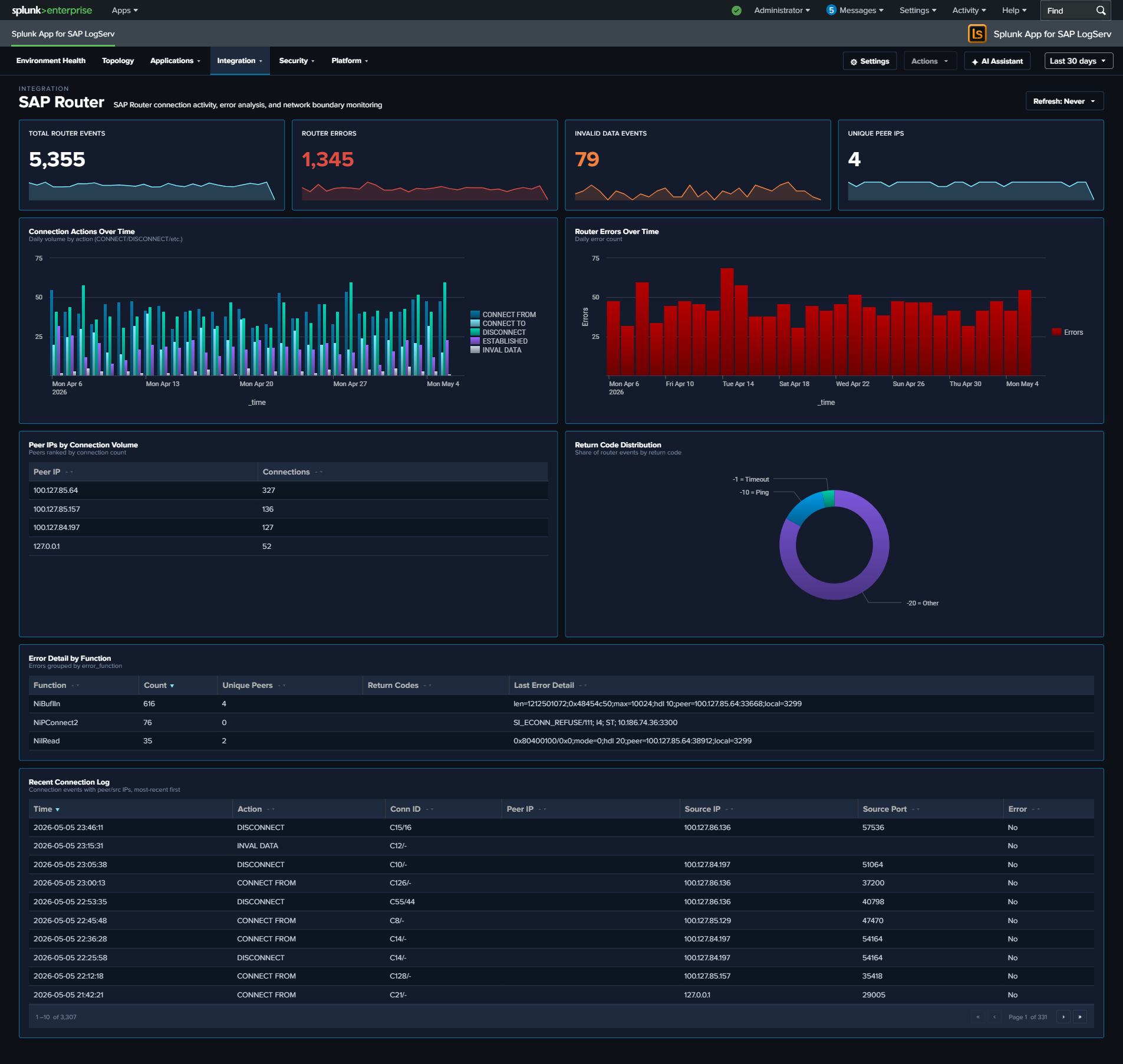

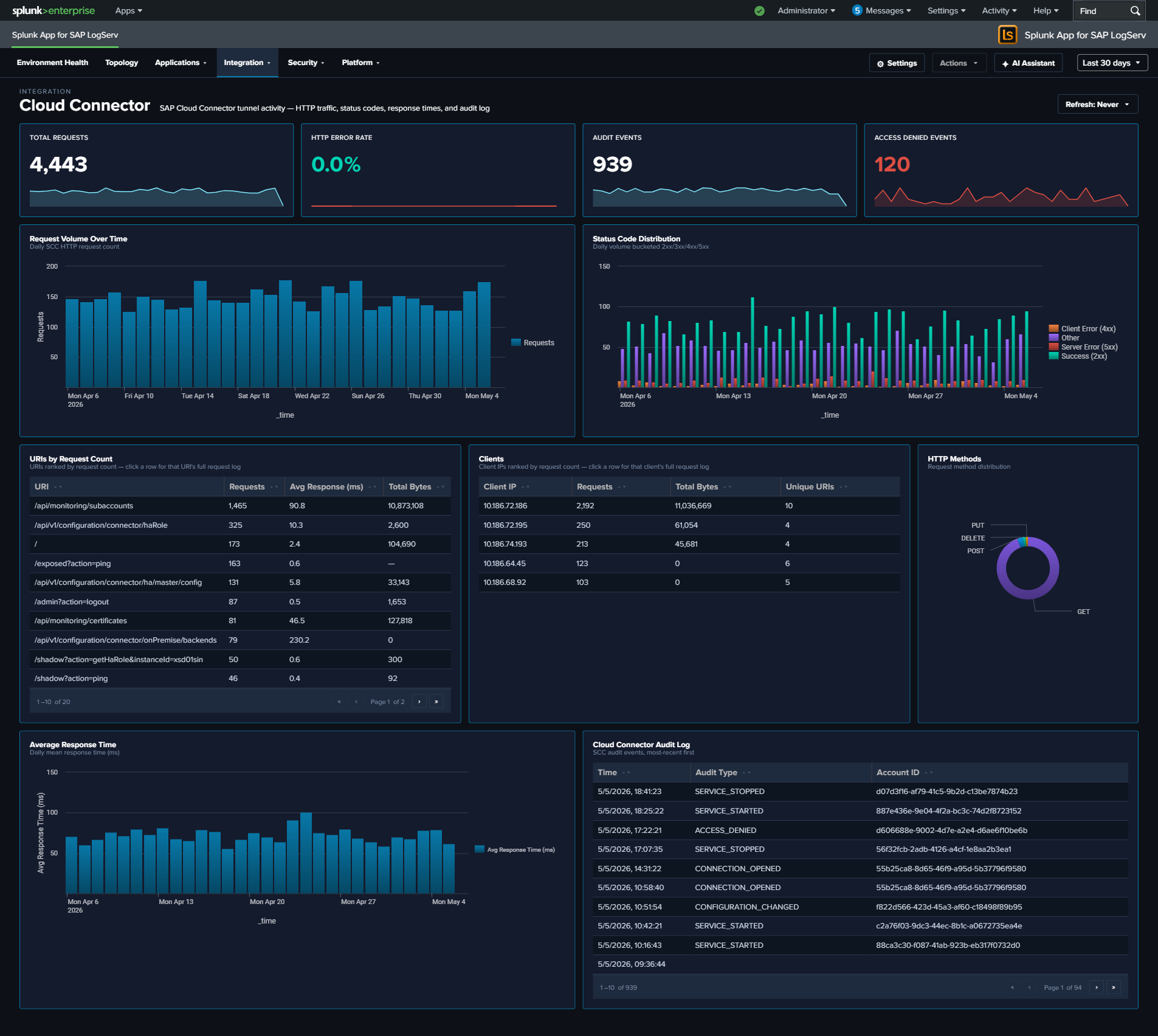

- 20 React-based dashboards — organized as one top-level Environment Health landing page plus four purpose-driven navigation groups: Applications (5 dashboards: ABAP runtime, work-process performance, HANA audit + trace), Integration (5 dashboards: SAP Services, Router, Cloud Connector, Web Dispatcher, Web/API Performance), Security (3 dashboards: Network Perimeter, Cross-Stack Authentication, Change & Configuration), and Platform (6 dashboards including the multi-tab Host Details view + multi-tab Data Pipeline Overview). Every dashboard ships with cross-dashboard drill-downs (time range preserved), per-dashboard auto-refresh picker, and built-in Download PNG export.

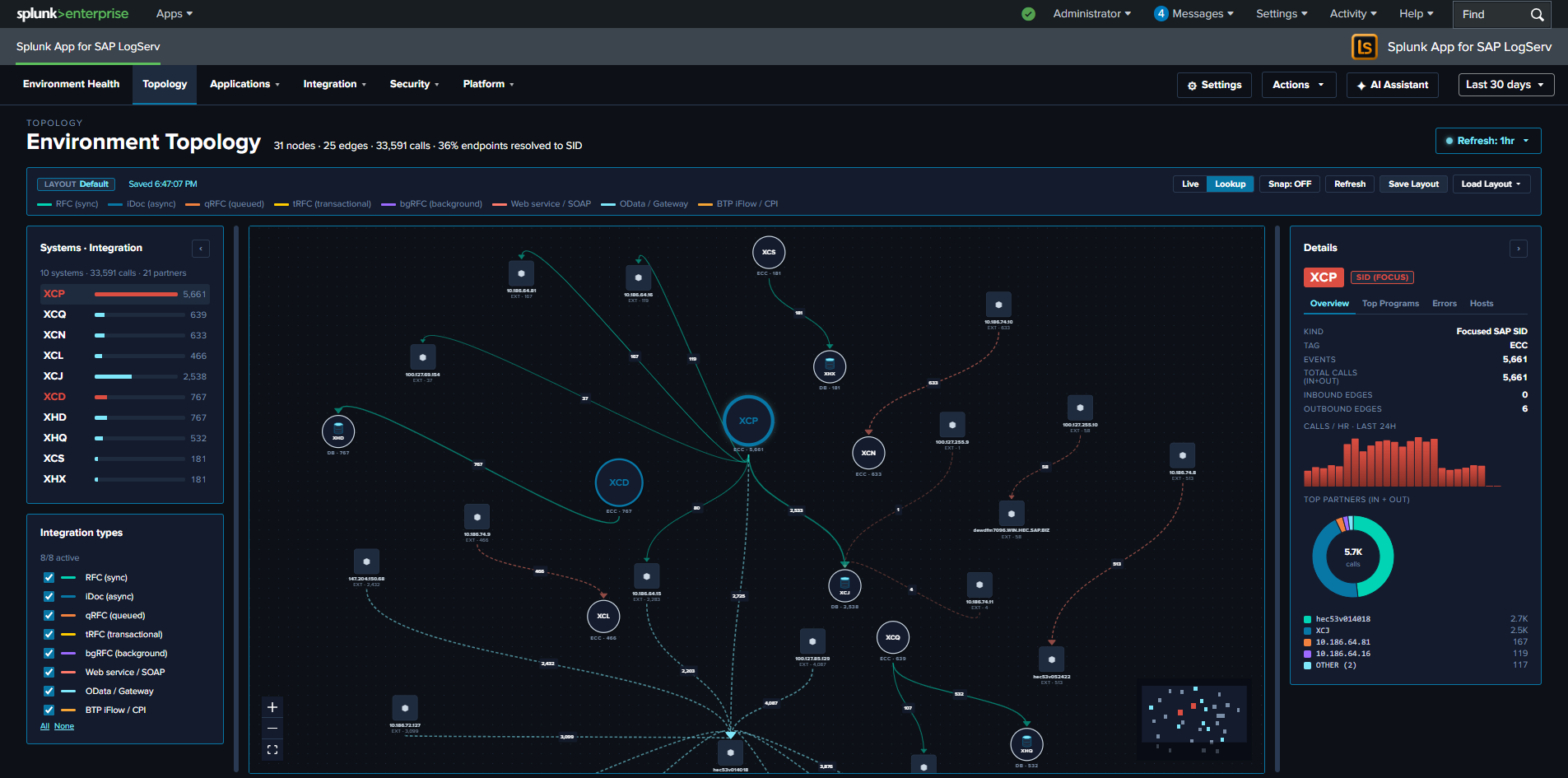

- Environment Topology — interactive graph view of SAP systems, integration partners, and endpoints; built on

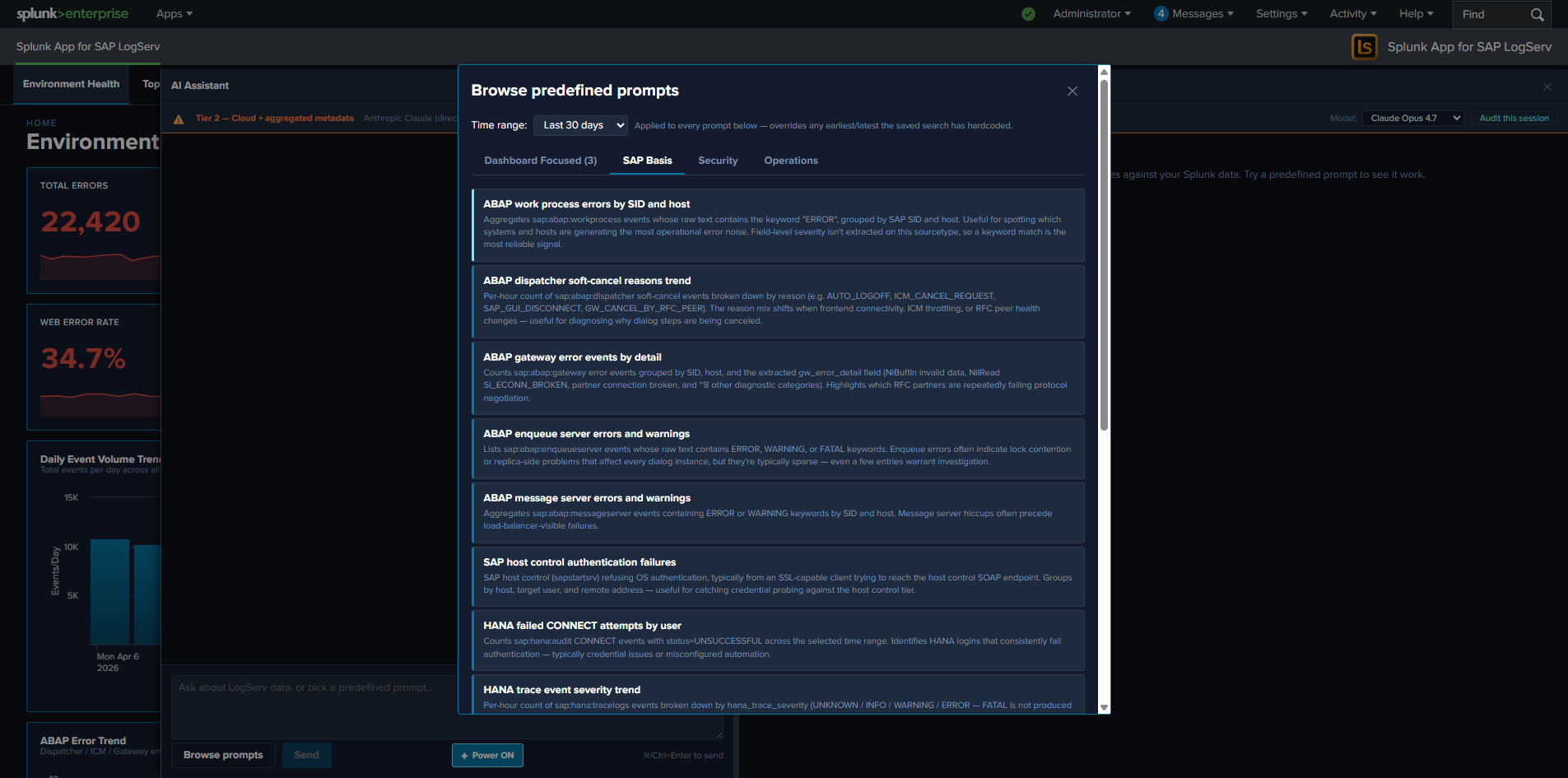

@xyflow/reactwith self-derived IP→SID inventory, saved layouts (KV Store), and Live mode auto-refresh. - AI Assistant — Splunk-aware chat panel with two paths: a predefined-prompt browser (48 saved searches across sap_basis / security / operations packs, dispatched via the Splunk MCP Server with no LLM call), and a free-form prompt path that adds vendor-LLM synthesis on top. Three privacy tiers (Tier 0 air-gapped, Tier 1 default, Tier 2 admin opt-in). The privacy invariant is type-system-enforced: no event data from your Splunk instance is ever transmitted to any AI vendor.

- OWASP LLM Top 10 (2025) compliance — every item in the top-10 has a matching control: prompt-injection sanitization, type-bounded data redaction, supply-chain SBOM, audit hash-chain, per-user rate limit, USD spend cap, SPL static-analysis guard, jailbreak pattern detection, PII redaction, and a tamper-evident audit log forwarder over HEC.

- Templates-only build variant — a deployable variant of the LogServ App that disables the LLM-driven flow at compile time. The MCP path + canned prompts stay fully active so the solution can be demonstrated end-to-end without enabling any LLM provider.

- Search-time field extractions — ~176 search-time directives (EXTRACT, EVAL, FIELDALIAS) across 31 SAP-specific sourcetypes.

| Property | Value |
|---|---|
| Version | 0.0.5.0-beta |
| Supported vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |
| Splunk platform versions | 9.4.3 and later |
| CIM | 5.1.1 and later |

Architecture¶

Two-Package Model¶

The SAP LogServ solution for Splunk is delivered as two separately installable packages:

| Package | App ID | Purpose |
|---|---|---|
| Data TA | `splunk_ta_sap_logserv` | Data collection, index-time filtering, deployment server automation, configuration UI, ships the indexes.conf for sap_logserv_logs (SAP data) and _ai_assistant_audit (AI Assistant audit log) |
| LogServ App | `splunk_app_sap_logserv` | Dashboards, AI Assistant, Environment Topology view, search-time field extractions, macros |

The Data TA handles everything that happens at index time: ingesting data from S3, routing events to the correct sourcetype, applying index-time filters, and defining the two indexes the solution writes to. It includes Python scripts, REST handlers, a configuration UI built with Splunk’s UCC framework, and default/indexes.conf so Splunk auto-creates sap_logserv_logs and _ai_assistant_audit on first install. Both index names are macro-configurable via sap_logserv_idx_macro (SAP data) and sap_logserv_audit_idx_macro (audit log); customers who rename either index update the matching macro definition. The _ai_assistant_audit index is required for the AI Assistant’s audit log to function — without it, audit events have no destination index.

The LogServ App handles everything that happens at search time: field extractions, field aliases, computed fields, and the dashboards you use to visualize and analyze the data. It contains no Python code and no data collection components.

Why two packages?

The split follows Splunk best practices for distributed deployments. The Data TA runs at the ingest tier (Heavy Forwarders for distributed deployments, plus the Indexer where the bundled indexes.conf provisions storage) and the LogServ App runs on the Search Head where users interact with dashboards. Keeping these tiers separate means search-only logic (field extractions, dashboards, the AI Assistant chat panel) doesn’t bloat the forwarders, and ingest logic (sourcetype routing, REST handlers, UCC config UI) doesn’t leak into the Search Head.

Install Matrix¶

Where you install each package depends on your Splunk topology:

| Topology | Data TA | LogServ App |
|---|---|---|
| Single instance | Same instance | Same instance |
| DS + HFs + on-prem SH | Deployment Server + each HF + Indexer | Search Head only |
| DS + HFs + Splunk Cloud | Deployment Server + each HF (Splunk Cloud admin handles the indexer tier; Data TA installed there provides the index defs) | Splunk Cloud SH only |

Important

- The Data TA is never installed directly on Heavy Forwarders when using a Deployment Server – the DS distributes it automatically.

- The LogServ App is never installed on Heavy Forwarders or the Deployment Server.

- On Splunk Cloud, the customer’s Splunk Cloud admin handles the indexer tier separately. The Data TA installed on that indexer provides the bundled index definitions.

- For single-instance deployments, both packages are installed on the same instance and Splunk merges their configurations at runtime.

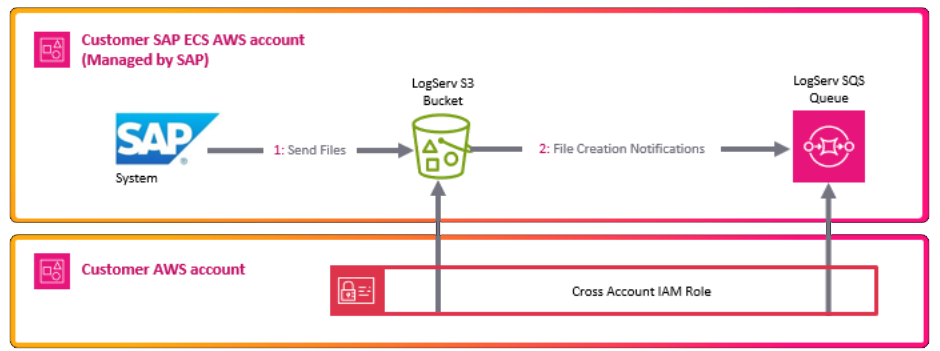

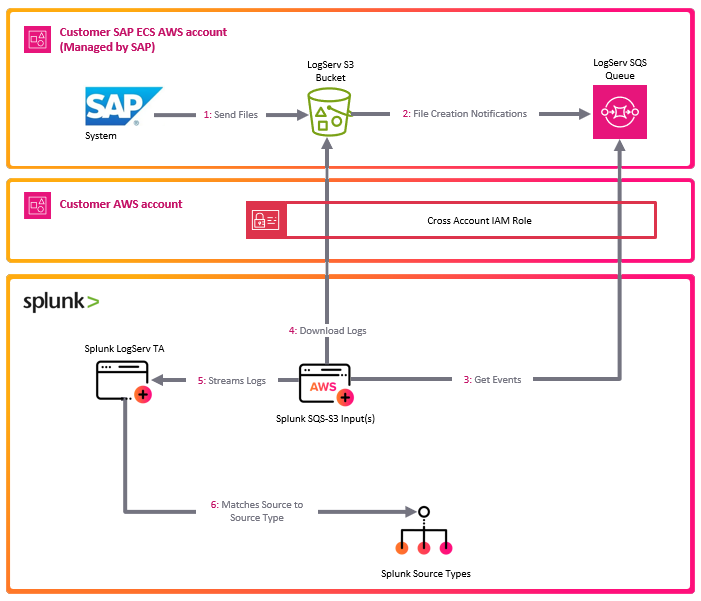

Data Flow¶

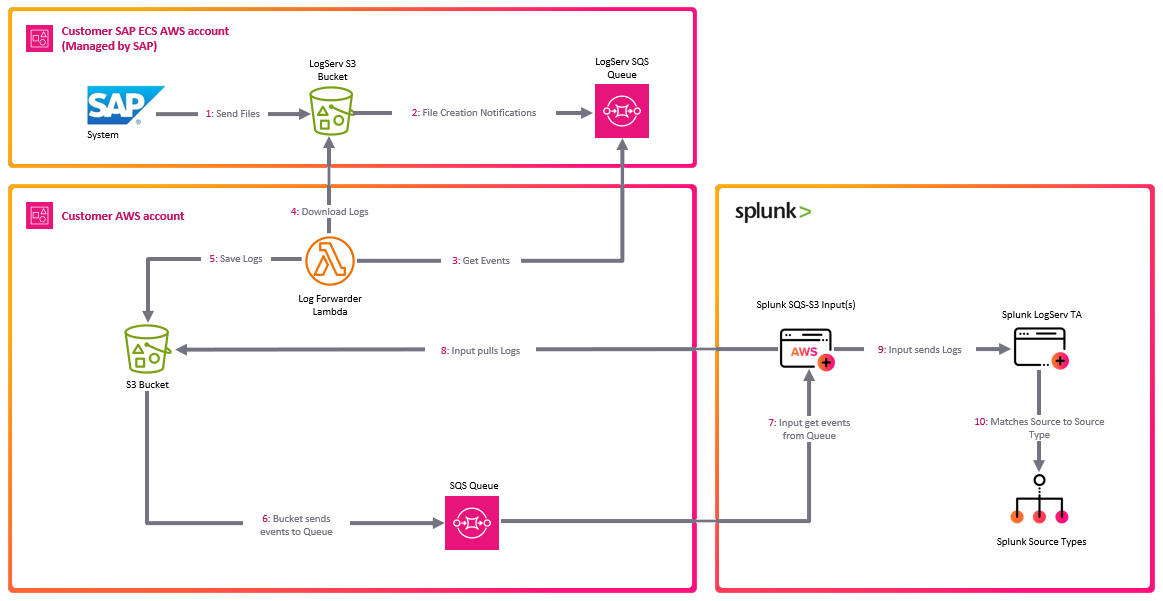

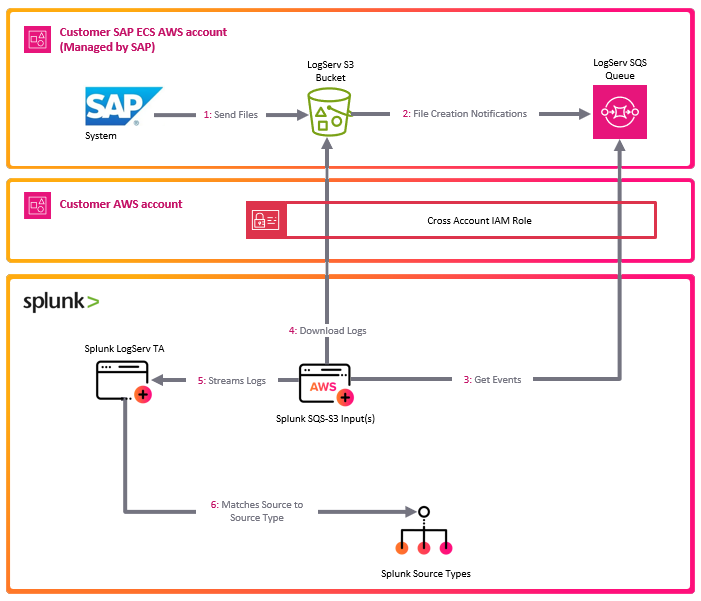

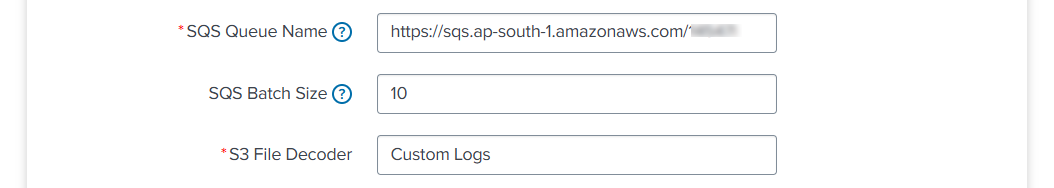

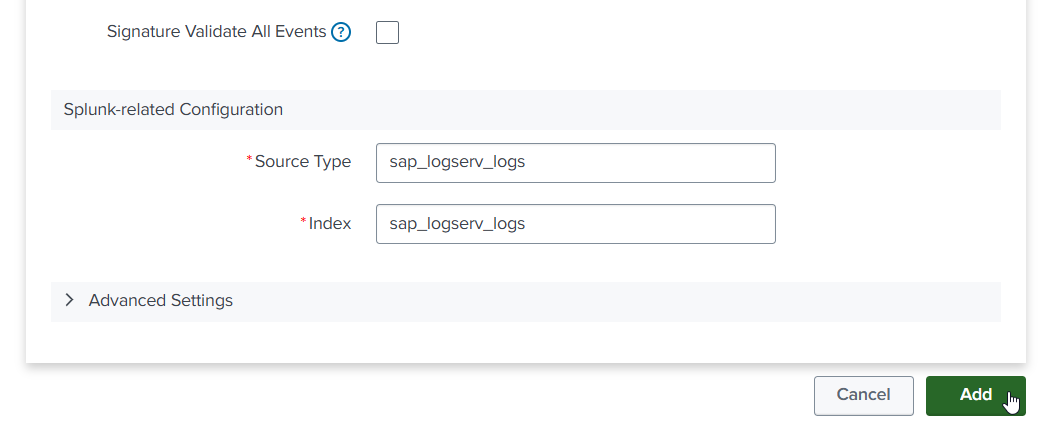

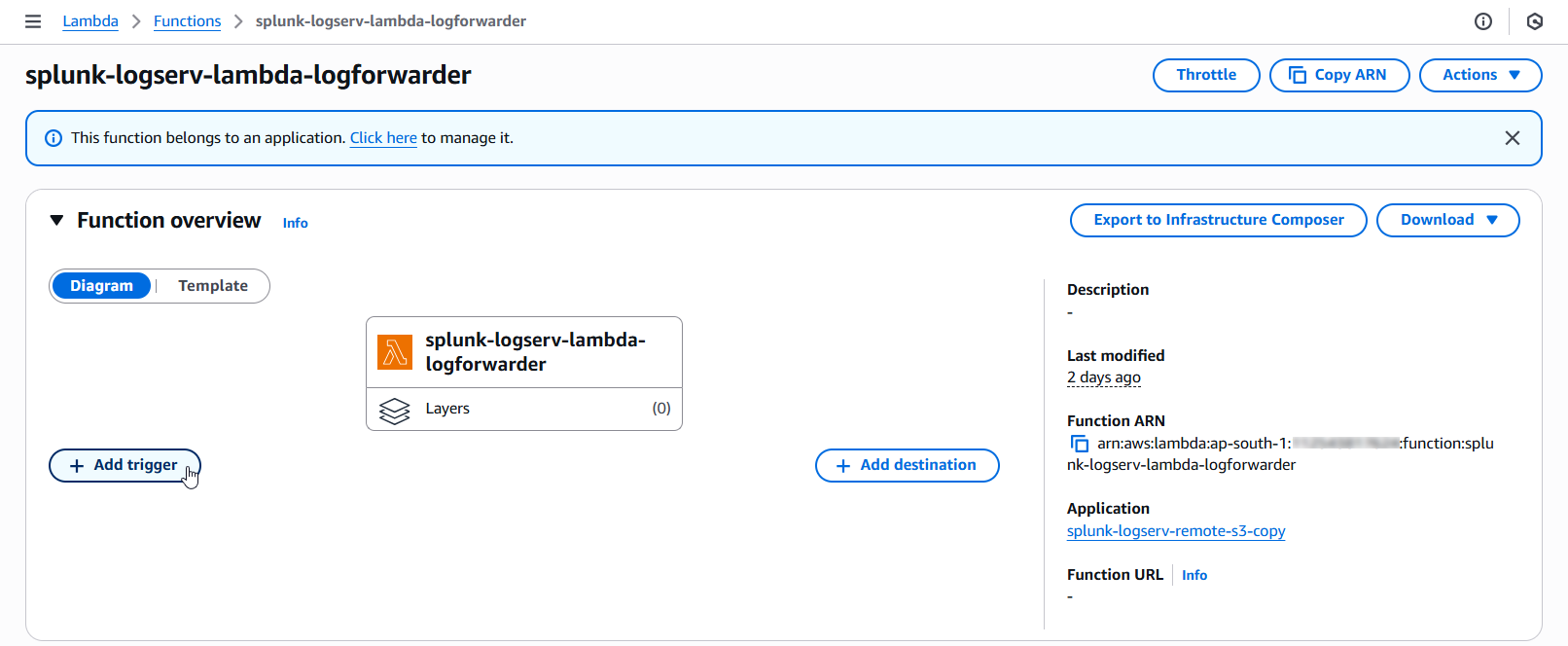

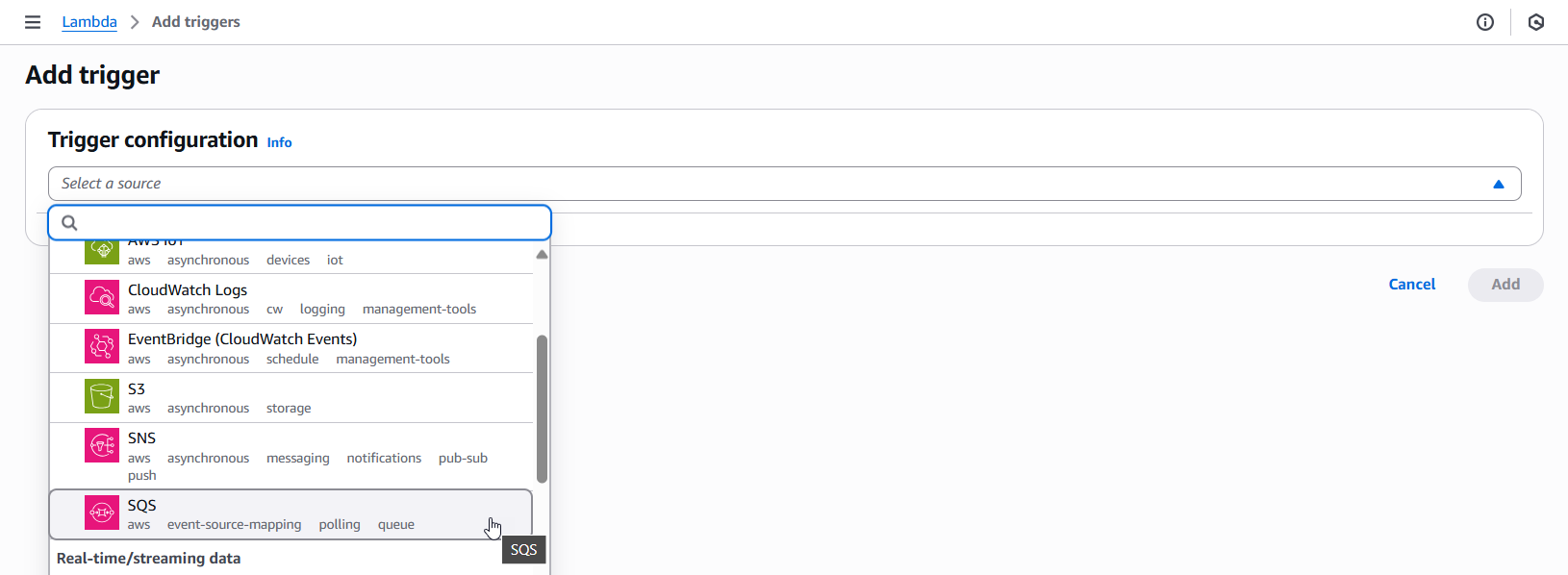

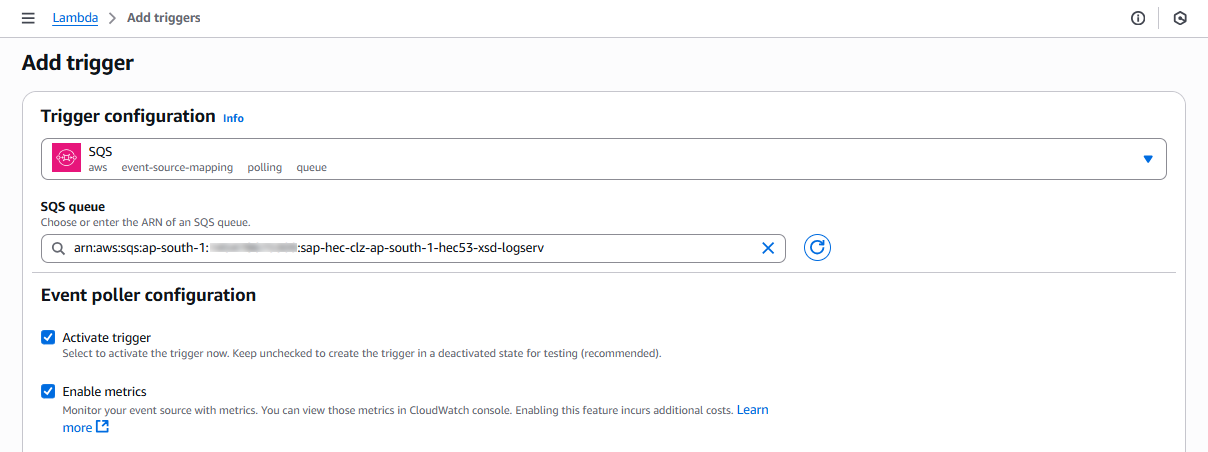

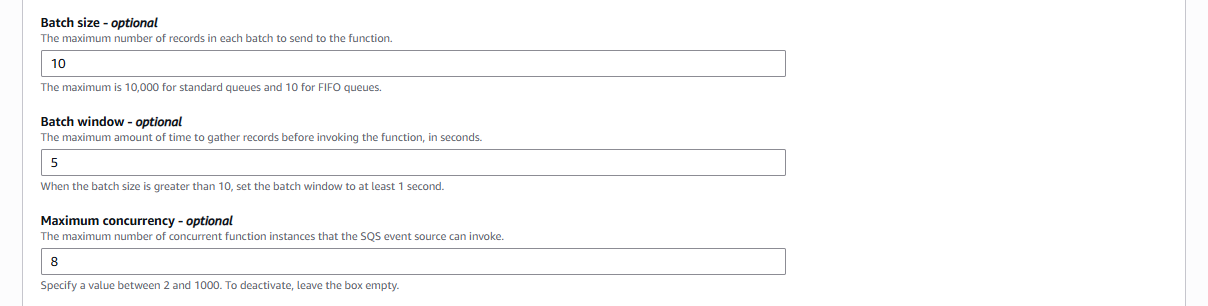

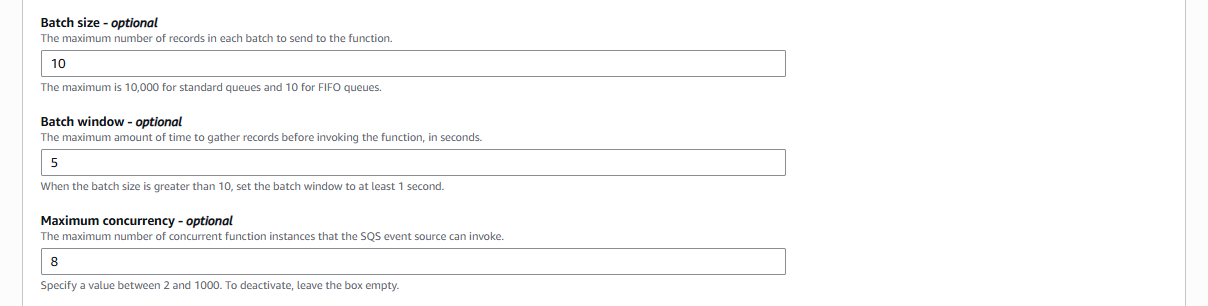

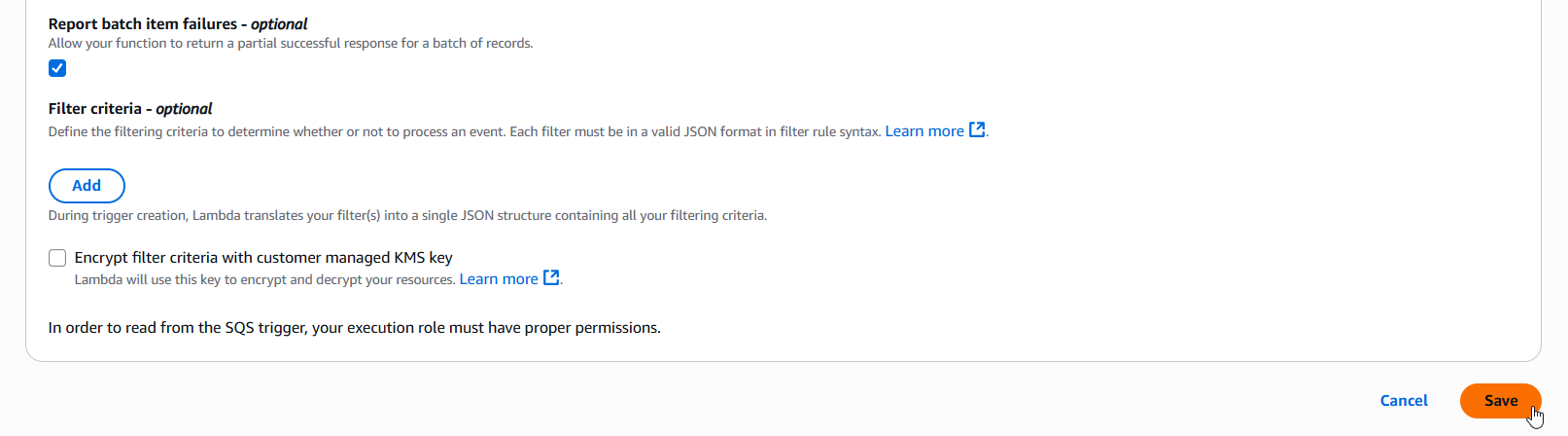

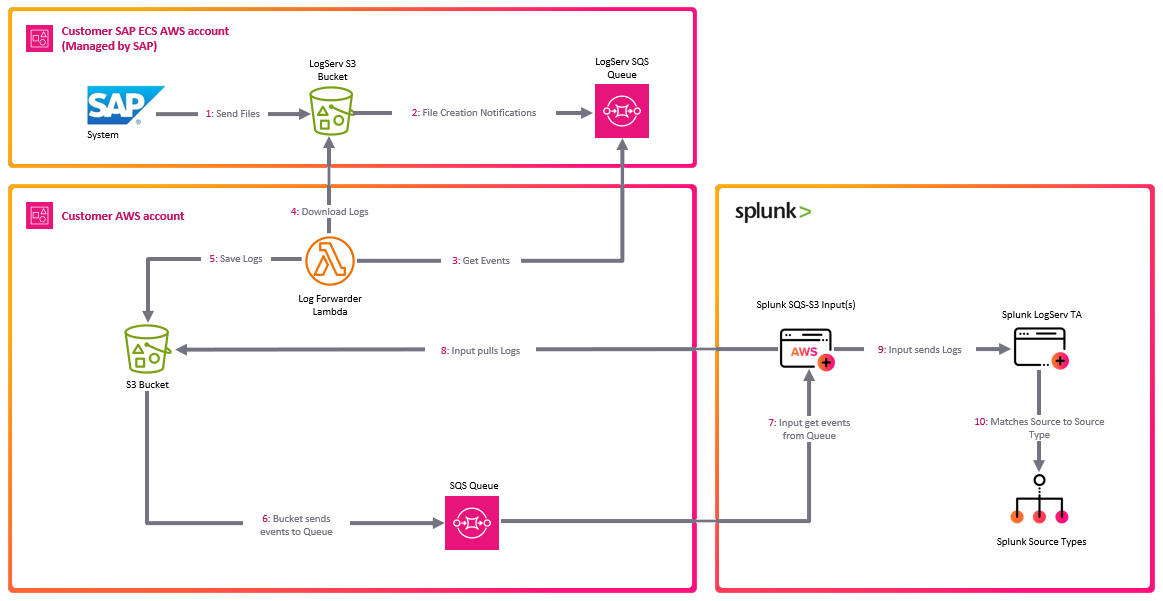

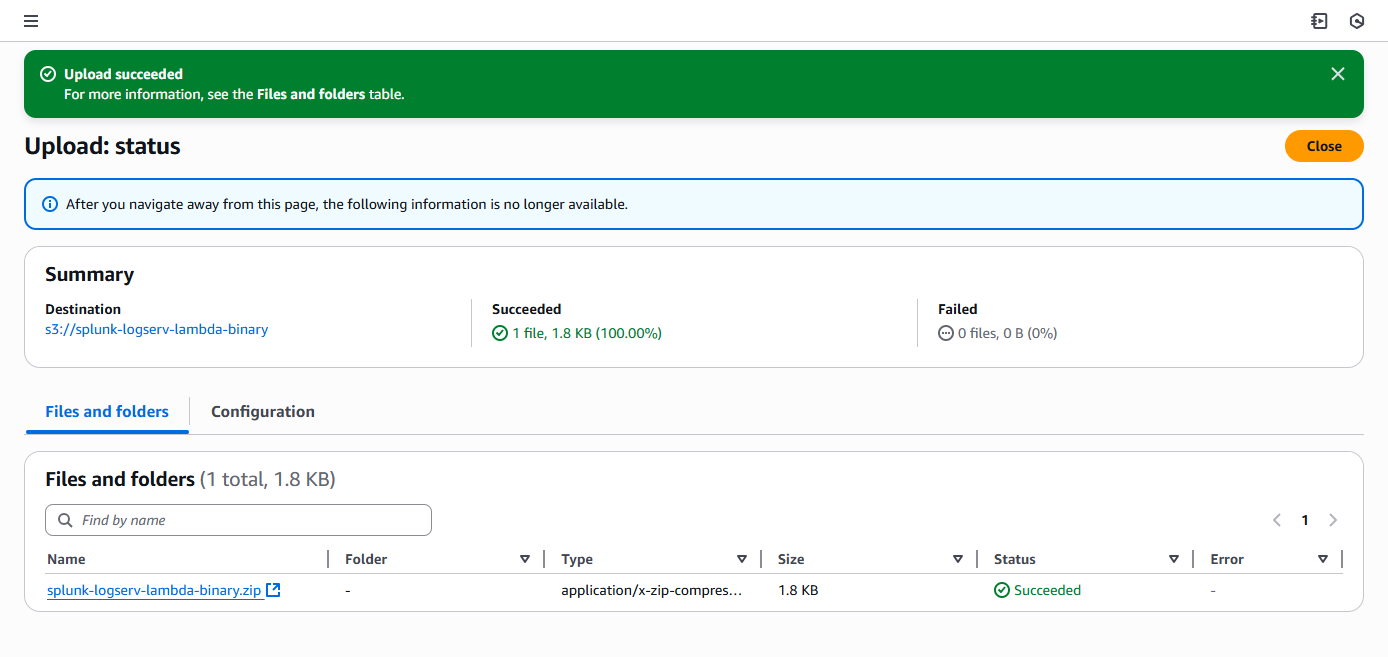

The diagram below shows how SAP LogServ data flows from the SAP ECS environment into Splunk:

    SAP ECS Environment
            |
            v
    SAP LogServ S3 Bucket (SAP-managed)
            |
            v  (S3 event notifications via SQS)
    Customer's AWS Account
    +-----------------------------------------------+
    | Destination S3 Bucket --> SQS Queue           |
    | (S3 events trigger SQS messages)              |
    +-----------------------------------------------+
            |
            v  (Splunk AWS Add-on reads from SQS)
    Splunk Heavy Forwarders
    +-----------------------------------------------+
    | 1. Ingest NDJSON from S3 via SQS              |
    | 2. Route to sourcetype (TRANSFORMS)           |
    | 3. Apply index-time filters (nullQueue)       |
    | 4. Forward to indexer                         |
    +-----------------------------------------------+
            |
            v
    Splunk Indexer
    +-----------------------------------------------+
    | Stores events in sap_logserv_logs index       |
    +-----------------------------------------------+
            |
            v
    Splunk Search Head
    +-----------------------------------------------+
    | LogServ App: dashboards + field extractions   |
    +-----------------------------------------------+

Index-Time Filtering¶

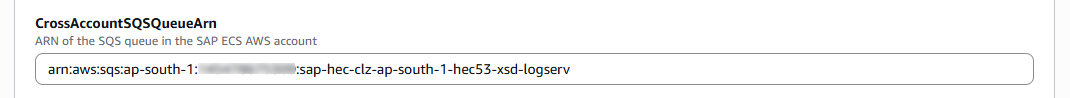

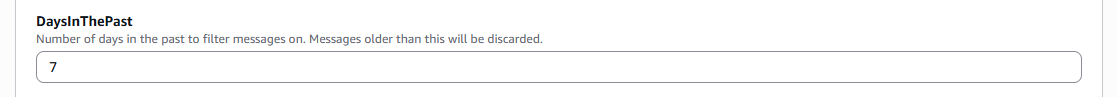

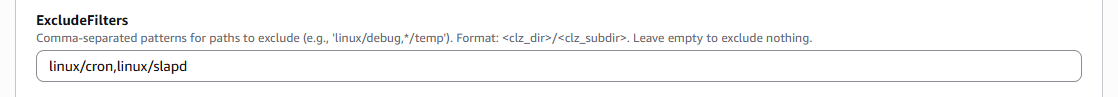

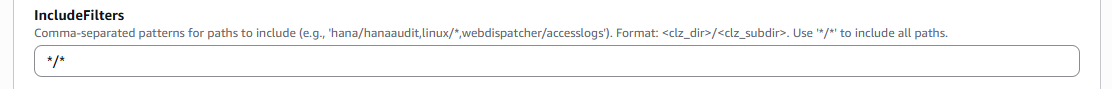

The Data TA provides built-in index-time filtering that lets you control which log types are indexed. Filtering happens on the Heavy Forwarders using TRANSFORMS-based queue routing:

- Include patterns – Only ingest log types that match the pattern (e.g., `linux/*` to include only Linux logs)

- Exclude patterns – Drop specific log types (e.g., `linux/cron` to exclude cron logs)

- Days in past – Drop data older than a specified number of days based on the S3 object path date

Filtered events are routed to nullQueue and never consume Splunk license. Filter settings are configured on the Deployment Server and pushed to Heavy Forwarders automatically.

See Configuring Filters for detailed setup instructions.
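The three filter settings can be read as a single keep-or-drop decision per event. The sketch below models only that decision in TypeScript; the real filtering runs as TRANSFORMS-based queue routing on the Heavy Forwarders. The `FilterSettings` shape, the rule that exclude patterns are checked after include patterns, and the `YYYY/MM/DD` segment in the S3 object key are all assumptions made for illustration, not the add-on's actual behavior.

```typescript
// Hypothetical model of the three filter settings (names are illustrative).
interface FilterSettings {
  include: string[];  // glob patterns such as "linux/*"; empty means "include everything"
  exclude: string[];  // glob patterns such as "linux/cron"
  daysInPast: number; // 0 means "no age limit"
}

type Queue = "indexQueue" | "nullQueue";

// Convert a simple glob ("*" is the only wildcard) into an anchored RegExp.
function globToRegExp(glob: string): RegExp {
  const escaped = glob.replace(/[.+?^${}()|[\]\\]/g, "\\$&").replace(/\*/g, ".*");
  return new RegExp("^" + escaped + "$");
}

function matchesAny(logType: string, globs: string[]): boolean {
  return globs.some((g) => globToRegExp(g).test(logType));
}

// Assumption: the S3 object key contains a YYYY/MM/DD segment.
function objectDate(s3Key: string): Date | null {
  const m = /(\d{4})\/(\d{2})\/(\d{2})/.exec(s3Key);
  return m ? new Date(Date.UTC(Number(m[1]), Number(m[2]) - 1, Number(m[3]))) : null;
}

function routeEvent(logType: string, s3Key: string, f: FilterSettings, now: Date): Queue {
  // Include list: when present, anything that does not match is dropped.
  if (f.include.length > 0 && !matchesAny(logType, f.include)) return "nullQueue";
  // Exclude list: assumed to take effect after the include check.
  if (matchesAny(logType, f.exclude)) return "nullQueue";
  // Days in past: drop objects whose path date is older than the limit.
  const d = objectDate(s3Key);
  if (f.daysInPast > 0 && d !== null) {
    const ageDays = (now.getTime() - d.getTime()) / 86400000;
    if (ageDays > f.daysInPast) return "nullQueue";
  }
  return "indexQueue";
}
```

With `include: ["linux/*"]`, `exclude: ["linux/cron"]` and `daysInPast: 7`, a recent `linux/messages` object is kept, while `linux/cron`, any non-Linux log type, and any object dated more than seven days back are routed to `nullQueue`.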

Sourcetype Routing¶

All SAP LogServ data arrives in a single generic format. The Data TA examines each event’s metadata during index-time parsing and routes it to the appropriate Splunk sourcetype. Routing is defined in transforms.conf using regex-based sourcetype assignment.

Two routing strategies are used:

- Source-path matching – For log types with unique `source` field values (e.g., `/var/log/messages` for Linux syslog, `/var/log/squid/access.log` for Squid proxy)

- Classification field matching – For SAP application logs that share similar source paths, routing matches the `clz_dir` and `clz_subdir` fields in the NDJSON envelope. When the same `clz_subdir` value appears under multiple `clz_dir` paths (e.g., `audit` exists under both `abap/` and `scc/`), compound lookahead regexes match both fields simultaneously to avoid collisions.

For the complete list of supported log types and their sourcetype mappings, see Supported Log Types.
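The two routing strategies can be sketched as an ordered rule table. This is an illustration in TypeScript, not the contents of the add-on's transforms.conf: the `example:*` sourcetype names are placeholders, and the exact JSON spelling of the envelope fields (`"clz_dir":"abap"`) is an assumption. The compound lookaheads are the part worth noting: each `(?=...)` asserts one field independently, so both must be present but their order in the event does not matter.

```typescript
interface RoutingRule {
  sourcetype: string;      // placeholder names, not the shipped sourcetypes
  field: "source" | "raw"; // which part of the event the pattern is tested against
  pattern: RegExp;
}

const rules: RoutingRule[] = [
  // Source-path matching: the source field alone identifies the log type.
  { sourcetype: "example:linux:messages", field: "source", pattern: /^\/var\/log\/messages$/ },
  // Classification-field matching: "audit" exists under both abap/ and scc/,
  // so each rule asserts clz_dir AND clz_subdir with two lookaheads.
  { sourcetype: "example:abap:audit", field: "raw", pattern: /^(?=.*"clz_dir":"abap")(?=.*"clz_subdir":"audit")/ },
  { sourcetype: "example:scc:audit", field: "raw", pattern: /^(?=.*"clz_dir":"scc")(?=.*"clz_subdir":"audit")/ },
];

// Return the sourcetype of the first matching rule, or the fallback.
function routeSourcetype(source: string, raw: string, fallback: string): string {
  for (const rule of rules) {
    const subject = rule.field === "source" ? source : raw;
    if (rule.pattern.test(subject)) return rule.sourcetype;
  }
  return fallback;
}
```

A rule that matched only `clz_subdir` would send SCC audit events to the ABAP sourcetype (or the reverse, depending on rule order); requiring both fields removes that collision.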

v0.0.5.0 React App Architecture¶

The LogServ App was rewritten as a React application in v0.0.5.0. The Data TA architecture is unchanged from v0.0.4.x — only the UI tier changed.

Stack:

- Build pipeline: webpack-based bundle build atop `@splunk/webpack-configs`. Each Splunk app page resolves to a single React bundle.

- UI primitives: `@splunk/react-ui` (forms, tables, modals), `@splunk/visualizations` (charts), `@xyflow/react` (Topology graph), `styled-components` for theming.

- State management: React context for cross-cutting concerns (AI Assistant, time range, refresh ticker); `useState` / `useReducer` for component-local state. No Redux.

- Data fetching: custom `useSearch` hook wraps `@splunk/search-job` for SPL dispatches; results expose `rows / loading / error` to consuming components.

- Routing: React Router 7 with `HashRouter` so URLs survive Splunk Web’s app-namespace routing; query strings carry time-range hydration (`?earliest=...&latest=...`).
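As an illustration of the time-range hydration mentioned in the routing item, the sketch below pulls `earliest` and `latest` out of a hash-route URL fragment. It is written without React Router so that it runs standalone; the function name and the `/host-details` route used in the usage note are hypothetical.

```typescript
interface HashRoute {
  route: string;     // the path portion after "#"
  earliest?: string; // Splunk time modifier, if present in the query string
  latest?: string;
}

// Parse a location hash such as "#/some-route?earliest=-24h&latest=now".
function parseHashRoute(hash: string): HashRoute {
  const withoutHash = hash.charAt(0) === "#" ? hash.slice(1) : hash;
  const q = withoutHash.indexOf("?");
  const route = q === -1 ? withoutHash : withoutHash.slice(0, q);
  const result: HashRoute = { route };
  if (q === -1) return result;
  for (const pair of withoutHash.slice(q + 1).split("&")) {
    const eq = pair.indexOf("=");
    if (eq === -1) continue;
    const key = decodeURIComponent(pair.slice(0, eq));
    const value = decodeURIComponent(pair.slice(eq + 1));
    if (key === "earliest") result.earliest = value;
    if (key === "latest") result.latest = value;
  }
  return result;
}
```

For example, `parseHashRoute("#/host-details?earliest=-24h%40h&latest=now")` yields the route `/host-details` with `earliest` decoded to `-24h@h` and `latest` set to `now`.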

Build-time feature flags:

The build supports compile-time variants. The first such flag is TEMPLATES_ONLY: when set, the resulting bundle has the AI Assistant’s free-form / LLM-driven flow disabled at compile time, NOT runtime — there is no runtime setting that could re-enable it. See the Templates-only Build page for the user-facing implications.
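A minimal sketch of how a compile-time flag of this kind typically behaves, assuming the constant is injected by the bundler (for example through webpack's DefinePlugin). It is hard-coded here so the snippet runs on its own, and the function and type names are illustrative rather than the app's real entry points.

```typescript
// Assumption: in a real build this value is substituted at compile time.
const TEMPLATES_ONLY: boolean = true;

type DispatchResult =
  | { kind: "system_notice"; message: string }
  | { kind: "llm_reply"; text: string };

// Hypothetical entry point for the free-form (LLM-driven) path.
function dispatchFreeForm(prompt: string): DispatchResult {
  if (TEMPLATES_ONLY) {
    // No runtime setting is consulted here. Once the flag is a literal
    // `true`, a minifier can drop the vendor branch below as dead code.
    return { kind: "system_notice", message: "Free-form prompts are disabled in this build." };
  }
  return { kind: "llm_reply", text: "(vendor call for: " + prompt + ")" };
}
```

Because the decision is made from a build-time constant rather than a stored setting, there is nothing an administrator could toggle after deployment to reach the second branch.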

Static-asset cache busting:

Splunk Web caches the React bundle’s asset URL by an integer [install] build field in app.conf. Every meaningful code change bumps this number; without bumping, browsers serve stale bytes after deploy. The 3-part SemVer in [id] version is independent of the build number and changes only on user-facing version bumps.

AI Assistant Architecture¶

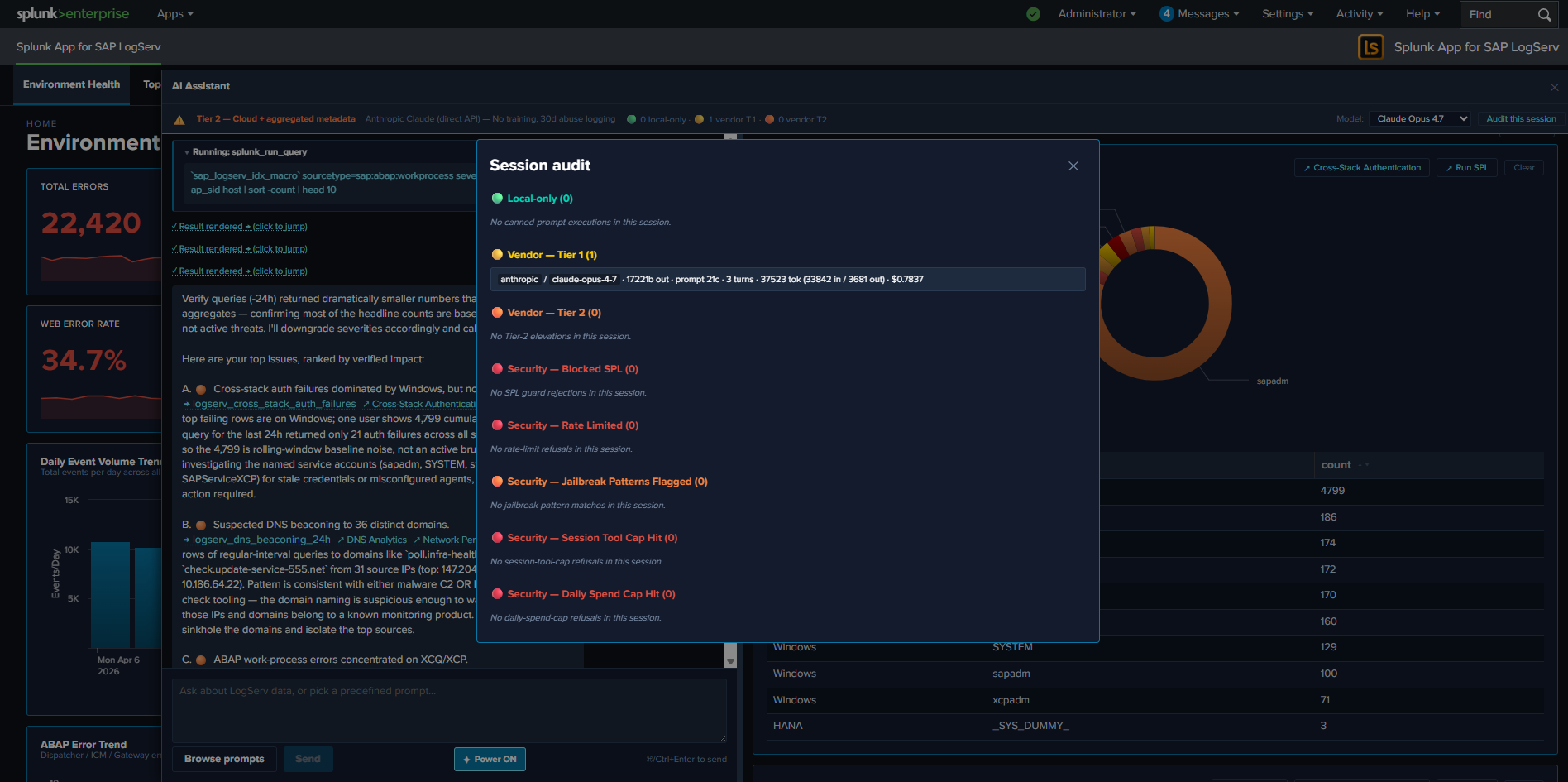

The AI Assistant is a chat-style panel embedded in the React UI App that lets analysts run pre-canned investigations + free-form prompts against their Splunk data. It has two distinct paths and a strong privacy invariant.

Privacy invariant — type-system-enforced, not policy-enforced:

    User question --> AI vendor --> AI picks tools --> MCP server --> Splunk
                          |                                             |
                          | <---- Hidden<MCPToolResult> <---------------+
                          |
                          v  (sanitize chokepoint: count + timing only, Tier 1)
                          |  (or aggregated metadata, Tier 2)
                          v
            AI synthesizes narrative reply

Tool results from the Splunk MCP Server are typed Hidden<MCPToolResult> in TypeScript. The compiler refuses to put a Hidden<T> value into the outbound vendor payload — the only way to convert it is via sanitize(hidden, summarizer), which forces the caller to provide a non-data summary. The summarizer is gated by the active privacy tier:

- Tier 0 (Ollama, future): air-gapped local LLM; no vendor traffic at all.

- Tier 1 (default): summary is `count + execution_time` only. The AI sees no values.

- Tier 2 (admin opt-in): summary adds aggregated metadata (per-column cardinality, top-N values + counts for categorical, min/max/avg/sum for numeric, time range when `_time` is present). Still no raw rows.
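The idea behind `Hidden<T>` and `sanitize(hidden, summarizer)` can be sketched in a few lines of TypeScript. This is a simplified illustration under stated assumptions, not the app's source: the field names on `MCPToolResult`, the `Tier1Summary` and `VendorToolMessage` shapes, and the `toJSON` backstop are all invented for the example.

```typescript
// Assumed shape of a tool result; the real type is not shown in this document.
interface MCPToolResult {
  rows: Array<Record<string, string | number>>;
  executionTimeMs: number;
}

// Opaque wrapper: the payload is private, so it cannot be read or copied
// into another object except through the single summarize() path.
class Hidden<T> {
  private readonly value: T;
  private constructor(value: T) { this.value = value; }
  static wrap<T>(value: T): Hidden<T> { return new Hidden<T>(value); }
  // Runtime backstop: an accidental JSON.stringify never emits the payload.
  toJSON(): string { return "[hidden]"; }
  static summarize<T, S>(hidden: Hidden<T>, summarizer: (value: T) => S): S {
    return summarizer(hidden.value);
  }
}

interface Tier1Summary { count: number; executionTimeMs: number; }

// The only way out of Hidden<T>: the caller must say what summary to produce.
function sanitize<T, S>(hidden: Hidden<T>, summarizer: (value: T) => S): S {
  return Hidden.summarize(hidden, summarizer);
}

const tier1 = (r: MCPToolResult): Tier1Summary =>
  ({ count: r.rows.length, executionTimeMs: r.executionTimeMs });

// Outbound message type: it has room for a summary and nothing else.
interface VendorToolMessage { role: "tool"; content: Tier1Summary; }

const toolResult = Hidden.wrap<MCPToolResult>({
  rows: [{ user: "alice", failures: 3 }],
  executionTimeMs: 42,
});

// @ts-expect-error Hidden<MCPToolResult> is not assignable to Tier1Summary.
const rejected: VendorToolMessage = { role: "tool", content: toolResult };

const accepted: VendorToolMessage = { role: "tool", content: sanitize(toolResult, tier1) };
```

The `@ts-expect-error` line is the point of the example: the assignment is a compile error, so a code path that forwards a raw tool result cannot be built. In this sketch the guarantee comes from the outbound message type accepting only `Tier1Summary`; a summarizer that returned rows would fail at the same assignment.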

Two paths:

- Predefined prompts (no LLM call): the user opens the prompt browser and clicks one of the 48 cataloged prompts. The orchestrator dispatches the saved search via the Splunk MCP Server, renders the result tile in the right pane, and appends a static interpretation + suggested-next-steps card. No vendor LLM is invoked. This is the path used in the templates-only build.

- Free-form prompts (LLM-driven): the user types a natural-language question. The orchestrator sends the system primer + user message + tool definitions to the active vendor (Anthropic / OpenAI / Azure OpenAI / AWS Bedrock). The vendor picks tools, the orchestrator dispatches them in parallel via MCP, the vendor sees only the privacy-tier summary, and the vendor synthesizes a narrative response.

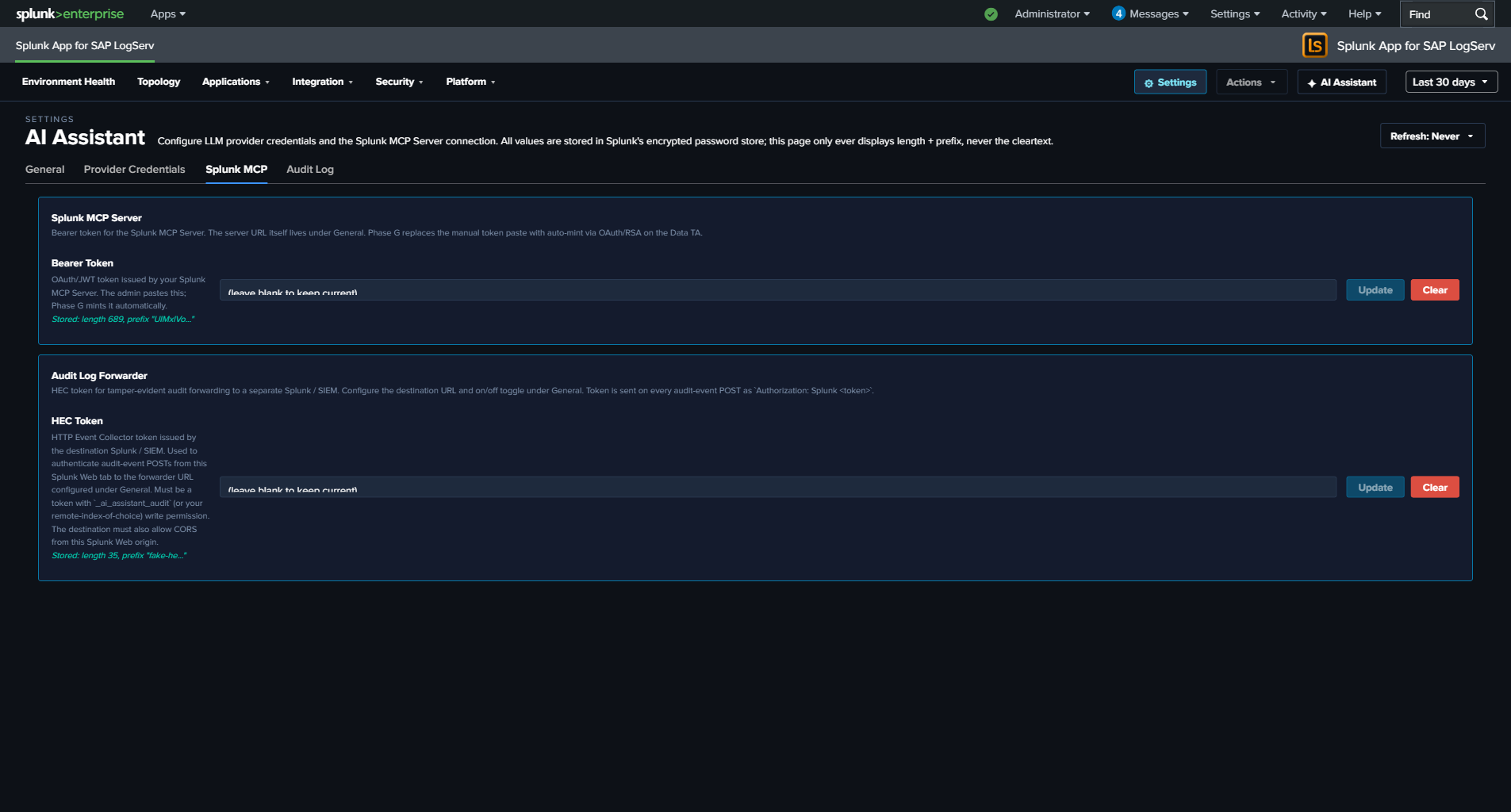

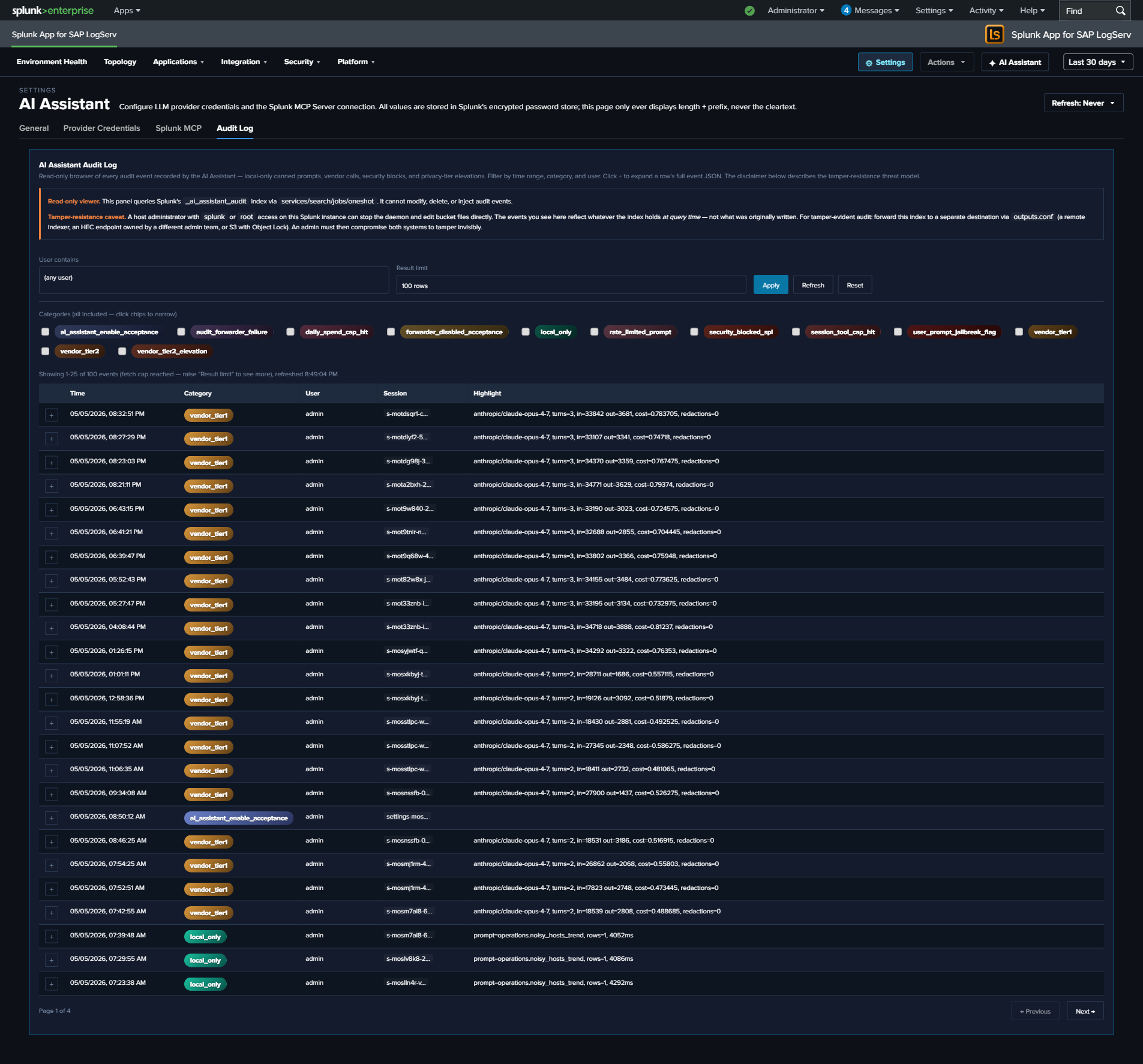

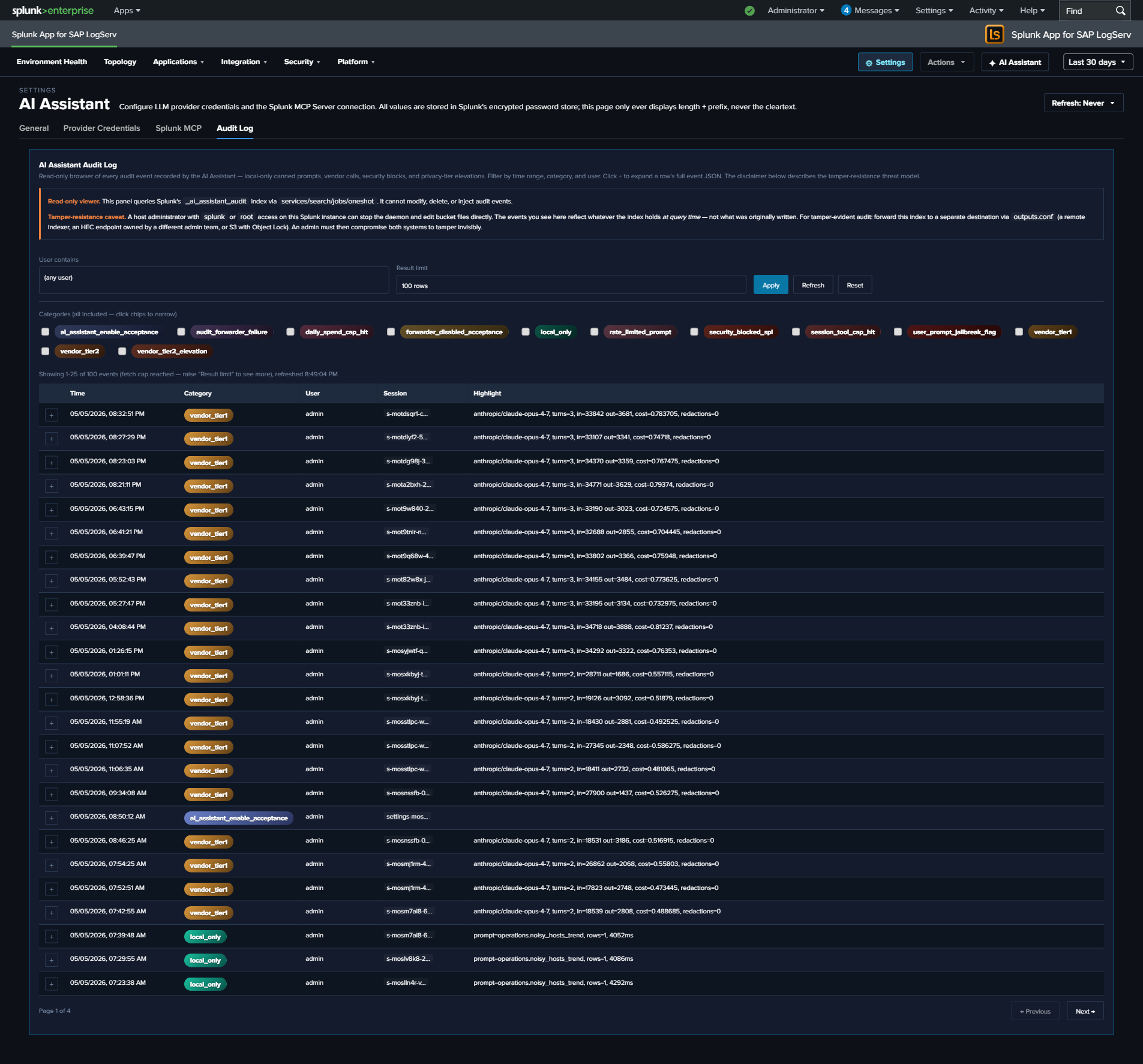

Audit log:

Every AI Assistant action — both paths — produces audit events into a dedicated _ai_assistant_audit index. Categories include local_only (canned-prompt dispatches), vendor_tier1 / vendor_tier2 (LLM calls with token counts + USD cost estimate), security_blocked_spl, user_prompt_jailbreak_flag, session_tool_cap_hit, daily_spend_cap_hit, audit_forwarder_failure, plus three legal-acknowledgement categories. The Audit Log tab in Settings provides an in-app browser; an optional HEC forwarder can stream events to a separate Splunk / SIEM destination for tamper-evidence.

Splunk MCP Server prerequisite:

The AI Assistant requires Splunk MCP Server (Splunkbase App 7931) v1.1.0 or later, installed on the same search head as the LogServ App. Cookie auth from the same Splunk Web session works by default on HTTP-only Splunk; an optional bearer token can be configured for OAuth-strict environments. See Splunk MCP Setup for end-to-end configuration, troubleshooting, and the auto-mint roadmap.

Environment Topology Architecture¶

The Environment Topology view (also accessible via URL slug integration-topology for backward compatibility) is a graph-based visualization of SAP systems, integration partners, and endpoints across the SAP landscape. It is implemented as a React component on top of @xyflow/react.

Data sources:

The view assembles its node + edge inventory from a union of six SPL searches against the existing sourcetypes (no new ingest required):

- `sap:abap:gateway`: RFC peer/local IPs (`P=<peer>` / `L=<local>` fields)

- `sap:abap:icm`: ICM peer/local IPs

- `sap:hana:tracelogs`: HANA host + tenant SID extracted from the source path (`/usr/sap/<HANA_SID>/HDB<inst>/<host>/trace/DB_<TENANT_SID>/`)

- `sap:saprouter`: peer hostname extracted from the parens after `host <ip>/<service> (<resolved.host>)`

- `linux_messages_syslog` with osquery `cpu_brand` events: host inventory (CPU, RAM, OS, region, AZ, instance ID)

- Default Splunk `host` field: fallback for hosts not surfaced in the above

Self-derived IP→SID inventory:

The “which IP belongs to which SID” mapping is derived from a multi-source union SPL with an `mvcount(sids)=1` filter: a host whose sourcetypes all agree on a single SAP SID is unambiguously attributed; otherwise it is surfaced as “unknown”. Resolution depends on what your data exposes: unique hostname/IP appearances across multiple SAP sourcetypes (HANA tracelogs, ABAP gateway L=, ICM peer fields, saprouter peer hostnames) attribute cleanly, while shared NAT IPs and external partners typically remain unknown. Additional inventory sources can be added by appending another union arm, so the inventory framework is extensible per customer without new ingest.
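The attribution rule (exactly one distinct SID per host, otherwise "unknown") is done in SPL in the app. The TypeScript sketch below restates the same logic over an in-memory list so the rule is easy to follow; the `Observation` shape and the sample values in the usage note are made up for illustration.

```typescript
// One (host, SID) sighting from any of the contributing sourcetypes.
interface Observation { host: string; sid: string; sourcetype: string; }

// Equivalent of collecting distinct SIDs per host and keeping mvcount(sids)=1.
function attributeSids(observations: Observation[]): Record<string, string> {
  const sidsByHost: Record<string, string[]> = {};
  for (const o of observations) {
    const sids = sidsByHost[o.host] || [];
    if (sids.indexOf(o.sid) === -1) sids.push(o.sid); // keep distinct values only
    sidsByHost[o.host] = sids;
  }
  const inventory: Record<string, string> = {};
  for (const host of Object.keys(sidsByHost)) {
    const sids = sidsByHost[host] || [];
    // Exactly one distinct SID means every source agrees; anything else is ambiguous.
    inventory[host] = sids.length === 1 ? String(sids[0]) : "unknown";
  }
  return inventory;
}
```

A host seen as `PRD` in both HANA tracelogs and ABAP gateway events resolves to `PRD`, while a shared NAT address that appears with both `PRD` and `QAS` stays `unknown`.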

Saved layouts:

User-arranged graph layouts are persisted via Splunk KV Store collection logserv_topology_layouts. The schema (currently v4) carries node positions, panel state, viewport zoom + pan, enabled integration types, selected node, active right-sidebar tab, and snap mode. Layouts are per-user-named (an admin can save a default layout that other users see; users can save their own variants). Schema migration is in-memory: v1 / v2 / v3 records still load.
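An in-memory migration of this kind usually means older records are upgraded when they are read, without rewriting the stored document. The sketch below assumes hypothetical field names, assumes older records carry only node and panel data, and picks arbitrary defaults; the real KV Store schema is not shown in this document.

```typescript
interface Point { x: number; y: number; }

// Hypothetical v4 shape based on the fields listed above.
interface LayoutV4 {
  schemaVersion: 4;
  nodes: Record<string, Point>;
  panels: Record<string, boolean>;
  viewport: { zoom: number; x: number; y: number };
  enabledTypes: string[];
  selectedNode: string | null;
  activeTab: string;
  snapMode: boolean;
}

// Assumption: v1 to v3 records hold at most node and panel data.
interface LegacyLayout {
  schemaVersion?: 1 | 2 | 3;
  nodes?: Record<string, Point>;
  panels?: Record<string, boolean>;
}

// Upgrade on read; the stored record itself is left untouched.
function migrateLayout(record: LegacyLayout | LayoutV4): LayoutV4 {
  if (record.schemaVersion === 4) return record as LayoutV4;
  const legacy = record as LegacyLayout;
  return {
    schemaVersion: 4,
    nodes: legacy.nodes || {},
    panels: legacy.panels || {},
    viewport: { zoom: 1, x: 0, y: 0 }, // illustrative defaults
    enabledTypes: [],
    selectedNode: null,
    activeTab: "Programs",
    snapMode: false,
  };
}
```

Callers then work against a single shape regardless of which version was saved, which is what lets v1 / v2 / v3 records keep loading.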

Live mode auto-refresh:

The toolbar’s Live mode toggle drives a 30-second auto-refresh that re-runs all SPL queries on the topology view; saved layouts are preserved across ticks. Coexists with the per-dashboard auto-refresh picker — currently both contribute additively to the refresh nonce; consolidation is planned for a future release.

Release Notes¶

Version 0.0.5.0-beta (latest)¶

AI Assistant LLM functionality intentionally disabled pending review

The v0.0.5 release ships with the AI Assistant’s LLM-driven path disabled at compile time pending internal review of the OWASP LLM Top 10 controls. Every customer running v0.0.5 runs the templates-only build variant — there is no separate “regular” build published in this release. What’s still active: the predefined-prompt path (48 canned prompts via the Splunk MCP Server), tool tiles in the right pane, drill-down chips, audit log, all 20 dashboards + Environment Topology view, per-dashboard auto-refresh picker, Download PNG. What’s disabled: free-form chat input, the model picker, the Power Mode toggle, the Provider Credentials Settings tab, and all vendor (Anthropic / OpenAI / Azure / Bedrock) traffic. The LLM-driven path will be re-enabled in a future release once review concludes — the type-system enforcement, privacy tiers, and OWASP Top 10 hardening are designed and implemented, just gated off via the build flag for now. See the AI Assistant → Templates-only Build docs page and the AI Assistant → OWASP LLM Top 10 Compliance page for the full picture.

Compatibility¶

| Property | Value |
|---|---|
| Splunk platform versions | 9.4.3 and later |
| CIM | 5.1.1 and later |
| Supported OS for data collection | Platform independent |
| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |
| AI Assistant prerequisite | Splunk MCP Server (Splunkbase App 7931) v1.1.0 or later, on the search head where the LogServ App is installed |

Major architecture change¶

The LogServ App is fully rewritten as a React-based application. Dashboard Studio v2 is no longer used for any of the 20 dashboards. The app now ships as a single React bundle built on @splunk/react-ui, @splunk/visualizations, and @xyflow/react. The Data TA architecture is unchanged from v0.0.4.x — only the UI App tier has been rewritten.

Implications for upgraders:

- Search-time field extractions are unchanged: your existing custom searches, alerts, and reports against `sap_logserv_logs` continue to work without modification.

- Dashboard URLs have changed: old DS v2 deep links (`/app/splunk_app_sap_logserv/<view>?form.global_time...`) are replaced with React Router hash routes (`/app/splunk_app_sap_logserv/home#/<route>?earliest=...&latest=...`). Time-range query params are preserved.

- Splunk 9.4.3+ remains the minimum version. No new floor.

- No data re-ingest required: the upgrade is UI-only.

New features¶

- AI Assistant: Splunk-aware chat panel with two paths:

    - Predefined prompts (no LLM call): browse 48 saved searches across three packs (`sap_basis` 13, `security` 14, `operations` 13) plus a context-aware Dashboard Focused tab that auto-filters to prompts relevant to the current dashboard. Each prompt dispatches via the Splunk MCP Server and renders a tile in the right pane with a static interpretation + suggested-next-steps card. No vendor LLM is involved in this path.

    - Free-form prompts (LLM-driven): the same MCP tool path is available to one of four AI providers (Anthropic, OpenAI, Azure OpenAI, AWS Bedrock); the LLM picks tools, the orchestrator dispatches, and the LLM synthesizes a narrative response. Critical privacy invariant, enforced by the TypeScript type system at build time rather than by policy: no event data from your Splunk instance is ever transmitted to any AI vendor. The compiler refuses to put any tool-result value into the outbound payload; there is no runtime check and no flag to flip.

- Three privacy tiers for the free-form path, admin-selectable in Settings:

    - Tier 0: Ollama-based local-only (future release).

    - Tier 1 (default): cloud LLM as SPL generator. Tool result summary fed back is only `count + timing`. The AI sees no row data and no aggregates.

    - Tier 2 (admin opt-in): adds aggregated metadata (cardinality, per-column top-N values + counts, min/max/avg/sum for numeric columns, and time range). Still no raw rows.
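To make the Tier 2 summary concrete, here is a rough TypeScript sketch of a per-column aggregator that returns cardinality plus either top-N value counts (categorical) or min/max/avg/sum (numeric). The type names and the rule "a column is numeric when every value is a number" are assumptions, and the time-range part is omitted for brevity.

```typescript
type Row = Record<string, string | number>;

interface NumericStats { min: number; max: number; avg: number; sum: number; }

interface ColumnSummary {
  cardinality: number;
  topValues?: Array<{ value: string; count: number }>; // categorical columns only
  stats?: NumericStats;                                // numeric columns only
}

// Aggregate one column; individual rows never appear in the returned summary.
function summarizeColumn(rows: Row[], column: string, topN: number): ColumnSummary {
  const counts: Record<string, number> = {};
  const numbers: number[] = [];
  let seen = 0;
  for (const row of rows) {
    const v = row[column];
    if (v === undefined) continue;
    seen += 1;
    const key = String(v);
    counts[key] = (counts[key] || 0) + 1;
    if (typeof v === "number") numbers.push(v);
  }
  const values = Object.keys(counts);
  const summary: ColumnSummary = { cardinality: values.length };
  if (seen > 0 && numbers.length === seen) {
    const sum = numbers.reduce((a, b) => a + b, 0);
    summary.stats = { min: Math.min(...numbers), max: Math.max(...numbers), avg: sum / seen, sum };
  } else {
    summary.topValues = values
      .map((value) => ({ value, count: counts[value] || 0 }))
      .sort((a, b) => b.count - a.count || (a.value < b.value ? -1 : 1))
      .slice(0, topN);
  }
  return summary;
}
```

Given three rows with `user` values `alice`, `bob`, `alice`, the `user` column summarizes to cardinality 2 with `alice` (count 2) as the top value. A summarizer like this would be the function handed to the sanitize step when Tier 2 is active.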

-

Environment Topology — graph-based view of SAP systems, integration partners, and endpoints. Built on

@xyflow/reactwith a force-directed initial layout, self-derived IP→SID inventory drawn from multiple SAP sourcetypes (gateway L=, HANA tracelogs, ICM peer fields, saprouter peer hostnames), per-node sidebar tabs (Programs, Calls/Hr, Errors, Hosts), Live mode auto-refresh, and named saved layouts persisted via Splunk KV Store (schema v4 — viewport zoom + pan + enabled-types + selected-node + active-tab + snap-mode). -

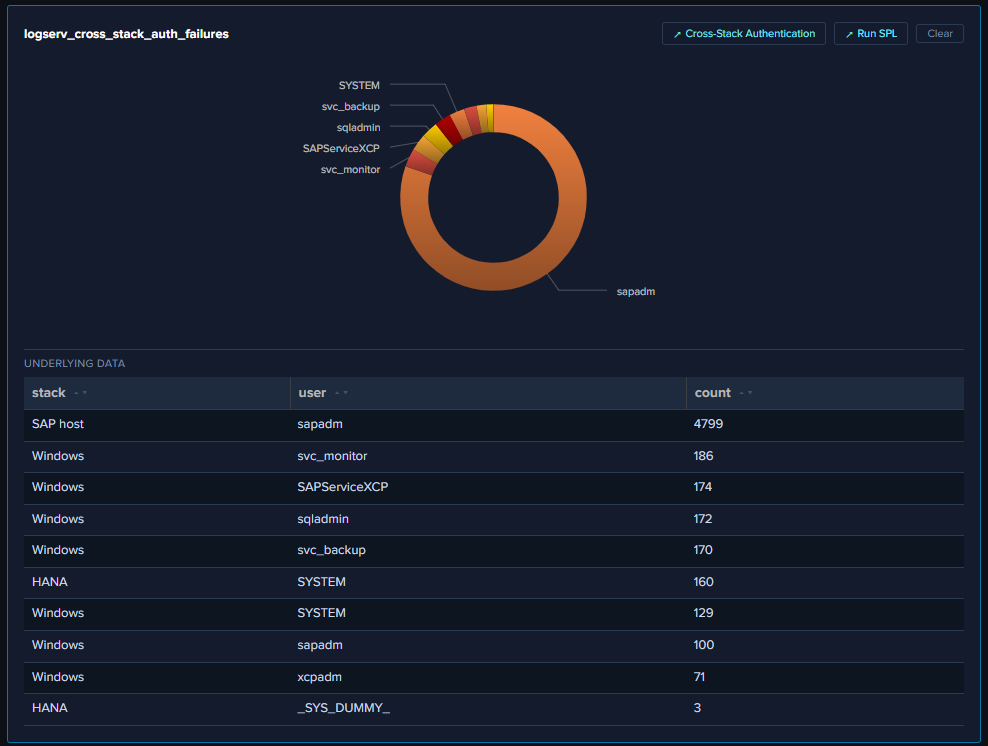

Drill-down chips — every tool result tile in the AI Assistant’s right pane carries a

↗ Dashboardchip (when a related OOTB dashboard is mapped) and a↗ Run SPLchip that opens Splunk’s Search app with the dispatched SPL pre-populated and the dispatch’s exact earliest/latest pre-applied. Same chips render alongside[→ saved_search]citations in the chat narrative on the left pane. Dashboards themselves also got drill-downs: ~70 KPIs / charts / tables / table rows across 19 dashboards open contextual cross-cutting searches with current time range preserved. -

Per-dashboard auto-refresh picker — every dashboard’s title row now carries a Refresh picker (Never / 30s / 1m / 5m / 15m / 30m / 1hr) with per-user-per-dashboard cadence persisted to a new KV Store collection (

logserv_dashboard_refresh). All charts and KPIs re-run on each tick via a shared context nonce. -

OWASP LLM Top 10 (2025) compliance — every item has a matching control. Highlights: prompt-injection sanitization with role-marker + jailbreak-pattern filtering; type-bounded data redaction; SBOM (1416 components, CycloneDX 1.4) shipped with every build; tamper-evident audit log with optional HEC forwarder; per-user rate limit (configurable, default 30/hr); USD spend cap; SPL static-analysis guard blocking write/delete/alert operators; PII redaction for

email/user(name)/*_ip/mac/account(hostname opt-in); session tool-call cap; jailbreak pattern detection on user input. See OWASP LLM Top 10 Compliance for the full controls list per item. -

Templates-only build variant — a deployable variant of the LogServ App that disables the LLM-driven flow at compile time. The MCP path + 48 canned prompts + tool tiles + drill-down chips + audit log all stay fully active so the solution can be demonstrated end-to-end without enabling any LLM provider. UI cues: chat input disabled with explanatory placeholder; Send button disabled; model picker hidden; Power Mode toggle hidden; Provider Credentials Settings tab hidden; cyan info-tone banner explains the build mode. Defense in depth: the LLM dispatch entry point bails immediately with a system notice if reached at runtime.
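The SPL static-analysis guard listed among the OWASP controls can be pictured as a check on every piped command before dispatch. The sketch below is a deliberately simple approximation: the blocklist is an assumption (the document says only that write/delete/alert operators are blocked), and splitting on `|` is far cruder than a real SPL parser.

```typescript
// Assumed blocklist of SPL commands that write, delete, or alert.
const BLOCKED_COMMANDS = ["delete", "collect", "outputlookup", "outputcsv", "sendemail", "sendalert"];

interface GuardResult { allowed: boolean; blockedCommand?: string; }

function guardSpl(spl: string): GuardResult {
  // Blank out double-quoted literals so a "|" inside a string is not
  // mistaken for a command boundary.
  const withoutStrings = spl.replace(/"(?:[^"\\]|\\.)*"/g, '""');
  // Everything after the first pipe is a sequence of commands; pipes inside
  // subsearches are split too, which errs on the side of blocking.
  const segments = withoutStrings.split("|").slice(1);
  for (const segment of segments) {
    const m = /^\s*([A-Za-z_]+)/.exec(segment);
    const command = m ? m[1].toLowerCase() : "";
    if (BLOCKED_COMMANDS.indexOf(command) !== -1) {
      return { allowed: false, blockedCommand: command };
    }
  }
  return { allowed: true };
}
```

A search such as `index=sap_logserv_logs | stats count by host` passes, while `index=sap_logserv_logs | delete` is rejected with `blockedCommand` set to `delete`. A blocked dispatch would correspond to the `security_blocked_spl` audit category described earlier.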

-

Power Mode — role-gated

✦ Powertoggle in the AI Assistant chat input. Admin assigns a list of Splunk roles (viaservices/authorization/roles) that may see the toggle; when on, every prompt forces a saved-search dispatch before LLM synthesis (forced-RAG). State persists per-tab in sessionStorage. Audit events tag the toggle state for SOC pivot analysis. -

TIME-WINDOW REASONING primer rules — the AI Assistant’s system primer (Tier 1 + Tier 2) now teaches the LLM to: (a) identify the dispatch window before claiming severity, (b) normalize cumulative count to events/hour or events/day before ranking, (c) for any finding ranked

[severity:high]or[severity:critical], dispatch ONE additional verify query withearliest=-24h latest=nowBEFORE writing the narrative, and (d) state the window precisely in narrative (“X events in the last 24h” vs. “X cumulative over the search’s rolling window”). The result: the AI now self-corrects in one turn instead of needing a follow-up prompt to re-rank cumulative-noise findings. -

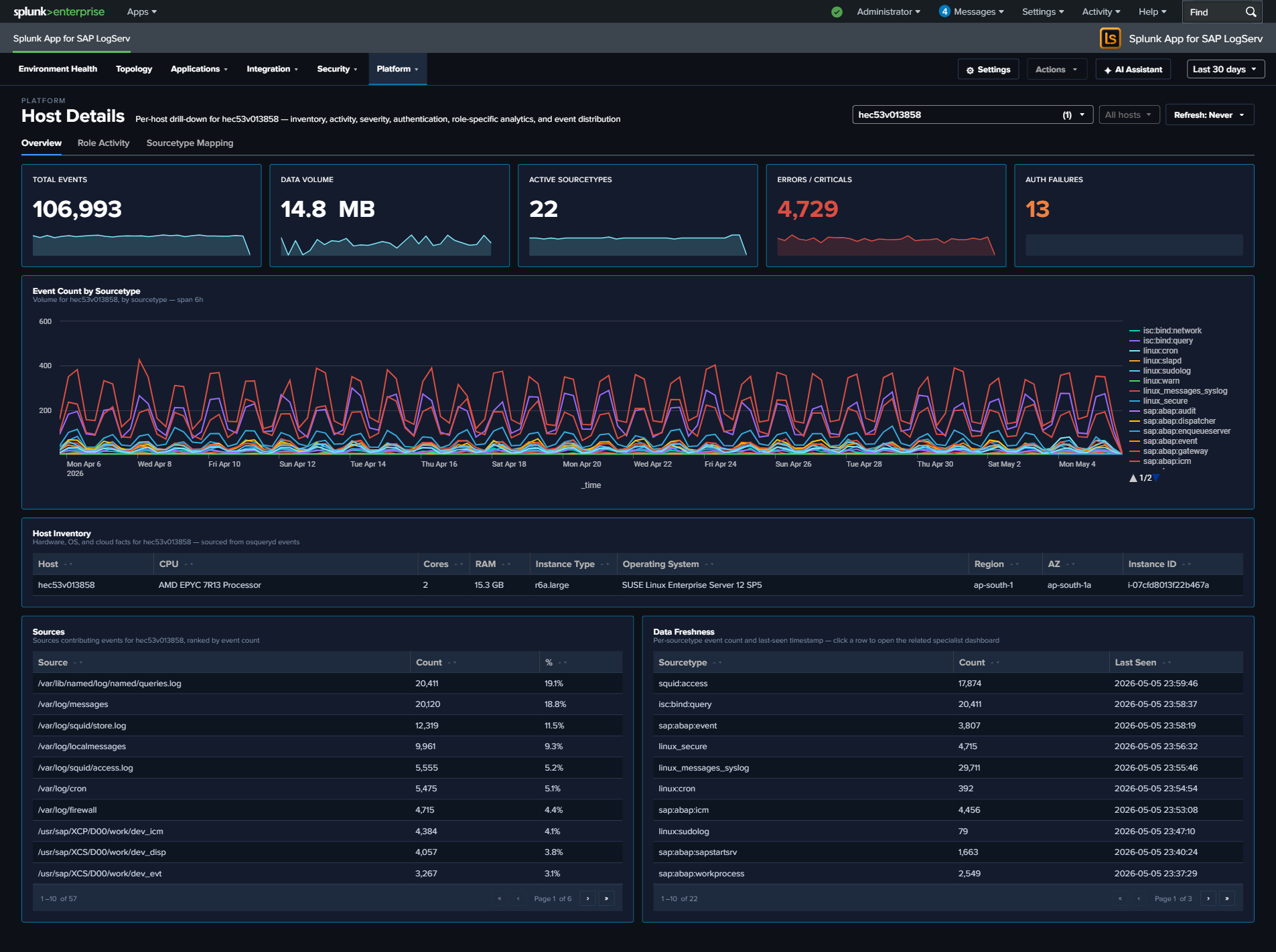

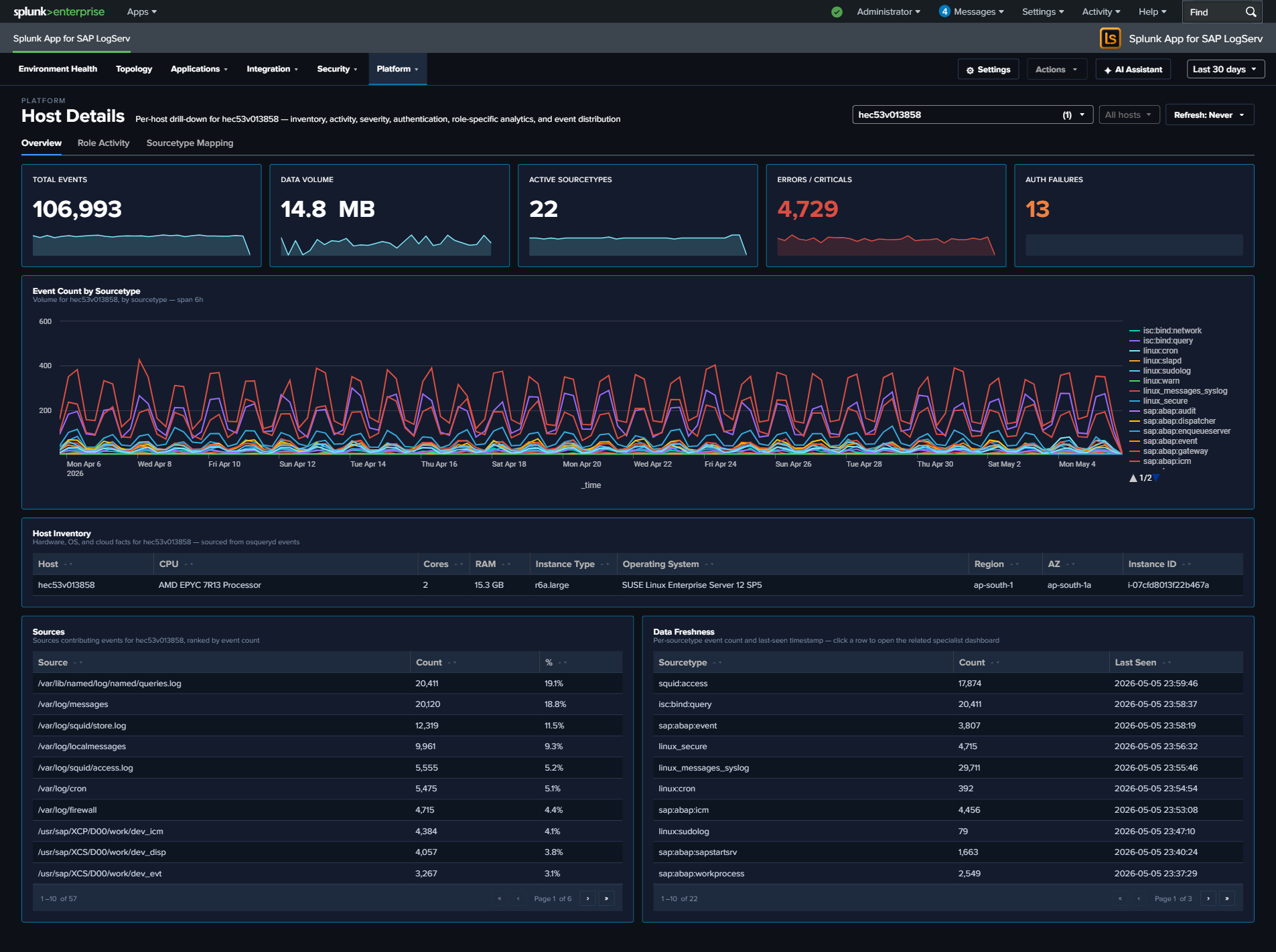

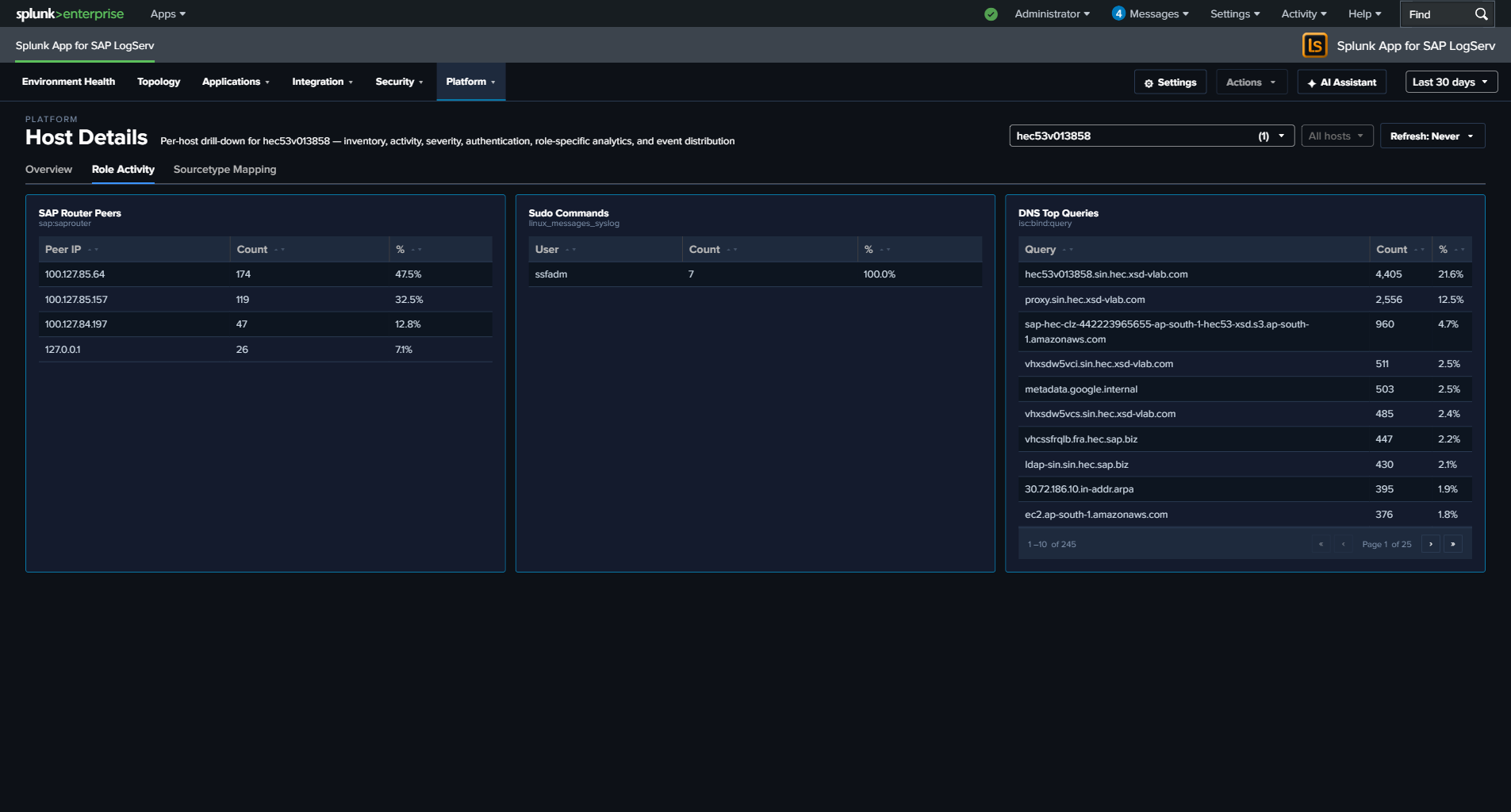

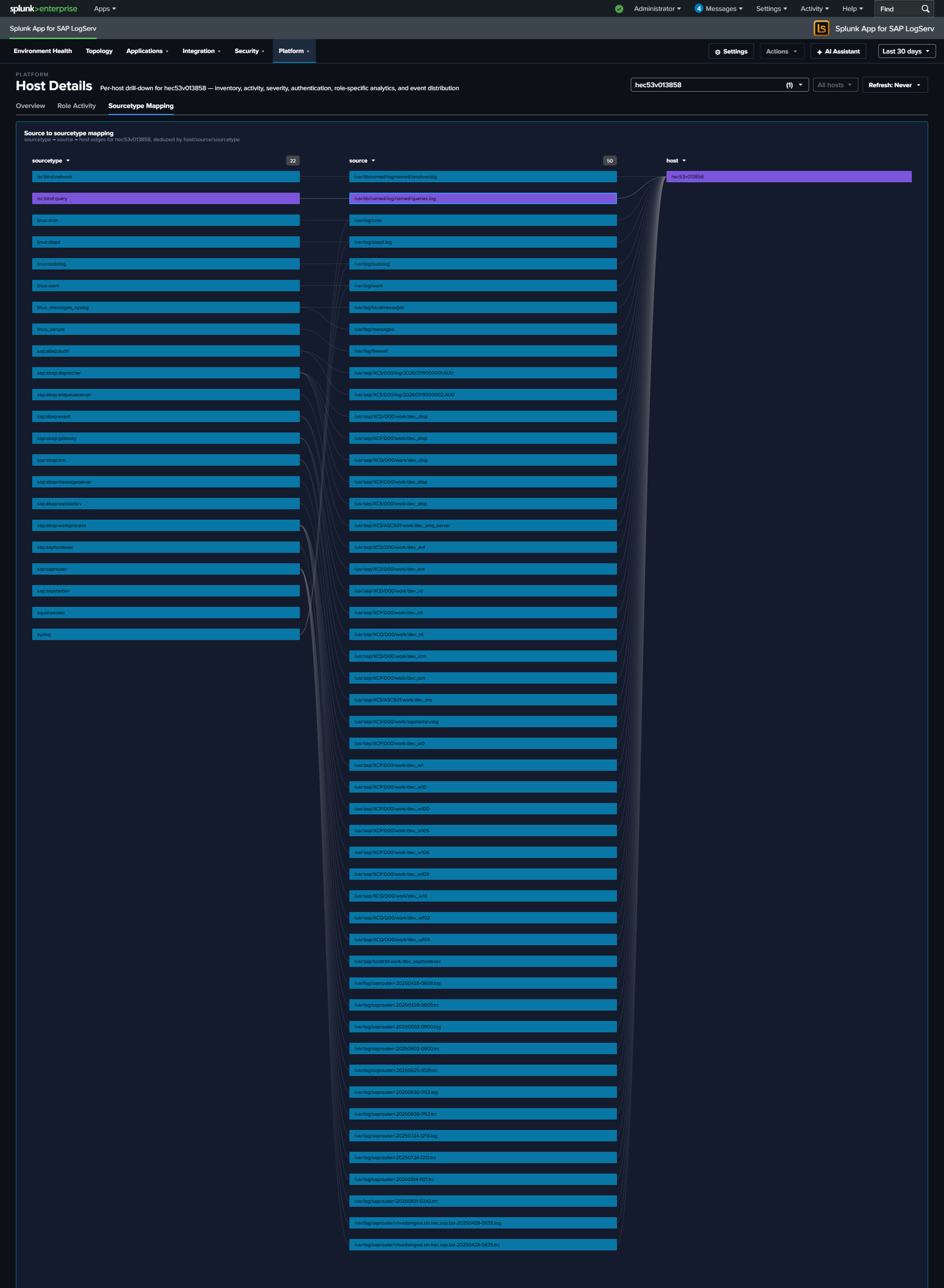

HostDetails multi-host filter + 3-tab layout — the Host Details dashboard’s host picker is now a

Multiselectwith filter input + Select-All-Matches semantics. Multi-host scope is reflected in URL (?hosts=h1,h2,h3) with localStorage persistence. SPL builders splice ahost IN (...)clause when 2+ hosts are selected. Three tabs: Overview (5 KPIs + charts + Host Inventory + Severity Timeline), Role Activity (7 role-specific panels withhideWhenNoData), Sourcetype Mapping (Sankey of source → sourcetype). -

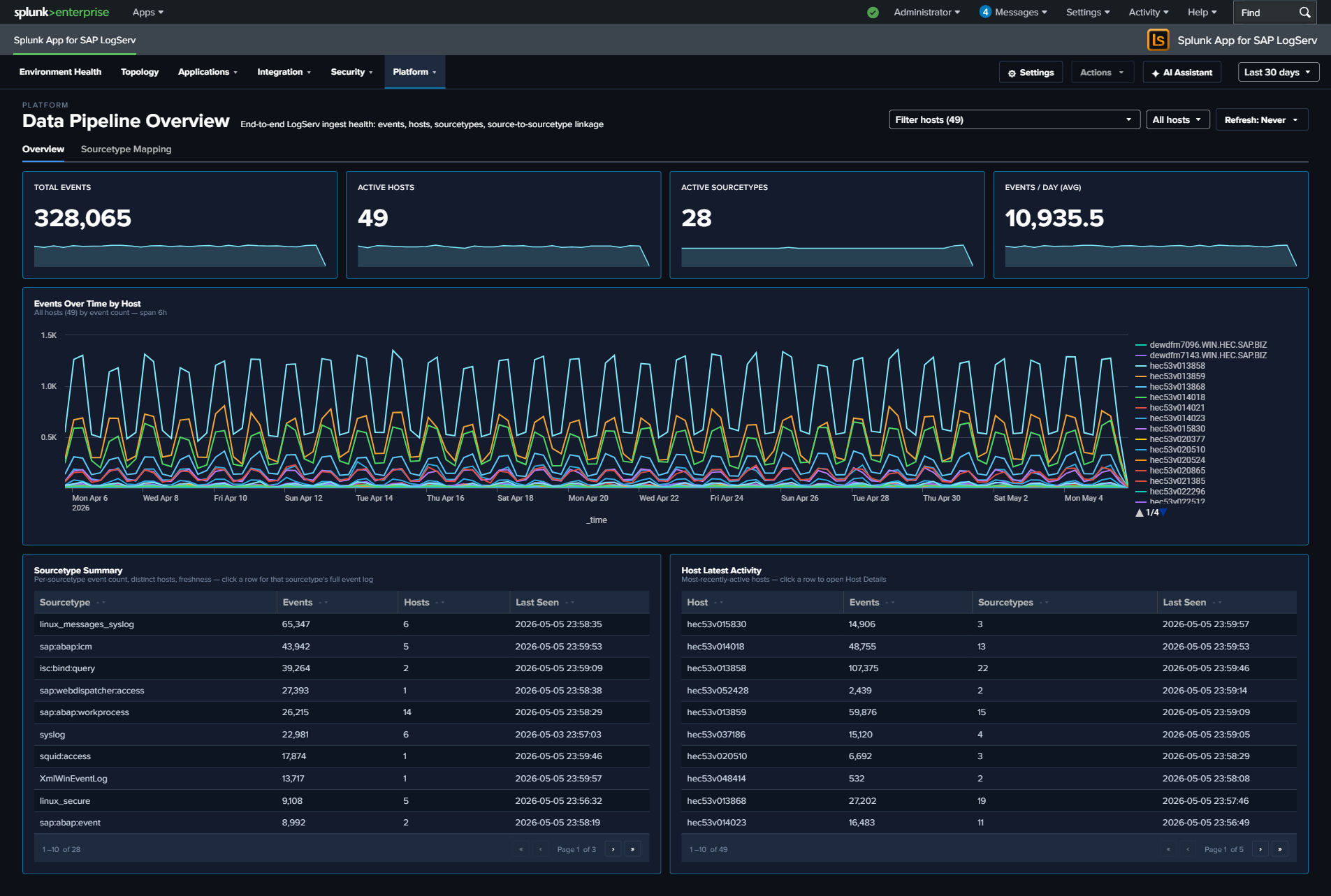

Data Pipeline Overview dashboard-wide host filter — Multiselect + Top-N picker lifted from the chart-level actions slot to the dashboard’s title row. Filter scope expanded from one chart to all 4 KPIs + 4 panels + the Sourcetype Mapping linked graph on the second tab.
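The multi-host scope described for Host Details can be sketched as two small helpers: one reads the `?hosts=` parameter and one builds the SPL clause. Both function names are hypothetical, and the behavior for zero hosts (no clause) and one host (plain equality) is an assumption; the document specifies only the `host IN (...)` form for two or more hosts.

```typescript
// Read "?hosts=h1,h2,h3" from a query string into a list of host names.
function parseHostsParam(query: string): string[] {
  const m = /[?&]hosts=([^&#]*)/.exec(query);
  if (!m) return [];
  return decodeURIComponent(m[1]).split(",").filter((h) => h.length > 0);
}

// Quote a value for SPL, escaping backslashes and double quotes.
function quoteSpl(value: string): string {
  return '"' + value.replace(/\\/g, "\\\\").replace(/"/g, '\\"') + '"';
}

// Build the host filter that gets spliced into each panel's search.
function hostClause(hosts: string[]): string {
  if (hosts.length === 0) return "";                            // assumed: no filter
  if (hosts.length === 1) return "host=" + quoteSpl(hosts[0]);  // assumed: plain equality
  return "host IN (" + hosts.map(quoteSpl).join(", ") + ")";
}
```

For the URL fragment `?hosts=h1,h2,h3`, `hostClause(parseHostsParam(...))` produces `host IN ("h1", "h2", "h3")`.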

- Path B sourcetype migration: the legacy `[set_srctype_for_syslog]` transform has been split into four dedicated routing transforms producing four new sourcetypes: `linux:cron`, `linux:warn`, `linux:sudolog`, `linux:slapd`. This clears the AppInspect pretrained-sourcetype warning and avoids field-extraction collisions with `Splunk_TA_nix`’s built-in `[syslog]` stanza. Existing `sourcetype=syslog` data ages out per index retention; during the transition, dashboards search the old and new sourcetypes together with OR.

- Branded LS app icons: orange “LS” mark on a dark rounded-square frame. All three apps (UI App, Data TA, Index App) ship the same icon set at 36×36 + 72×72 in regular + Alt variants.

- Splunk-pattern legal acknowledgement: two compile-time legal/liability modals gate the master `enabled` toggle and the audit-forwarder-disabled save (matching Splunk’s `splunk_instrumentation` `optInVersion` framework). User identity, Splunk-stamped IP, timestamp, and a SHA-256 of the disclaimer revision are recorded in the audit log so subsequent acknowledgement reviews can prove which revision was acknowledged.

Enhancements¶

- 20 React-rewritten dashboards plus the new Environment Topology view: every one of the 20 v0.0.4.2 dashboards is a fresh React implementation, and the Environment Topology view is a new graph-based surface unique to v0.0.5. All dashboards use the unified dark theme (`#0d1117` page background, `#141b2d` panel fill, `#0877a6` panel outline) and ship the per-dashboard auto-refresh picker.

- Saved-Layout schema v4: the topology view’s saved layouts now persist viewport (zoom + pan), enabled integration types, selected node, active right-sidebar tab, and snap-mode in addition to the v3 node + panel positions. Schema migration is in-memory: v1 / v2 / v3 records still load.

- `Multiselect` + `Top-N` picker as a reusable title-row pattern: labelless inline cluster matching the visual idiom across HostDetails and Data Pipeline Overview.

- AI Assistant prompt browser tab persistence: the last selected pack tab is remembered across modal-open events, persisted per-tab via sessionStorage. Persists only when the user actually picked a prompt, not on casual tab-flipping.

- Static guidance card per canned prompt: each predefined prompt’s intent-map entry includes an `interpretation` paragraph + bulleted `nextSteps`. Surfaced as a “How to read this result” card after the tool tile lands. Skipped on the AI-driven path (the LLM writes its own commentary). 126 next-step entries split: 64 plain · 57 canned-prompt links · 5 custom-SPL links.

- Dashboard Focused prompt browser tab: first-position tab in the prompt browser that filters the 48 prompts down to those mapped to the current dashboard. Auto-hides when no prompts match. Pack-origin chips on each card so users can find the prompt back in its home pack.

- Audit Log Settings tab: read-only browser of the `_ai_assistant_audit` index with time-range / category / user / limit filters; per-row JSON expand. Inline disclaimer covers the tamper-resistance threat model and recommends HEC-forwarder mitigation. 12 audit categories with distinct gradient-fill chip colors.

- HEC audit forwarder: admin-configurable forwarding of audit events to a separate Splunk / SIEM / S3-with-Object-Lock destination. Browser-side dual-write at flush time. Failure events captured as a separate `audit_forwarder_failure` category so disabled / down forwarders are visible in the audit log itself.

- `Visible<T>` brand types: outbound-message types are tagged `Visible` and unwrap explicitly; the type system refuses to put a `Hidden<MCPToolResult>` into an outbound vendor payload, mechanically enforcing the privacy boundary.

- Dynamic timechart span: every time-series chart’s SPL passes a `timechartSpan` computed from the current time range so 30-day windows don’t render with 700 data points. Helper at `utils/timechartSpan.ts`.
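A helper like the one described for dynamic timechart spans could look roughly like the sketch below. The actual `utils/timechartSpan.ts` is not shown in this document, so the candidate spans, the `maxPoints` default of 100, and the signature are all assumptions.

```typescript
// Candidate spans, smallest first (an assumed list).
const SPANS: Array<{ seconds: number; label: string }> = [
  { seconds: 60, label: "1m" },
  { seconds: 300, label: "5m" },
  { seconds: 900, label: "15m" },
  { seconds: 3600, label: "1h" },
  { seconds: 21600, label: "6h" },
  { seconds: 86400, label: "1d" },
];

// Pick the smallest span that keeps the chart at or under maxPoints buckets.
function timechartSpan(earliestEpoch: number, latestEpoch: number, maxPoints: number = 100): string {
  const rangeSec = Math.max(1, latestEpoch - earliestEpoch);
  for (const s of SPANS) {
    if (rangeSec / s.seconds <= maxPoints) return s.label;
  }
  return "1d"; // very long ranges fall back to daily buckets
}
```

With these assumptions a 30-day window resolves to `1d` (30 buckets, where a fixed `1h` span would give 720), a 24-hour window to `15m` (96 buckets), and a one-hour window to `1m`. The result would be interpolated into the SPL as `| timechart span=<value> ...`.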

Fixed issues¶

- Stale aggregate framing in AI Assistant top-N responses — the LLM previously cited cumulative aggregates (“4,799 failed authentications”) as if they were active rates, leading to misleading “lock the accounts today” recommendations. Build 171’s TIME-WINDOW REASONING primer rules now force a verify query before high-severity claims, and the same cumulative number gets correctly downgraded with explicit “stale long-window aggregate, not an active brute-force” framing.

- Splunk risky-command safeguard on `nextSteps.spl` — two intent-map deep-dive strings used `| map maxsearches=1 search="..."` which Splunk flags as risky. Rewrote to first-class subsearch syntax. Intent map version bumped v0.0.8 → v0.0.9.

- AZ field bleeding into next osquery section — the Host Inventory panel’s `zone` regex now stops at the `#012` osquery section separator (`[^,#]+` instead of `[^,]+`), so AZ values like `ap-south-1a` no longer carry trailing data from adjacent fields.

- MCP cookie auth on same-session HTTP-only Splunk — verified empirically that the Splunk MCP Server v1.1.0 accepts cookie auth from the same Splunk Web session that’s serving the React app, so the default `mcp_server_url` works on HTTP-only Splunk with no bearer token configured. The optional bearer token layers on top via `Authorization: Bearer` and is invalidated on 401 with one retry.

- Splunk `services/authorization/roles` endpoint — Multiselect for the Power Users field reads roles from the correct path; `services/authentication/roles` (a common typo) silently 404s and produces a stuck “Loading roles…” UI.

- Splunk Web static-asset cache busting — every meaningful code change bumps `[install] build` in `app.conf` so browsers don’t serve stale bytes after deploy.

- Webpack `style-loader` requirement — adding `import '@xyflow/react/dist/style.css'` exposed a latent webpack-config gap where CSS was being compiled but never reaching the DOM. Both `style-loader` AND `css-loader` are now in the webpack rules.
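
The effect of the `zone` character-class change can be seen in a small sketch. The sample line and the `zone=` key layout are hypothetical stand-ins for the real osquery output; only the two character classes come from the fix described above.

```python
import re

# Hypothetical flattened osquery text: "#012" separates sections, and the
# next comma only appears inside the following section.
sample = "region=ap-south-1,zone=ap-south-1a#012os_version:name=SLES,major=15"

# Old class: runs until the next comma, so it swallows the next section.
old_zone = re.search(r"zone=([^,]+)", sample).group(1)

# New class: also stops at '#', so the match ends before the "#012" separator.
new_zone = re.search(r"zone=([^,#]+)", sample).group(1)

print(old_zone)
print(new_zone)
```

With the old class the captured value runs into the next section; with the new class it ends at the availability-zone name.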

Restyled (visual conventions)¶

- 20 React dashboards with the unified dark-theme card style: `#0d1117` page, `#141b2d` panel fill, `#0877a6` panel outline, 3 px rounded corners, 5 px inset, 12 px panel gaps. Equivalent to the v0.0.4.2 DS v2 look but rebuilt natively in styled-components.

- Severity dots — chat findings render with a colored dot (yellow → orange → red → dark-red for low → medium → high → critical) using a radial gradient so they read as glossy beads matching the donut-chart palette aesthetic.

- Win11-style 8-dot loading spinner — replaces the prior cyan-arc indicator in AI Assistant streaming + tool-executing states. CSS-only via single keyframe + per-dot `--angle` variable + staggered `animation-delay`. Reused in the Topology canvas loading overlay (extracted to a shared `Spinner` component).

- Cyan-light dotted-underline citation links — the AI’s `[→ saved_search]` citations render as clickable scroll-to-tile spans; sibling `↗ Dashboard` and `↗ Run SPL` chips use the same visual idiom.

- Compact Multiselect with Select-All-Matches — HostDetails + Data Pipeline Overview both use `@splunk/react-ui/Multiselect` with `compact + filter + selectAllAppearance="checkbox"` so typing into the filter narrows the dropdown and the Select All control auto-renames to “Select all matches”.

- Glossy severity-dot gradients — `radial-gradient(circle at 35% 30%, ...)` so dots read as 3D beads not flat circles.

- Audit-log filter chips with per-category gradients — 12 categories each get a distinct 3-stop linear gradient with mid-stop ~35–45% luminance for white-text readability, dim-when-unchecked via layered translucent-black wash so the text stays readable.

Known issues¶

- Tier 0 (Ollama, air-gapped) is not yet shipped. Tier 0 currently returns “not yet implemented” if selected. Planned for a future release.

- Auto-minting of MCP tokens (a roadmap item) is not yet shipped. Bearer tokens for the Splunk MCP Server still require manual paste in Settings → Splunk MCP. Planned for a future release.

- Splunk MCP TA gate is bypassed because the dependent TA isn’t yet identified on Splunkbase. The gate will be restored when a real TA is published.

- `hideWhenNoData` panel-disappearance behavior continues to apply on the HostDetails Role Activity tab. Expected behavior, but empty tabs can feel sparse on hosts that only forward a single sourcetype.

Third-party software attributions¶

The v0.0.5.0 LogServ App ships with THIRD-PARTY-NOTICES.md at the root of the installed app directory (and at the root of the GitHub release source tree). The file lists all 1235 unique top-level npm packages bundled with the React app — names, versions, declared licenses, repository URLs, and full LICENSE / NOTICE / COPYING text where available. License posture: 1012 MIT, 64 ISC, 57 Apache-2.0, 46 BSD-3-Clause, 22 BSD-2-Clause, 11 @splunk/* (covered as a Splunk Extension under §1.C of Splunk General Terms), plus a long tail of permissive licenses. No GPL / AGPL / LGPL components. See Third-Party Software for the full license-distribution summary and refresh policy.

A CycloneDX 1.4 SBOM (SBOM.json) is also regenerated on every build and shipped inside the package alongside THIRD-PARTY-NOTICES.md.

Version 0.0.4.2-beta¶

Compatibility¶

| Splunk platform versions | 9.4.3 and later |

| CIM | 5.1.1 and later |

| Supported OS for data collection | Platform independent |

| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |

New features¶

- 3 new SAP service sourcetypes — `sap:sapstartsrv` (SAP Start Service / Host Control Agent with auth and SSL/TLS negotiation fields), `sap:saphostexec` (SAP Host Agent execution logs), and `sap:saprouter` (SAP Router connection and trace logs). These cover the `sap/sapstartsrv`, `sap/saphostexec`, and `sap/saprouter` log types in the LogServ S3 bucket.

- 28 total sourcetype routing transforms with `@logserv_filter` annotations for index-time filter support.

- ~176 total search-time directives (EXTRACT, EVAL, FIELDALIAS) across all SAP-specific sourcetypes in the LogServ App.

- 15 new dashboards in the LogServ App, bringing the total to 20. Dashboards are organized into 4 purpose-driven navigation groups plus a top-level Environment Health landing page (reorganized from the previous 3-group structure so that the top menu is balanced and each group answers a specific class of question):

- Top-level — Environment Health (default landing)

- Applications (5 dashboards) — the SAP app runtime itself: ABAP Network & Security, ABAP Operations, Work Process Performance (new), HANA Audit, HANA Trace

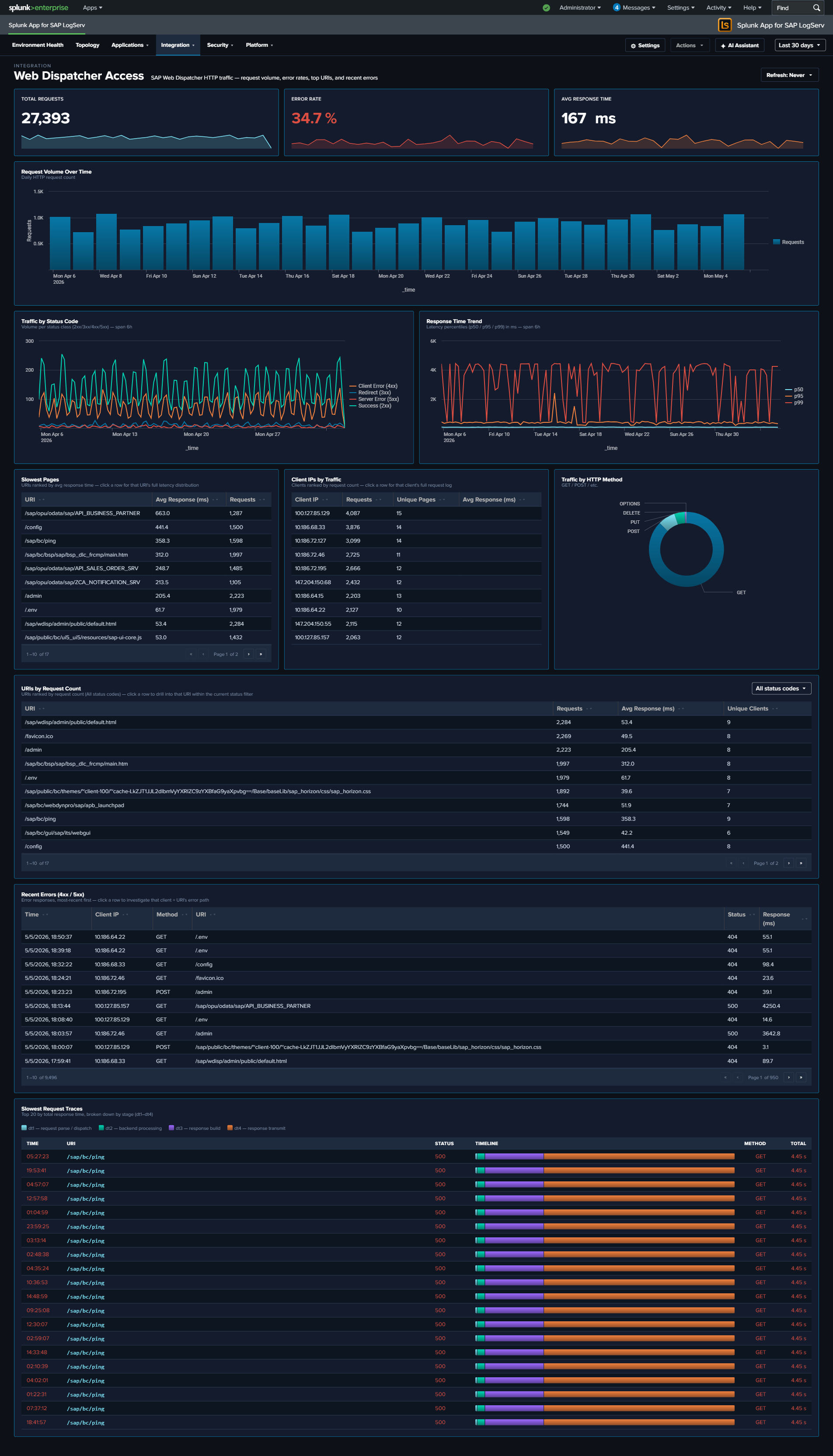

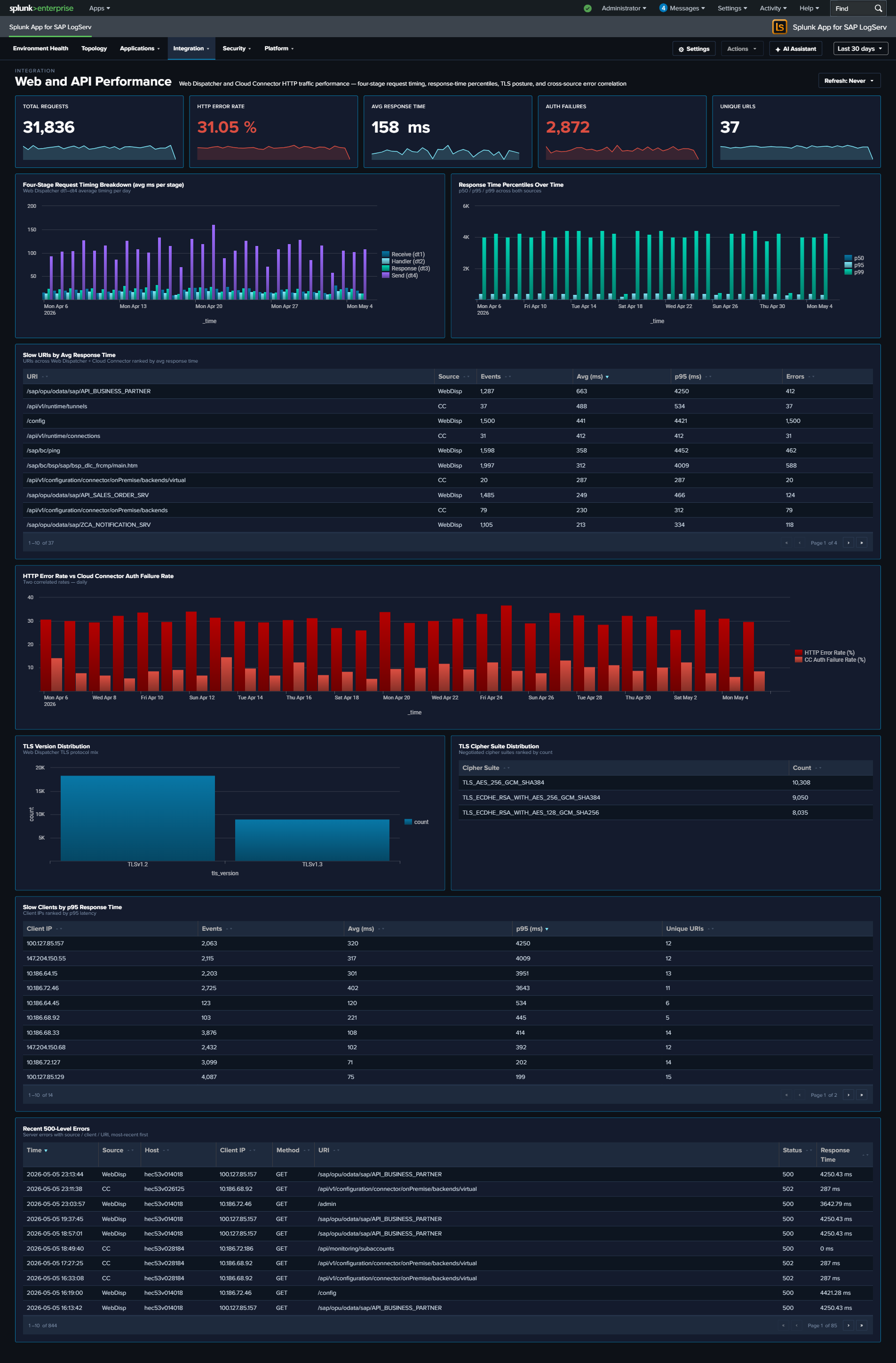

- Integration (5 dashboards) — how SAP talks to other systems: SAP Services, SAP Router (new), Cloud Connector, Web Dispatcher, Web and API Performance (new)

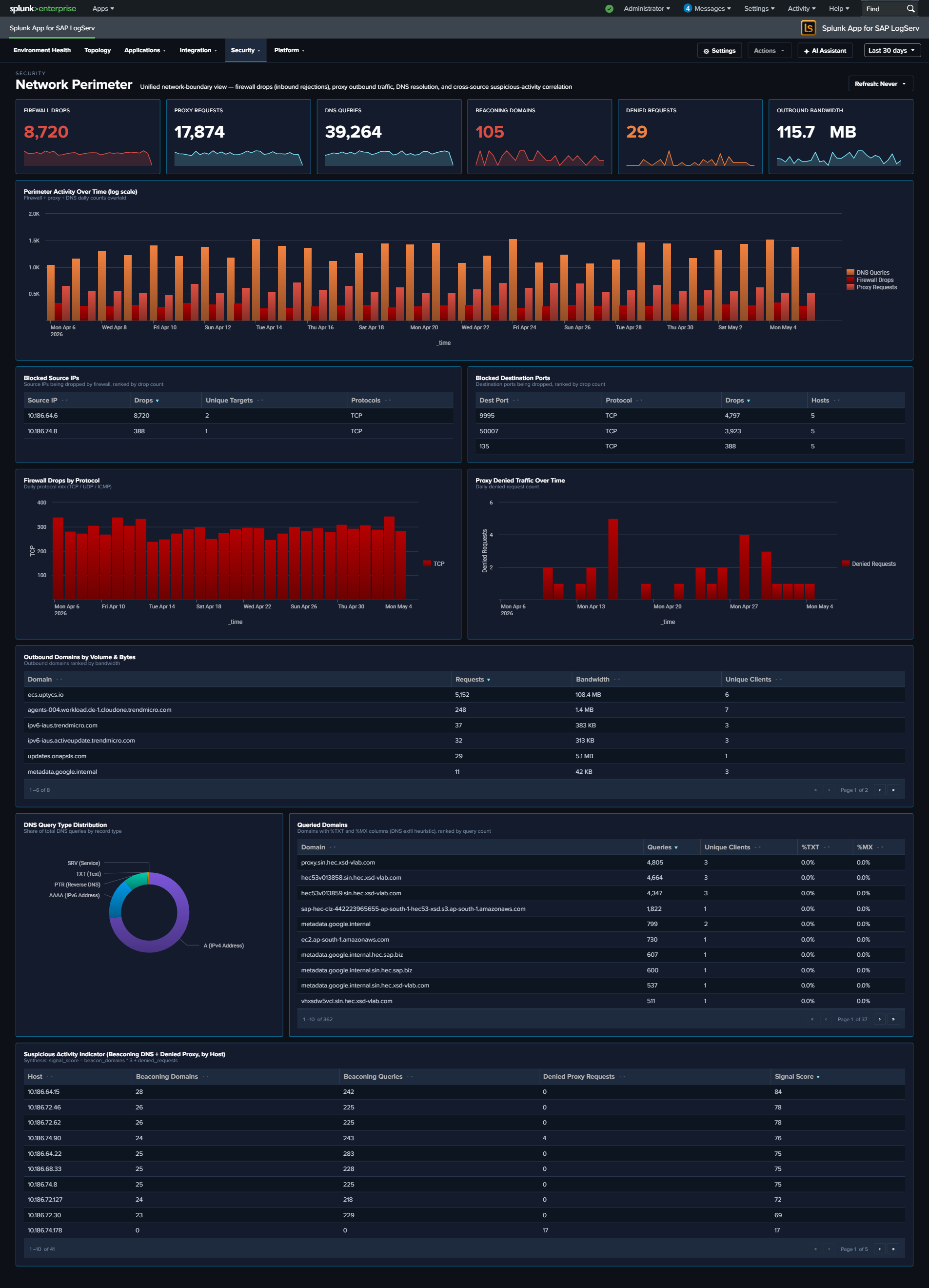

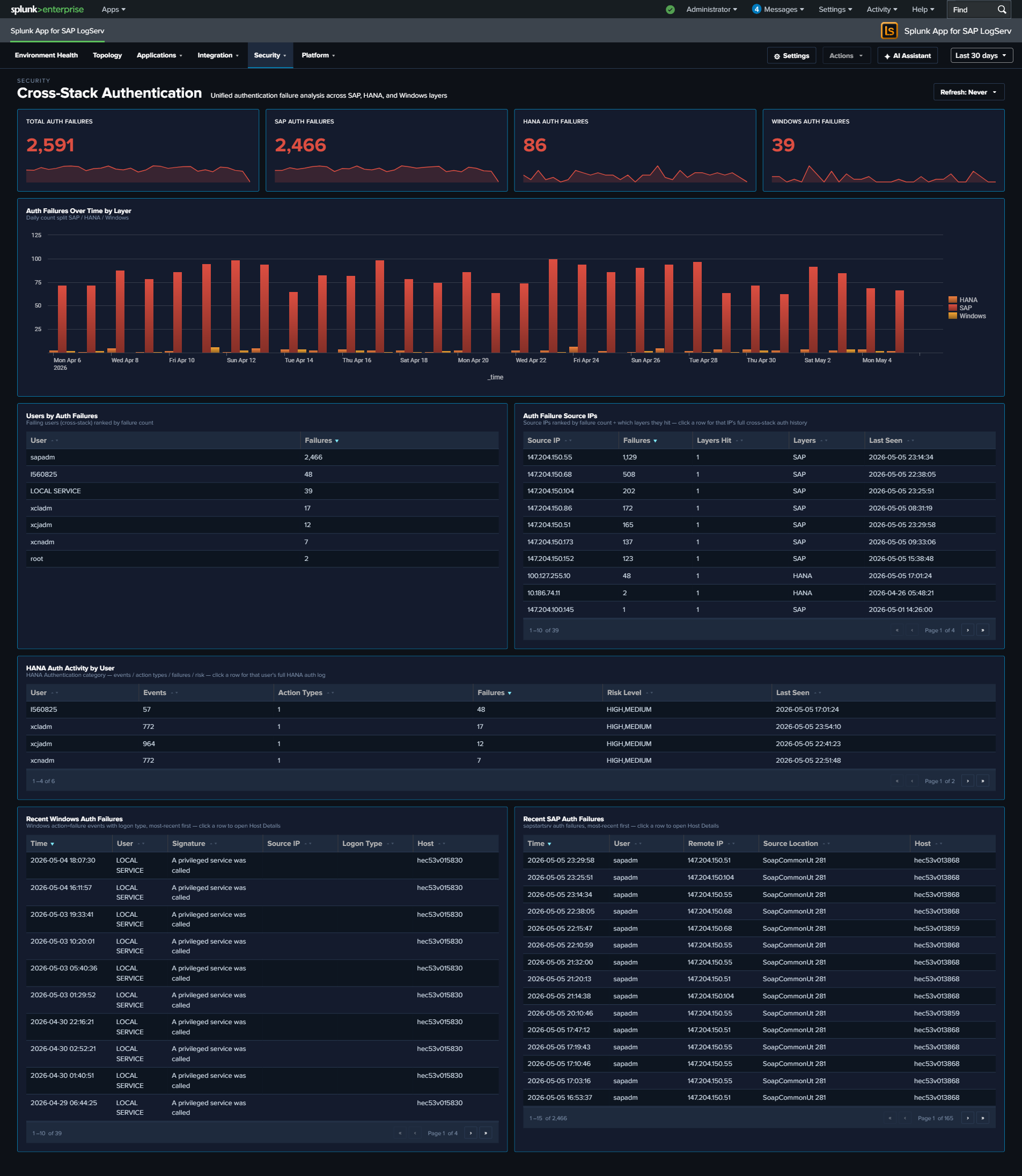

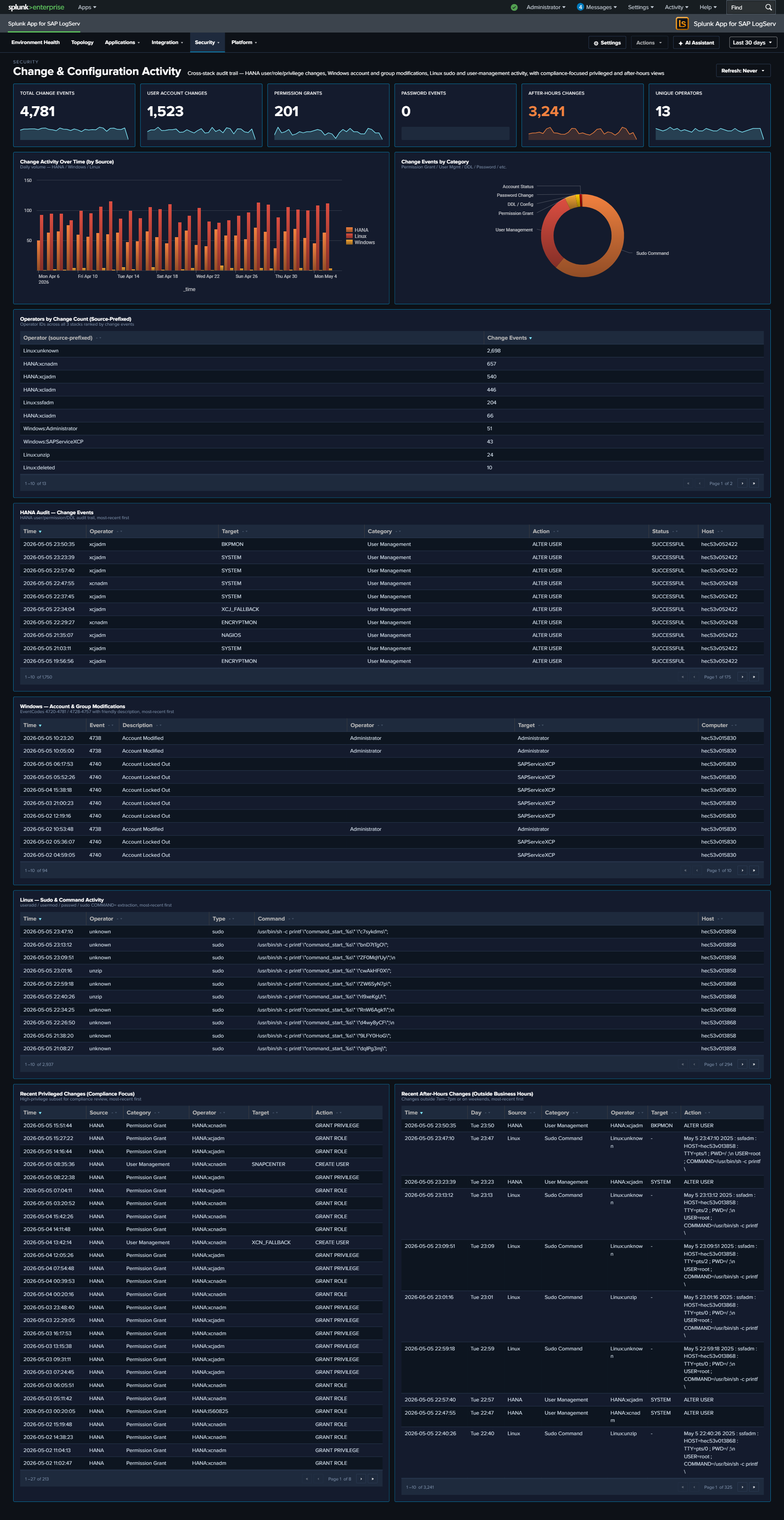

- Security (3 dashboards) — cross-source synthesis for security posture and compliance: Network Perimeter (new), Cross-Stack Authentication (new), Change & Configuration Activity (new)

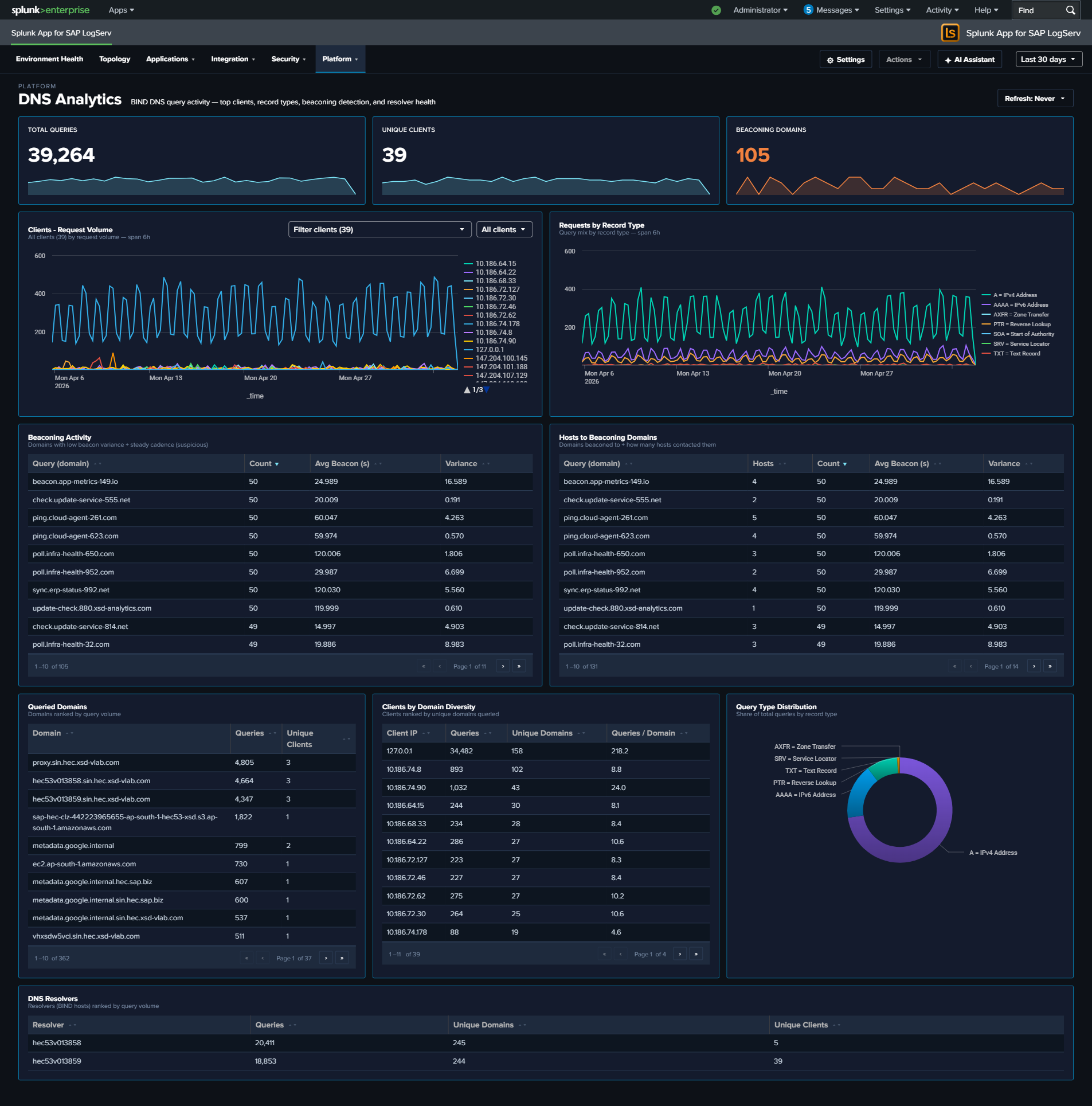

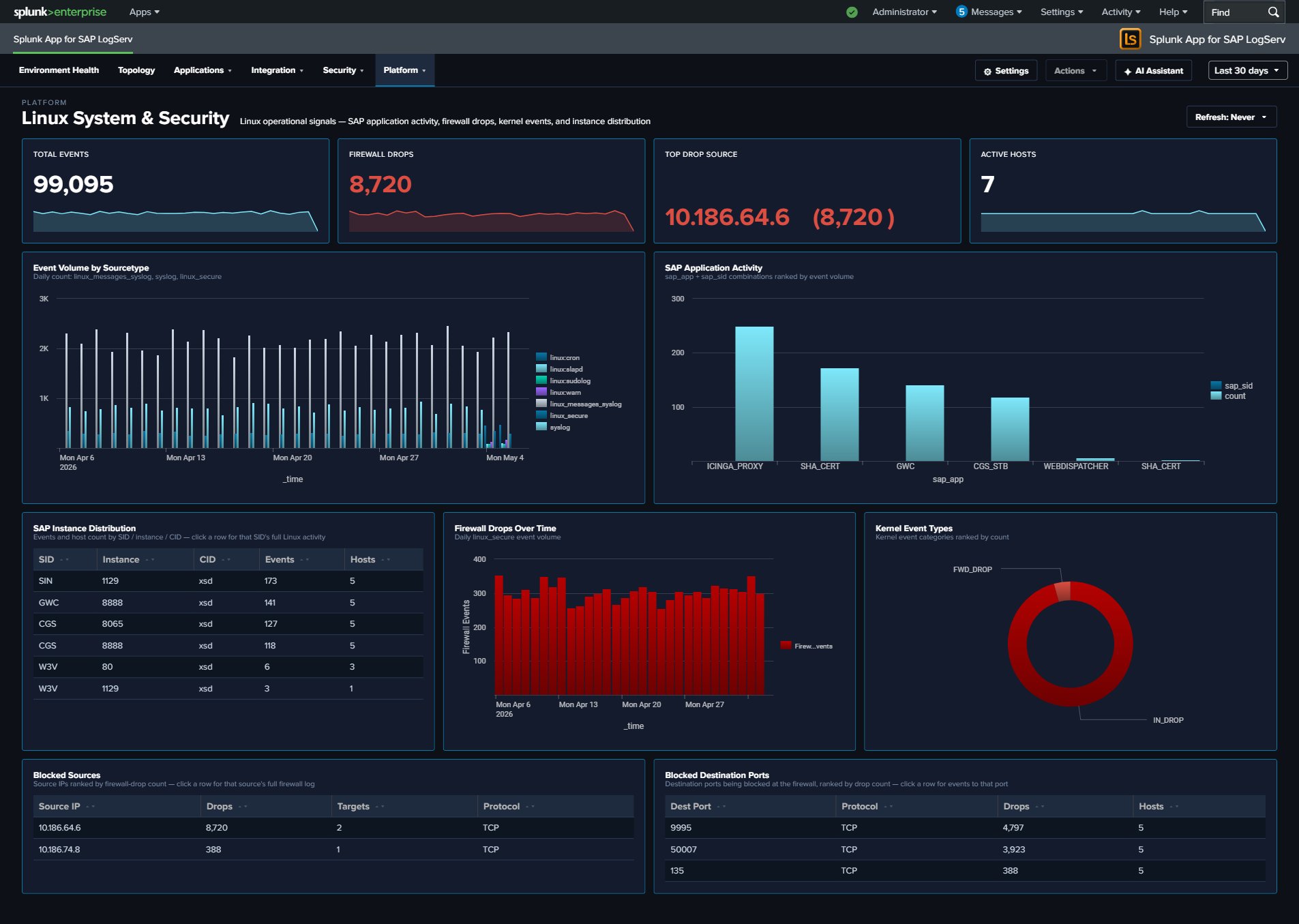

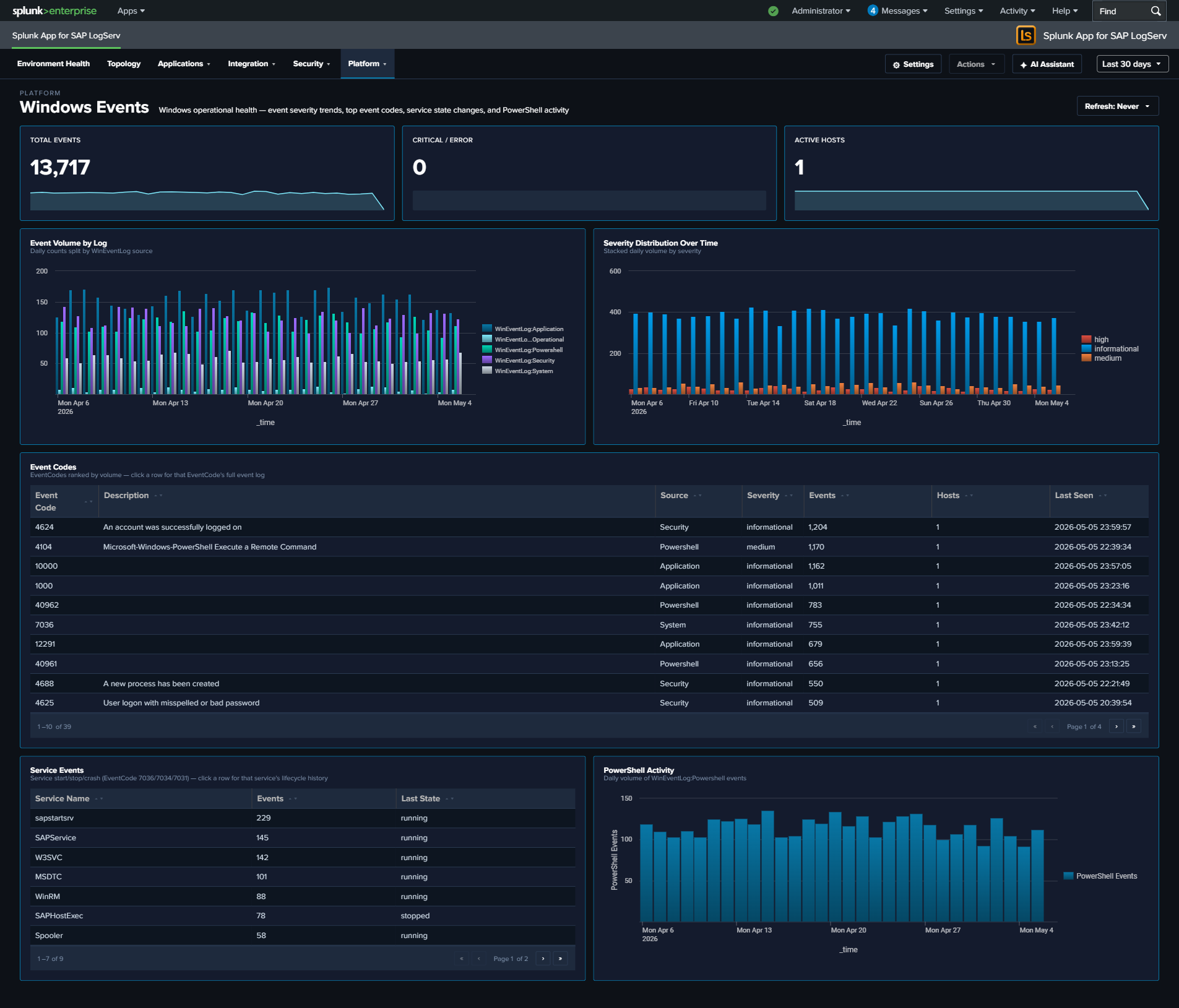

- Platform (6 dashboards) — infrastructure, ingest, and forensics: Data Pipeline Overview, DNS Analytics, Linux System & Security, Windows Events, Proxy Analytics, Host Details

- 6 new dashboards from Phase 2 (added after the original 14):

- Cross-Stack Authentication — unified authentication failure analysis across SAP, HANA, and Windows layers, with per-layer KPIs, source-IP aggregation, and per-layer recent-failure tables

- SAP Router — SAP Router connection activity, error analysis, and network boundary monitoring (separated out of SAP Services to give router its own investigation surface)

- Work Process Performance — SAP ABAP work process utilization with all 13 SAP-standard dev_w* trace category codes, dispatcher health, and function-level activity

- Web and API Performance — Web Dispatcher four-stage request timing (`dt1-dt4`), response-time percentiles, TLS version and cipher-suite distributions, and a cross-source panel overlaying HTTP error rate against Cloud Connector auth failure rate

- Network Perimeter — unified network-boundary view synthesizing firewall drops, proxy outbound traffic, and DNS resolution into one dashboard; includes firewall-drops-by-protocol, top outbound domains with byte volumes, and a cross-source Suspicious Activity Indicator table ranking internal hosts by combined beaconing-DNS + denied-proxy signal score

- Change & Configuration Activity — compliance-focused audit trail unifying HANA user/role/privilege/DDL changes, Windows account and group modifications (15 canonical security EventCodes), and Linux sudo + useradd/usermod/userdel/passwd activity; includes source-prefixed operator identities, a category taxonomy, and two compliance-focused “Recent” tables (Privileged Changes + After-Hours Changes)

- Environment Health dashboard — Cross-cutting operations view with 6 KPIs, 6 category-specific error trend charts (ABAP, HANA, Security, Web/Network, Cloud Connector, OS/Infra), critical events table, host error matrix, and performance panels. Every panel drills down to the relevant detailed dashboard. Now set as the default landing page.

- Tabbed Data Pipeline Overview — Two tabs: “Overview” (5 KPIs + 14-column Sourcetype Summary table + Host Latest Activity) and “Linked Graph” (full-width source-to-sourcetype link graph). The Sourcetype Summary table includes Status (Fresh/Stale/Very Stale), Trend sparkline, % of Total, Avg/Day, Volume, App Errors, Hosts, Sources, Events (1h), First Seen, Last Seen, and Lag columns.

- HANA Audit security panels — Three new panels surface the rich `sap:hana:audit` field set: Risk-Tiered Event Timeline (stacked column by `risk_level`), After-Hours / Weekend Admin Activity (table filtered to admin users outside business hours), and High-Risk Events (table of `is_critical=true` events with SQL Statement column).

- KPI sparklines — ~75 KPIs across all 20 dashboards display an inline daily-trend sparkline below the headline number, using a single-source `timechart + eventstats` pattern. Five flavors: count-based, distinct-count, rate, formatted-volume, and per-day re-detection. One acknowledged exception: the Linux “Top Drop Source” KPI is a string value (`<IP> (<count>)`) with no sparkline.

- Click-through drilldowns — Most KPIs, table rows, and chart points open a filtered Splunk search. Clickable table cells carry a cyan accent so the drilldown affordance is visible.

- KPI single values added to DNS Analytics (Total Queries, Unique Clients, Beaconing Domains), HANA Audit (Total Events, Failed Operations, Active Users), and Web Dispatcher (Total Requests, Error Rate, Avg Response Time). Access Denied Events KPI added to Cloud Connector; Top Drop Source KPI added to Linux.

- Enhanced DNS Analytics — Top Queried Domains, Top Clients by Domain Diversity (DGA detection), Query Type Distribution, and Top DNS Resolvers table.

- Enhanced HANA Audit — Top Users by Activity, Activity by Hour of Day (after-hours detection), and Client IP Analysis.

- Enhanced Web Dispatcher — Request Volume Over Time, Top URIs by Request Count, and Recent Errors (4xx/5xx).

- Host Details — 3-tab expansion — The Host Details dashboard is now organized into three tabs. Overview shows a 5-KPI row (Total Events, Data Volume, Active Sourcetypes, Errors/Criticals, Auth Failures), the Host Event Count by Sourcetype timeline, a cross-source Severity Timeline, Host Inventory (CPU/RAM/EC2/OS/region from osquery), Recent Authentication Events + Recent Errors & Criticals cross-source tables, Top Sources, Activity by Hour of Day, and Data Freshness per sourcetype. Role Activity contains seven role-specific panels (HANA Audit Activity, ABAP Work Process Mix, Web Dispatcher Traffic by Status, SAP Router Peers, Windows Event Codes, Sudo Commands, DNS Top Queries) that auto-hide via `hideWhenNoData` when the selected host has no data for that component. Sourcetype Mapping houses the full-width Sankey chart that was previously inline.

- Cross-dashboard navigation — Every dashboard includes a Navigate to Dashboard dropdown with Go button that preserves the selected time range when switching between dashboards.

- In-dashboard documentation link (“More Info” button) — A cyan More Info button in the top-right of every dashboard’s toolbar row opens the corresponding online-documentation section in a new browser tab. The link targets the dashboard’s section within the appropriate category page (`.../dashboards/applications/#<dashboard-slug>`, etc.) so users can jump from a live dashboard to its narrative documentation in one click. For multi-tab dashboards (Data Pipeline Overview, Host Details) the button appears on every tab.

Enhancements (per-dashboard restructures)¶

- SAP Services — Removed the 4 router-related panels (now on the SAP Router dashboard); featured SSL Authentication Failure Sources full-width; replaced Event Volume by Service line chart with a stacked column chart showing Normal vs Errors per service.

- Windows Events — Removed Security Event Actions chart and Top Users table (now on Cross-Stack Authentication); featured Top Event Codes full-width with 7 enriched columns (Event Code, Description, Source log, Severity, Events, Hosts, Last Seen).

- Proxy Analytics — Replaced single-slice donuts (Content Types → Cache Action Distribution column; HTTP Methods → Top Clients by Domain Diversity bar). Added new bottom row: Top URL Domains by Bytes Out + Bandwidth Over Time by Domain.

- DNS Analytics — Replaced the uninterpretable Volume & Packet Size scatter plot with a Top DNS Resolvers table; restructured row 2 to 4 panels including Query Type Distribution donut moved up to pair with the trend chart.

- ABAP Operations — Work Process Categories donut widened to 836 px with bottom legend showing all 13 friendly category names (uses the shared `wp_category_name` props.conf EVAL).

- Cloud Connector — Renamed “Error Rate” → “HTTP Error Rate” to clarify scope; added Access Denied Events KPI (4th KPI in row).

- Linux System & Security — Added Top Drop Source KPI surfacing the highest single-source firewall drop count in `<IP> (<count>)` format (4th KPI in row).

Fixed issues¶

- DNS Analytics beaconing panels now use correct `message_type="Query"` case (was `"QUERY"`).

- Web Dispatcher data source had hardcoded Unix timestamps; replaced with `$global_time.earliest$` / `$global_time.latest$` tokens.

- Work Process Categories labels — The Work Process Categories panel on the ABAP Operations dashboard now displays meaningful names for all 13 standard SAP dev_w* trace component codes (A = ABAP Processor, B = Database Interface, C = Communication, D = Dispatcher, M = Memory Management, N = Network (NI), O = Enqueue / Lock, Q = RFC Queue, R = Roll Area, S = SQL / Statistics, T = Task Handler, X = RFC / CPIC, Y = Dynpro / Screen). Previously only A/B/C/M were mapped and the rest appeared as single-letter codes. The same `wp_category_name` mapping is now also used on the Work Process Performance dashboard.

- KPI panel alignment — KPI single-value widgets on all three-KPI dashboards are evenly spaced with the rightmost KPI outline aligned to the right edge of panels below.

- Right-edge symmetry — All rows on width=1920 dashboards now cap at R=1910; width=1600 dashboards cap at R=1590. Symmetric 10 px padding on both sides.

- HANA Trace component noise filter — Top Components, Component by Severity, and Source File Hotspots panels now filter out parsing artifacts (“INFO”, “of”, “service:”) that previously diluted real component data.

- Ingest Errors KPI on Data Pipeline Overview — Refined to exclude ExecProcessor noise (which wraps all scheduled-script output as ERROR-level regardless of the script’s actual log level). Filters to real Python ERRORs only.

- SSL Authentication Failure Sources panel (SAP Services) — Replaced the previous Sapstartsrv SSL/TLS Events panel which showed empty columns due to mismatched field extractions. Now aggregates by source IP using fields that actually exist in the data (auth_user, remote_ip, remote_port) and provides row drilldown to the full event set per IP.

- Empty-safe KPI pattern — All count-based and dc-based KPIs now display `0` instead of `###` when the underlying search returns no events (uses a synthetic-row `appendpipe` wrap).

Restyled (visual conventions)¶

- Dashboard “card” style — All 20 dashboards use a unified visual treatment: `#0d1117` page background, `#141b2d` panel fill, `#0877a6` panel outline, rounded corners, 5 px inset between rect frame and inner viz.

- KPI typography standardized — `majorFontSize: 36`, explicit `labelColor: #7b8ea8`, `labelFontSize: 13`, semantic `majorColor` (`#dc4e41` red for errors, white for neutral counts, orange for warnings, teal for positive signals). The Linux Top Drop Source KPI uses `majorFontSize: 28` as an acknowledged exception for its long-text string display.

- Standard red consolidated — All red color variants (`#e86c5d`, `#af575a`, `#ff3b30`, `#ff2d55`) normalized to single hex `#dc4e41`.

- Tables — Hardcoded header background (`#1e2a3d`), zebra-stripe alternating rows (`#0d1520` / transparent), fixed header. Cyan accent on clickable cells indicates drilldown affordance.

- 12 px panel gaps — Exact horizontal and vertical spacing between every panel border across all dashboards.

- Dashboard descriptions — Every dashboard now displays a 1-line description below its title.

- “Go >” navigation button — Standardized: 120×25 px at top-left of every dashboard with 10 px padding above and below; majorFontSize 16.

- “More Info” documentation button — Standardized: 140×25 px at top-right of every dashboard, aligned with the right edge of the canvas (10 px padding from the right; x = canvas_width − 150). Same cyan fill `#0877a6`, white text, majorFontSize 16 as the Go button. Opens the dashboard’s online-documentation section in a new browser tab via `drilldown.customUrl` with `newTab: true`.
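
The button placement follows directly from the numbers above: a 140 px wide button with 10 px of right padding starts at `canvas_width − 150`. The short sketch below works that arithmetic through for the two canvas widths mentioned in these notes. The function names are made up for the illustration.

```python
BUTTON_WIDTH = 140   # px, from the "More Info" button spec above
RIGHT_PADDING = 10   # px, symmetric page padding


def more_info_x(canvas_width):
    """Left x-coordinate of the More Info button."""
    return canvas_width - BUTTON_WIDTH - RIGHT_PADDING


def right_edge(canvas_width):
    """Right edge of the button; should match the R cap used by panel rows."""
    return more_info_x(canvas_width) + BUTTON_WIDTH


for width in (1920, 1600):
    print(width, more_info_x(width), right_edge(width))
```

The computed right edges land on the same R=1910 and R=1590 caps listed under the right-edge symmetry fix, so the button lines up with the panels below it.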

Known issues¶

- The dashboards in the LogServ App use Dashboard Studio v2 format and require Splunk 9.4.3 or later.

- Several Host Details panels (Host Inventory, Recent Authentication Events, Recent Errors & Criticals, and all seven Role Activity panels) use `hideWhenNoData` and will disappear for hosts that lack the underlying sourcetype data. For example, a Windows host without osquery data will not show the Host Inventory panel; an ABAP-only host will not show the HANA Audit Activity panel on the Role Activity tab. This is the dashboard adapting to the selected host’s role — not a bug — but empty tabs can feel sparse on hosts that only forward a single sourcetype.

Third-party software attributions¶

Version 0.0.4.1-beta¶

Compatibility¶

| Splunk platform versions | 9.4.3 and later |

| CIM | 5.1.1 and later |

| Supported OS for data collection | Platform independent |

| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |

New features¶

- 12 new SAP application sourcetypes — 9 SAP ABAP types (`sap:abap:audit`, `sap:abap:dispatcher`, `sap:abap:enqueueserver`, `sap:abap:event`, `sap:abap:gateway`, `sap:abap:icm`, `sap:abap:messageserver`, `sap:abap:sapstartsrv`, `sap:abap:workprocess`), 1 HANA trace type (`sap:hana:tracelogs`), and 2 SAP Cloud Connector types (`sap:scc:audit`, `sap:scc:http_access`).

- Compound lookahead routing — New routing pattern for log types where the same `clz_subdir` value appears under multiple `clz_dir` paths (e.g., `audit` exists under both `abap/` and `scc/`). Uses regex lookahead to match both fields simultaneously.

- Search-time SID/instance extraction — ABAP and HANA sourcetypes extract `sap_sid` and `sap_instance` from the `source` metadata field using `EXTRACT ... in source` directives in the LogServ App.

- ~128 total search-time directives across all SAP-specific sourcetypes.
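
The compound lookahead idea can be illustrated with a short sketch. It is not the TA's actual transform: the JSON fragments, the exact field serialization, and the helper names are assumptions. It only shows how two lookaheads anchored at the same position require both `clz_dir` and `clz_subdir` to match, regardless of field order.

```python
import re

# Hypothetical event fragments; the real event layout may differ.
abap_event = '{"clz_dir":"abap","clz_subdir":"audit","_raw":"sample"}'
scc_event = '{"clz_subdir":"audit","clz_dir":"scc","_raw":"sample"}'


def compound(clz_dir, clz_subdir):
    """Build a pattern that matches only when BOTH fields are present."""
    return re.compile(
        r'^(?=.*"clz_dir":"%s")(?=.*"clz_subdir":"%s")'
        % (re.escape(clz_dir), re.escape(clz_subdir))
    )


ROUTES = [
    (compound("abap", "audit"), "sap:abap:audit"),
    (compound("scc", "audit"), "sap:scc:audit"),
]


def route(raw):
    """Return the first sourcetype whose compound pattern matches, else None."""
    for pattern, sourcetype in ROUTES:
        if pattern.search(raw):
            return sourcetype
    return None


print(route(abap_event))
print(route(scc_event))
```

A pattern keyed on `clz_subdir` alone would send both events to the same sourcetype, which is the ambiguity the compound match resolves.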

Fixed issues¶

Known issues¶

- The dashboards in the LogServ App use Dashboard Studio v2 format and require Splunk 9.4.3 or later.

Third-party software attributions¶

Version 0.0.3-beta¶

Compatibility¶

| Splunk platform versions | 9.4.3 and later |

| CIM | 5.1.1 and later |

| Supported OS for data collection | Platform independent |

| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |

New features¶

- Two-package architecture — The solution is now split into two packages: the Data TA (`splunk_ta_sap_logserv`) for data collection and index-time processing, and the LogServ App (`splunk_app_sap_logserv`) for dashboards and search-time field extractions. See Architecture for details.

- Built-in index-time filtering — Configure include/exclude patterns and time-based filters directly through the Splunk Web UI. Filtered events never consume Splunk license. See Configuring Filters.

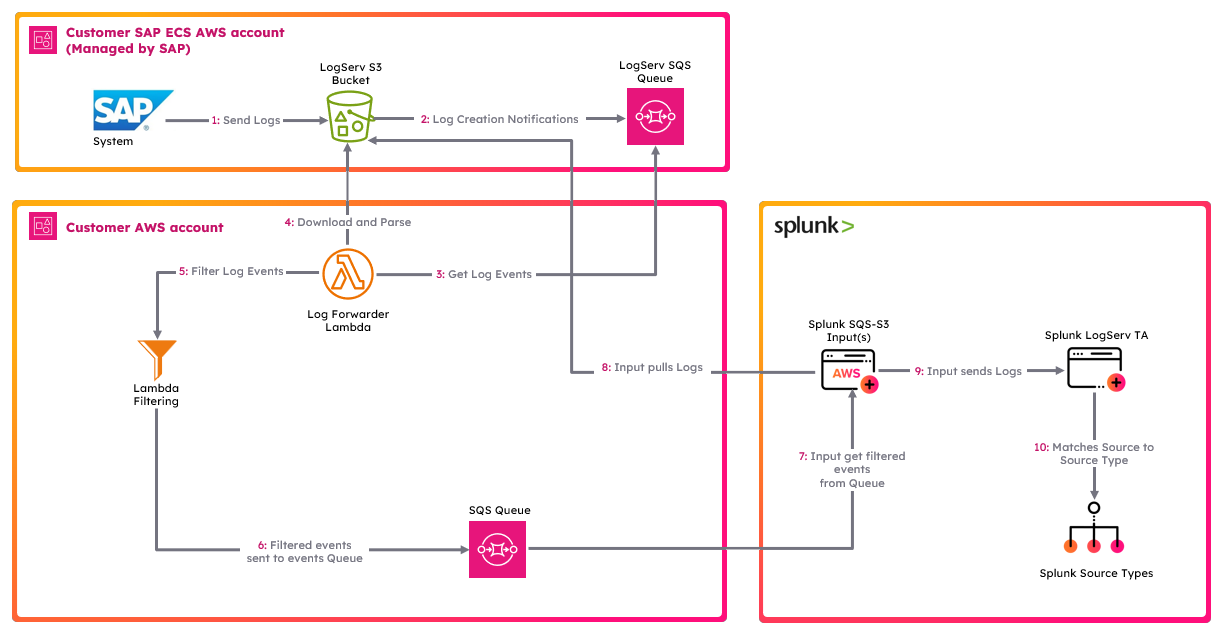

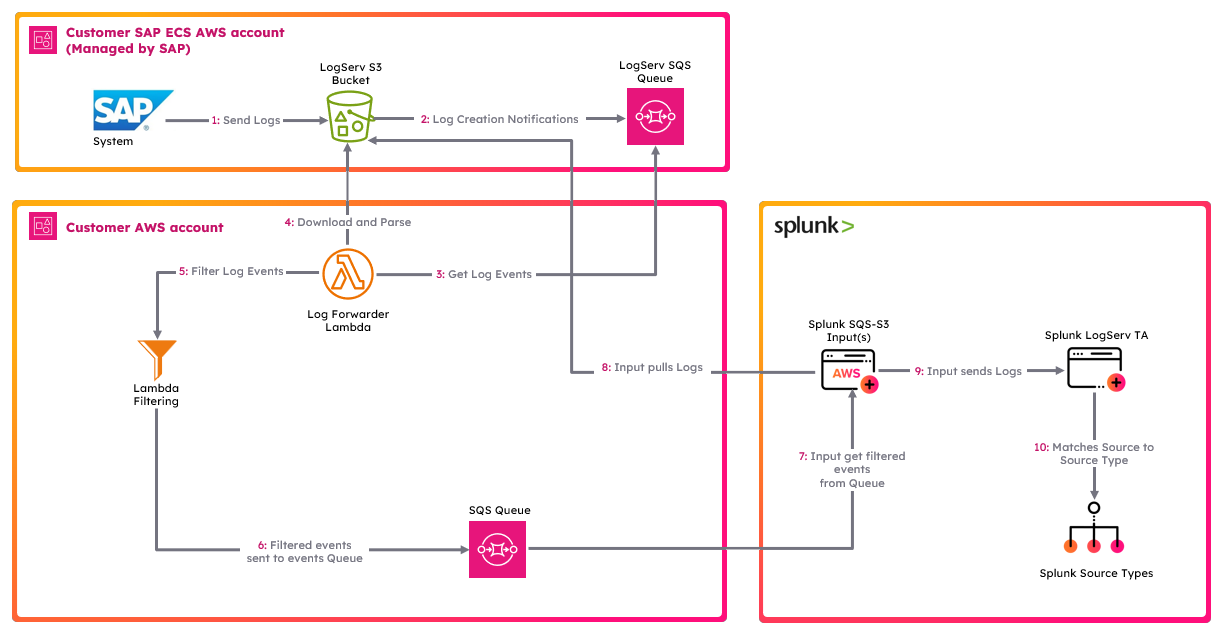

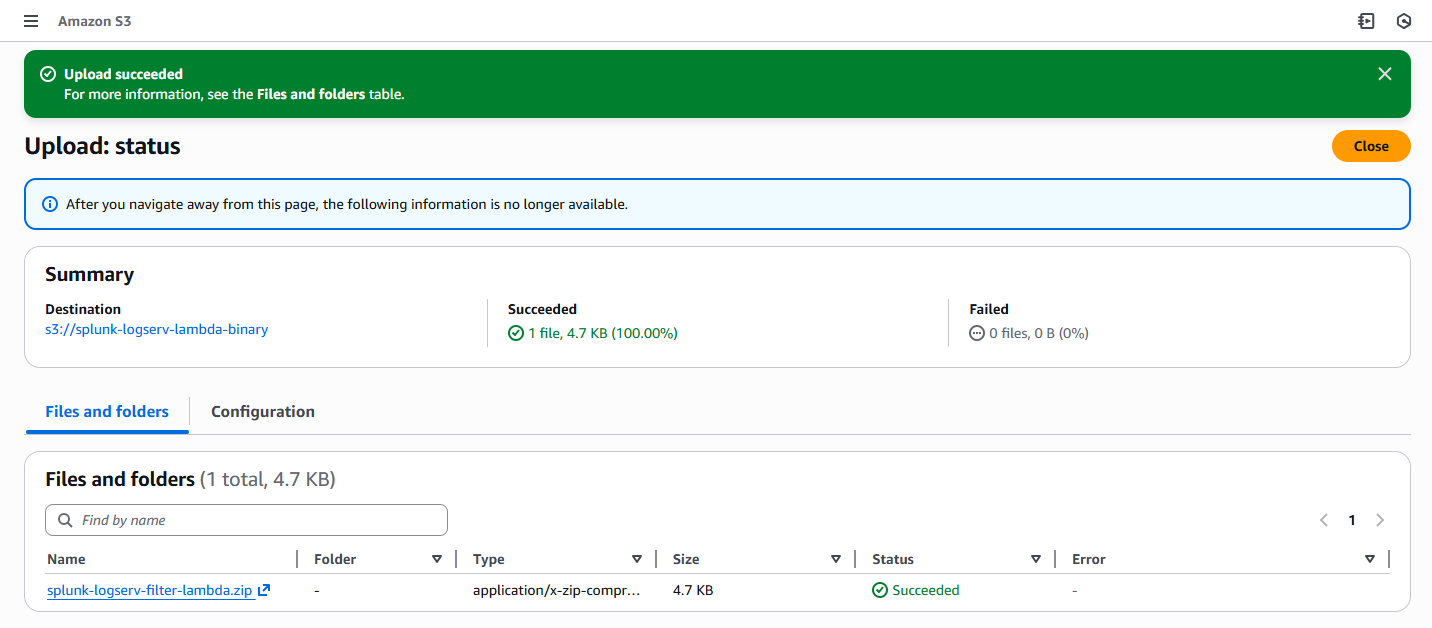

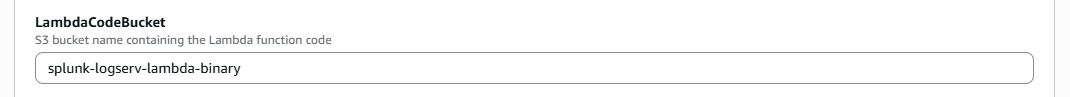

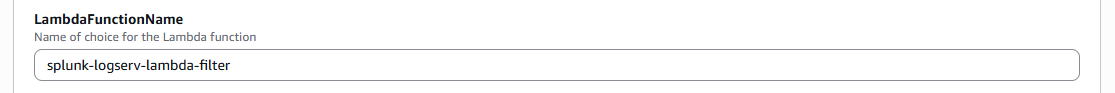

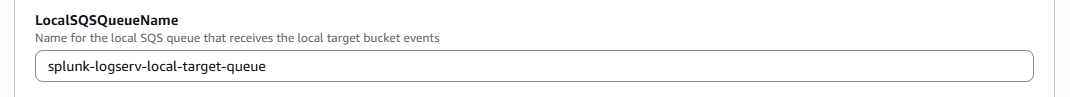

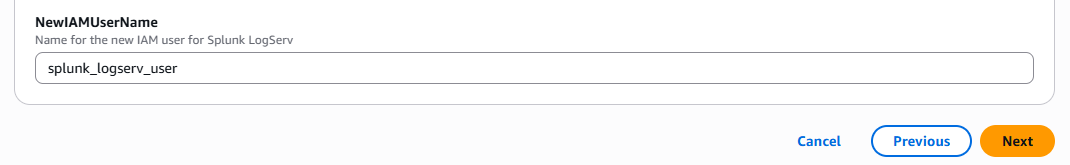

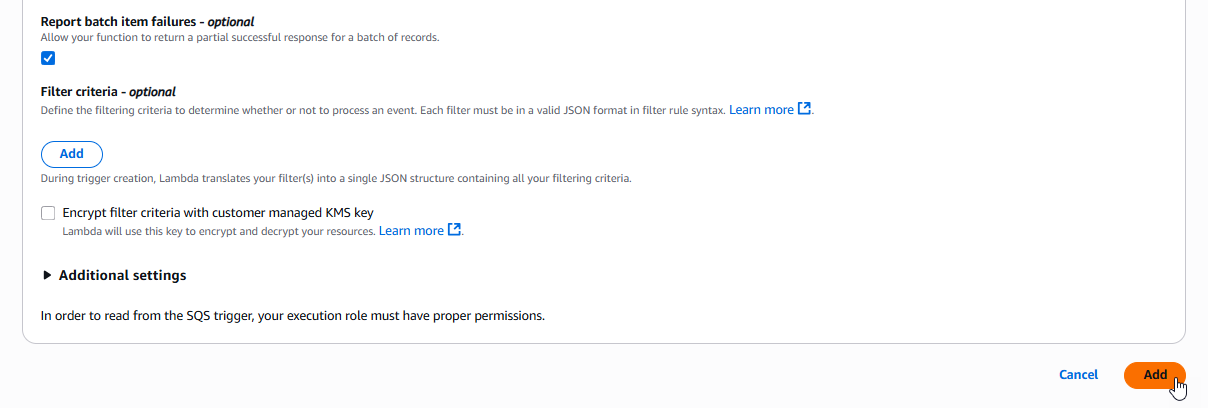

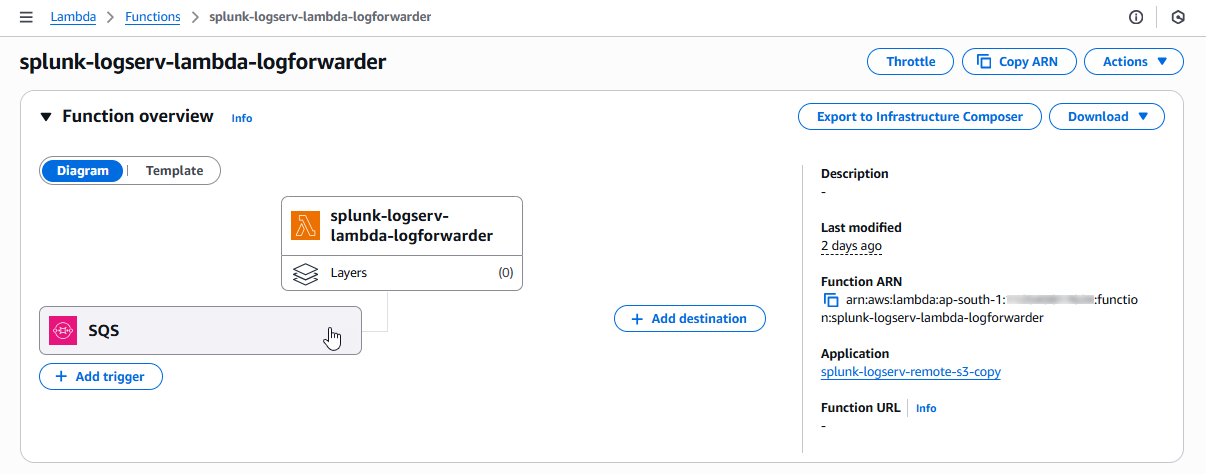

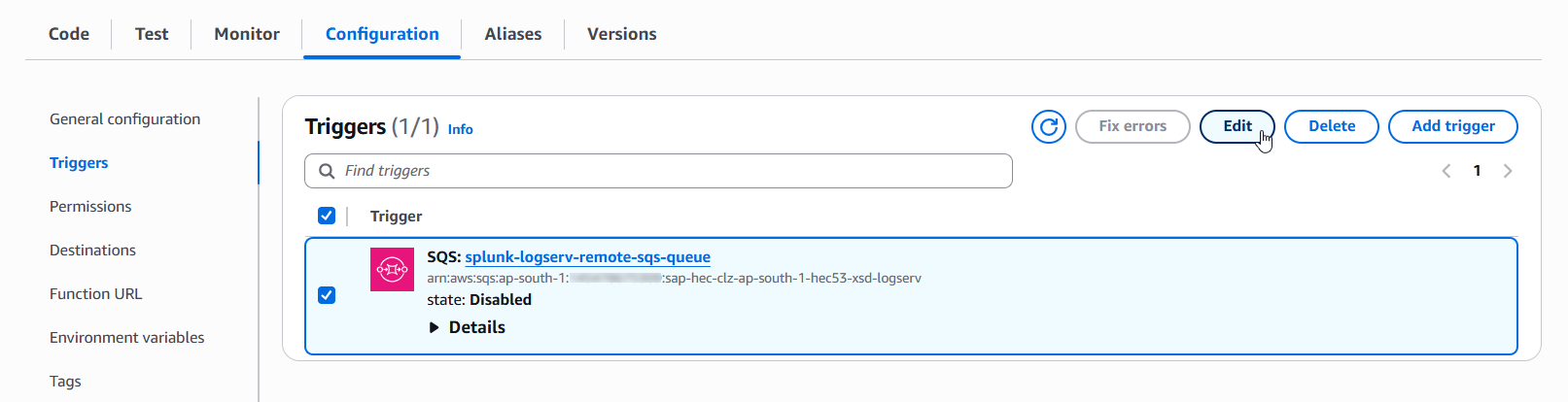

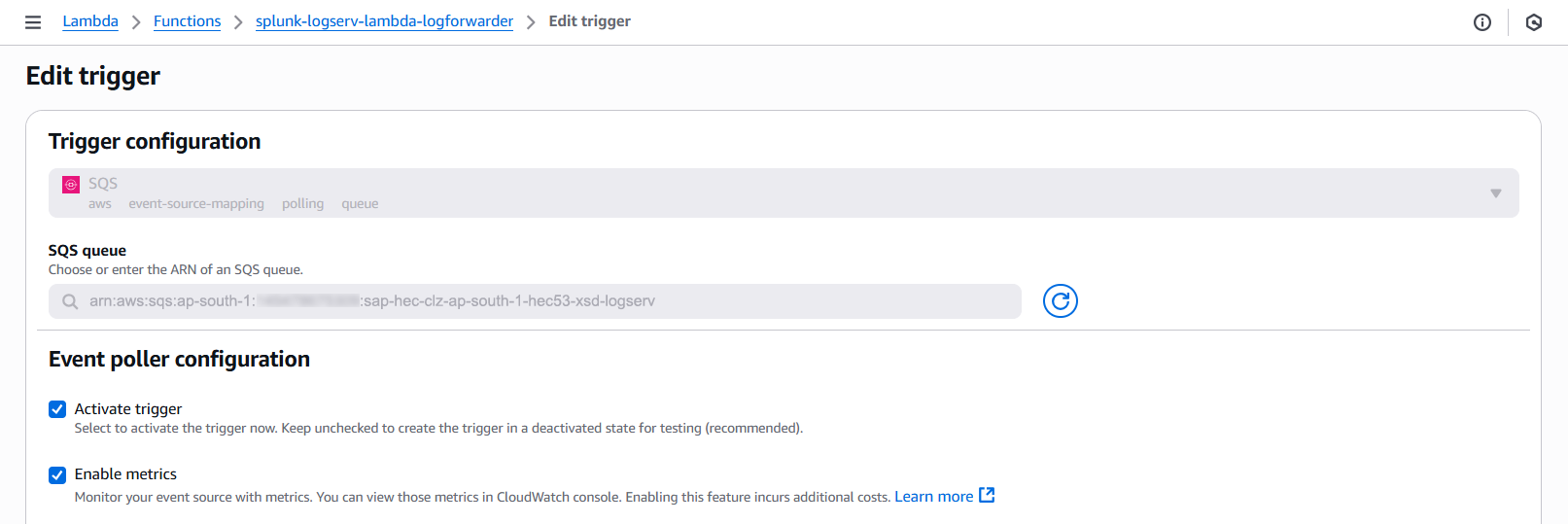

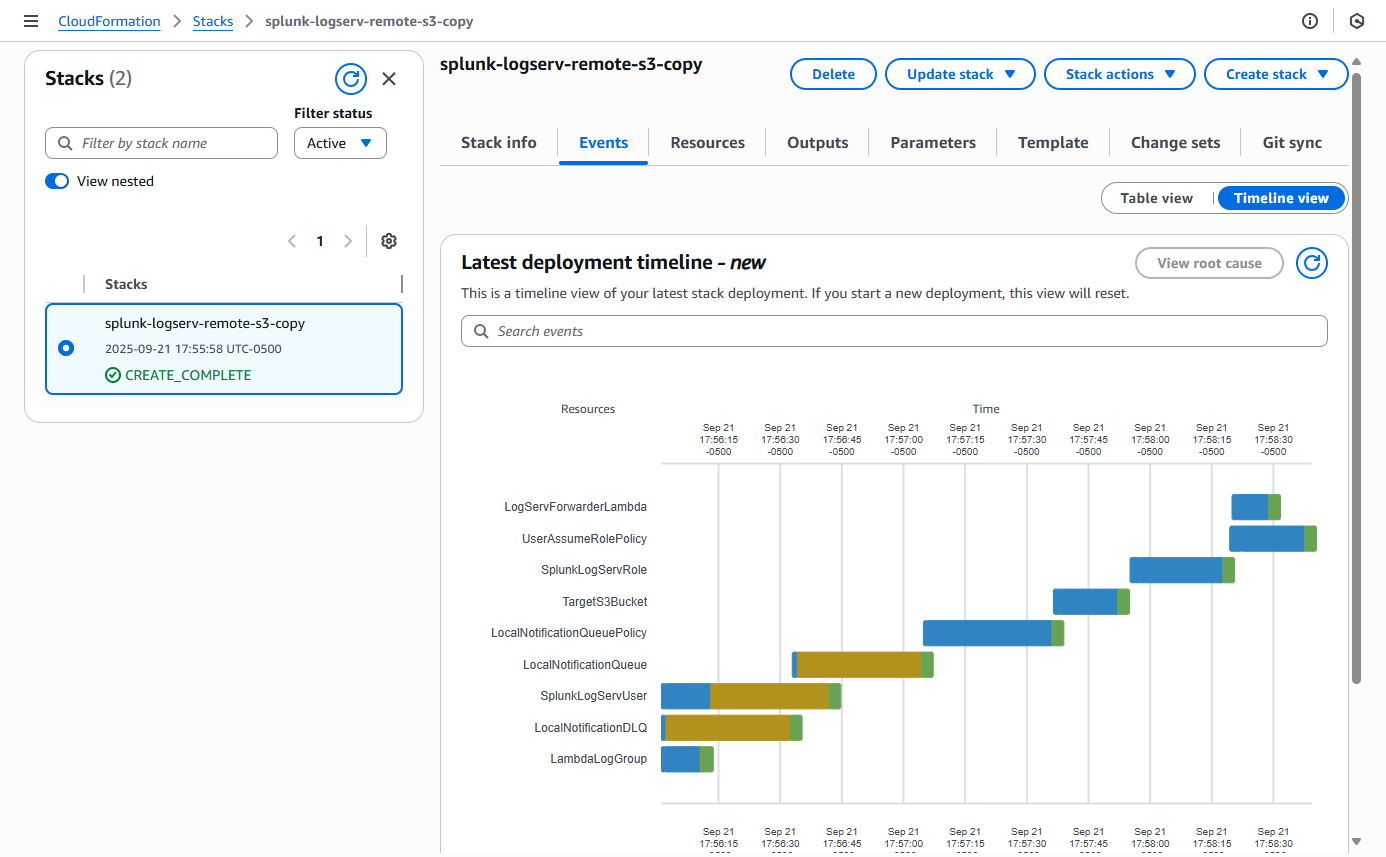

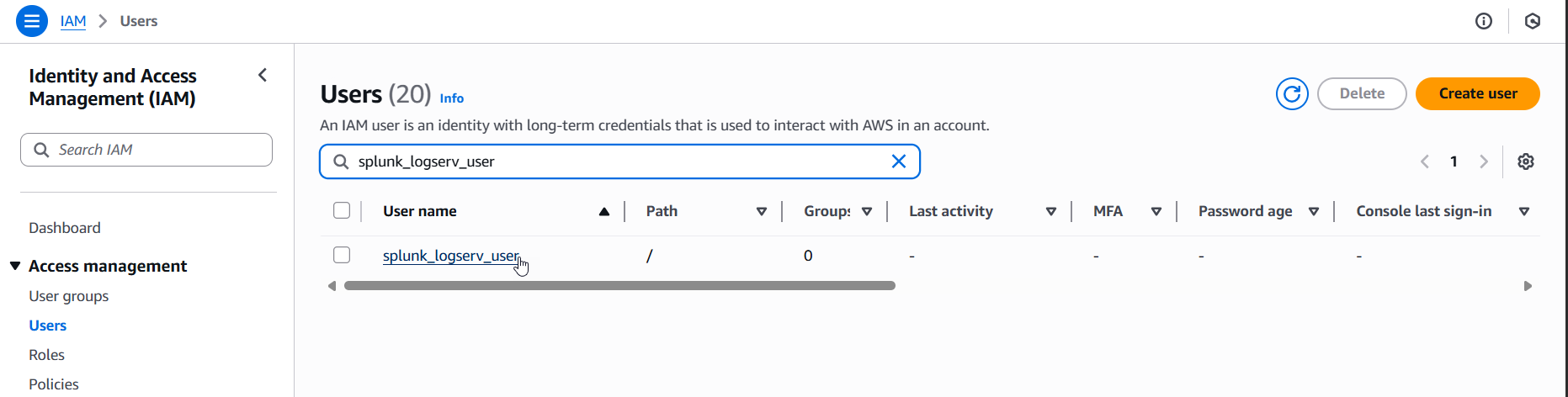

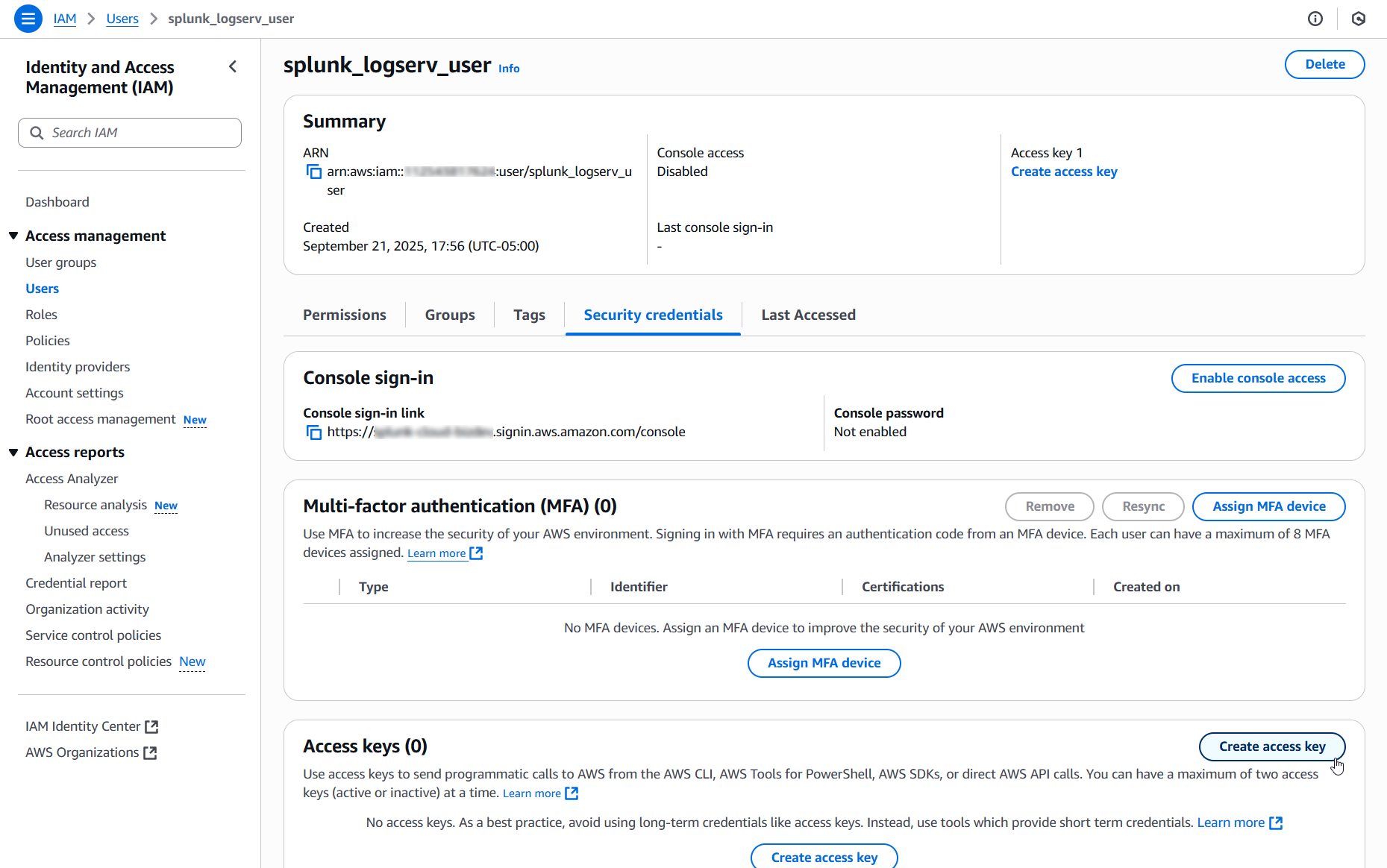

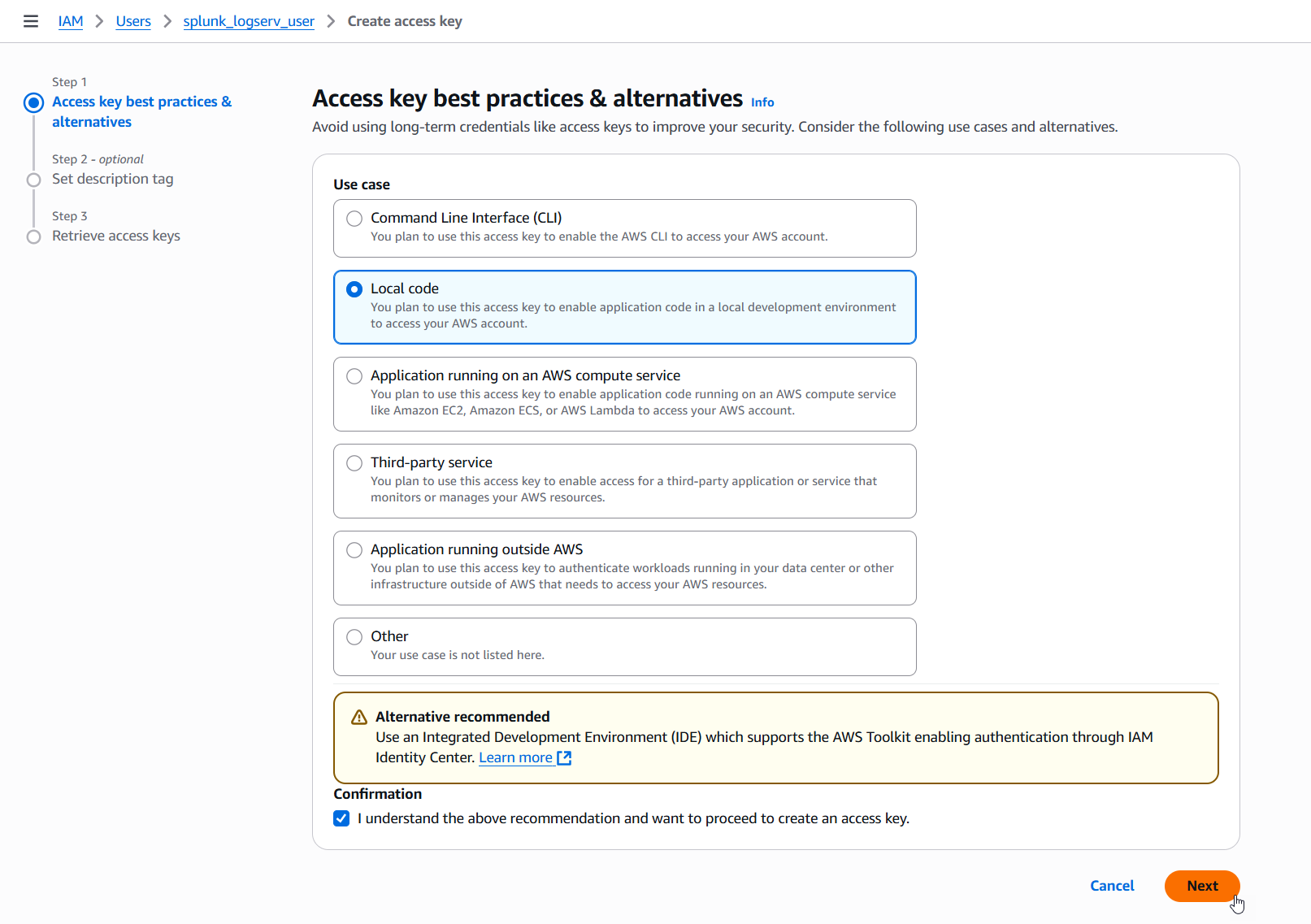

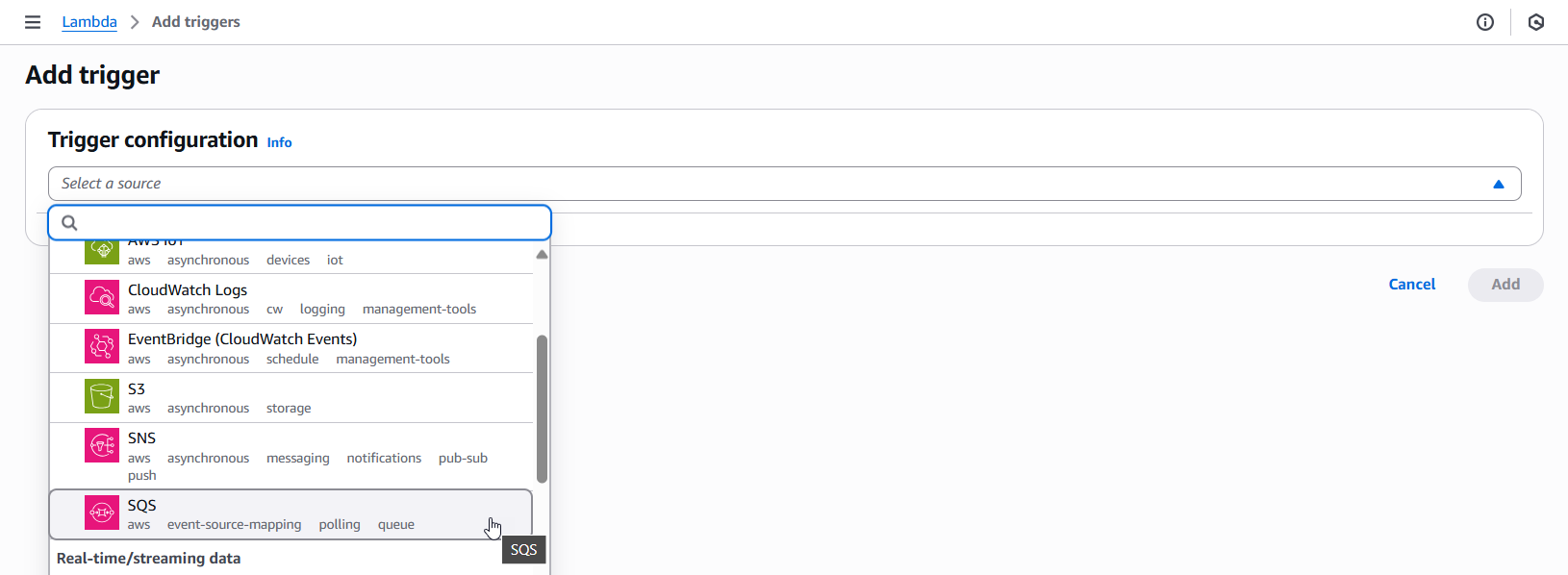

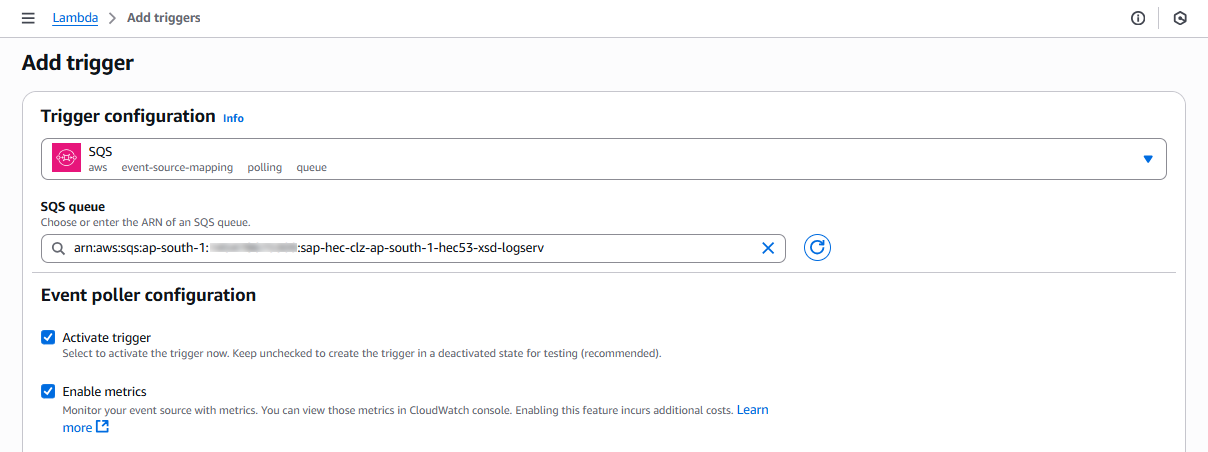

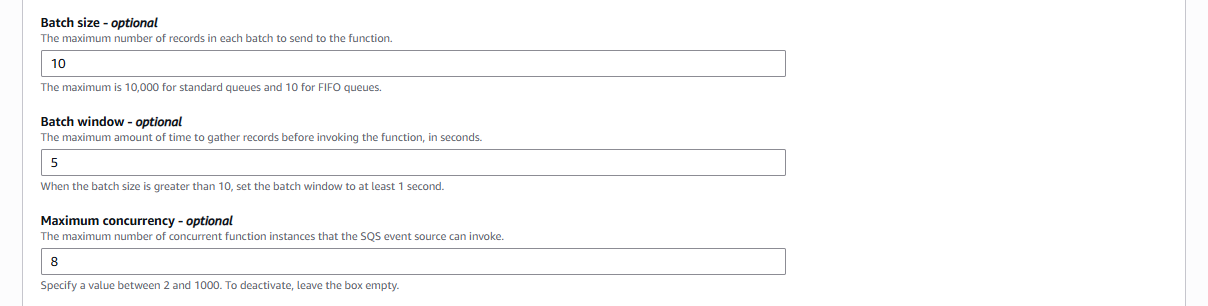

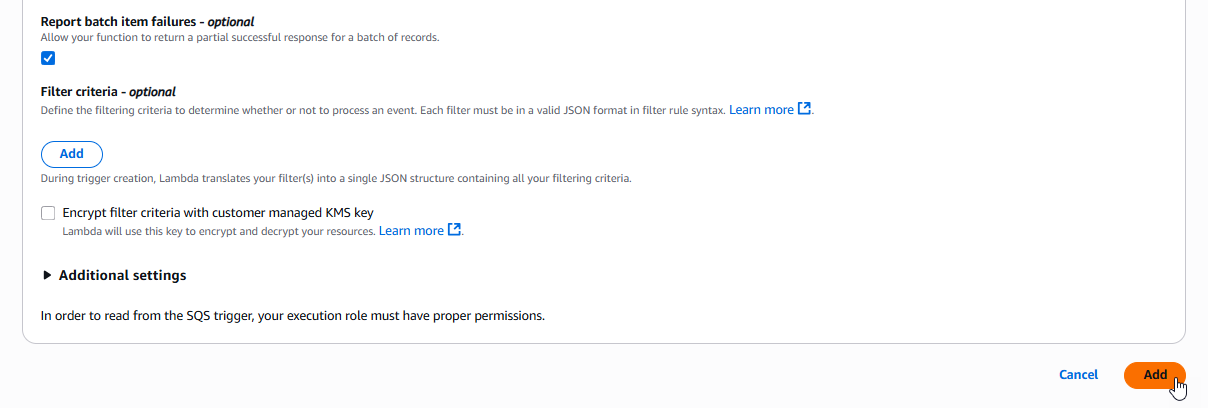

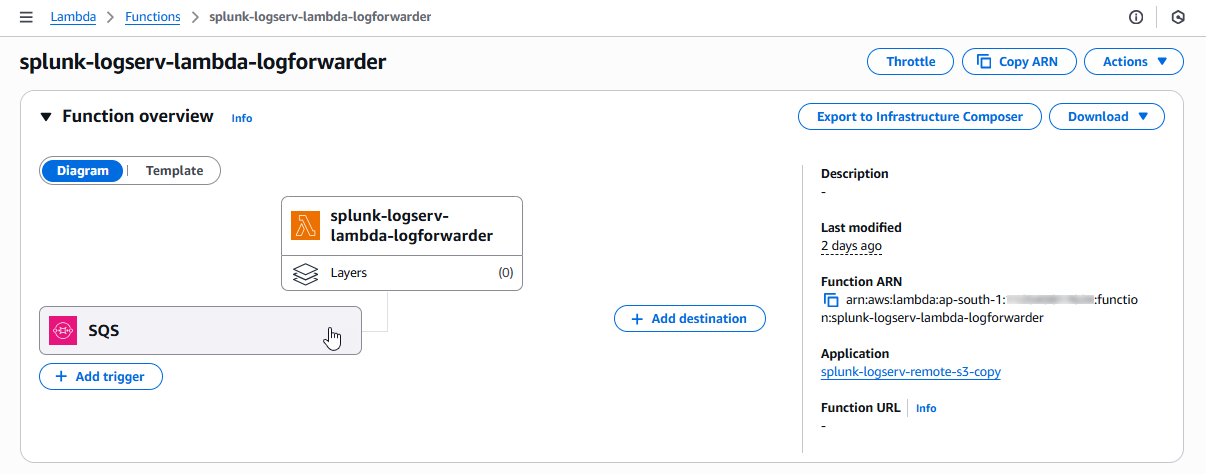

- AWS Lambda-based filtering — New deployment option that filters S3 event notifications in AWS before they reach Splunk, reducing S3 GET request costs and SQS message volume. Available via the AWS Remote S3 Filter Setup Walkthrough or the Connect to Filter Migration. Can be used alongside or independently of the native TA filtering.

- Deployment Server automation — When installed on a Deployment Server, the TA automatically stages filter configurations for distribution to Heavy Forwarders and provides a one-click “Deploy to Forwarders” button.

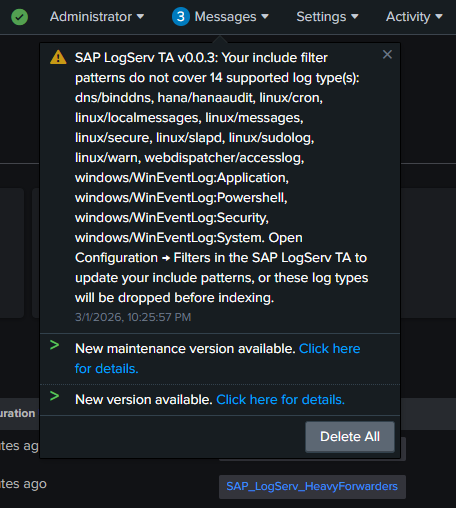

- Upgrade notifications — A system message banner alerts administrators when a TA upgrade adds support for new log types that are not covered by existing include filter patterns.

- Daily time filter refresh — A built-in scripted input automatically refreshes the time-based filter cutoff once per day to maintain accuracy of the rolling time window.

- SAP HANA Audit field extractions — 14 EXTRACT, 11 EVAL, and 16 FIELDALIAS directives for the `sap:hana:audit` sourcetype.

- SAP Web Dispatcher field extractions — 18 EXTRACT, 3 EVAL, and 6 FIELDALIAS directives for the `sap:webdispatcher:access` sourcetype.

Fixed issues¶

- Dashboards moved from Data TA to dedicated LogServ App package for proper distributed deployment support.

Known issues¶

- The dashboards in the LogServ App use Dashboard Studio v2 format and require Splunk 9.4.3 or later.

Third-party software attributions¶

Version 0.0.2-beta¶

Compatibility¶

| Splunk platform versions | 9.4.x, 10.0.x |

| CIM | 5.1.1 and later |

| Supported OS for data collection | Platform independent |

| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |

New features¶

Fixed issues¶

- Drilldown on overview dashboard to host details dashboard had the wrong application name and displayed an error when clicking on the host name.

- Renamed the ‘logserv_web_dispatcher_access.xml’ dashboard to ‘logserv_web_dispatcher.xml’.

- Renamed the ‘sap_rise_host_details.xml’ dashboard to ‘logserv_host_details.xml’.

- Updated the ‘~/ui/nav/default.xml’ with updated dashboard names.

Known issues¶

- The dashboards included in this TA are Dashboard Studio dashboards that may not work with Splunk versions prior to 9.4.

Third-party software attributions¶

Version 0.0.1-beta¶

Compatibility¶

| Splunk platform versions | 9.4.x, 10.0.x |

| CIM | 5.1.1 and later |

| Supported OS for data collection | Platform independent |

| Vendor products | SAP LogServ for SAP ECS in Amazon Web Services (AWS) |

New features¶

Fixed issues¶

Known issues¶

- Drilldown on overview dashboard to host details dashboard has the wrong application name and displays an error when clicking on the host name.

- The dashboards included in this TA are Dashboard Studio dashboards that may not work with Splunk versions prior to 9.4.

Third-party software attributions¶

Third-Party Software Licenses¶

Splunk for SAP LogServ bundles open-source software from the npm ecosystem (the React-based LogServ UI App is a webpack-built single-page application). Each component carries its own license, and per those licenses we preserve attribution and bundle the full license text alongside the App.

Where to find the full notices¶

The complete list of bundled third-party packages — names, versions, declared licenses, and full license text — is delivered in two places:

- Inside the App tarball — `splunk_app_sap_logserv/THIRD-PARTY-NOTICES.md` (the file is at the root of the installed app directory after extraction)

- At the source-tree root — the same file lives at the root of the GitHub release source tarball

The file is generated deterministically from the resolved node_modules/ tree at build time, so its content matches exactly what shipped with the App version you have installed.

License-distribution summary (v0.0.5.0)¶

The v0.0.5.0 build includes 1235 unique top-level packages under node_modules/. The license breakdown:

| License (SPDX as declared) | Count | Inclusion obligation |
|---|---|---|
| MIT | 1012 | Copyright + license text (preserved in THIRD-PARTY-NOTICES.md) |
| ISC | 64 | Copyright + license text |
| Apache-2.0 | 57 | License text + NOTICE if present |
| BSD-3-Clause | 46 | Copyright + license text |
| BSD-2-Clause | 22 | Copyright + license text |
| SEE LICENSE IN LICENSE.md (Splunk libs) | 11 | Covered as a Splunk Extension under §1.C of Splunk General Terms |
| BlueOak-1.0.0 | 4 | License text |
| CC0-1.0 / 0BSD / MIT-0 | 7 | Public-domain or near-public-domain (no obligation) |
| BSD-3-Clause AND Apache-2.0 | 1 | License text |
| MIT AND Zlib | 1 | License text |
| MIT OR Apache-2.0 | 1 | Either license; we attribute MIT |
| MIT OR CC0-1.0 | 1 | Either license; we attribute MIT |
| MPL-2.0 OR Apache-2.0 | 1 | We do not modify the file; either license is satisfied |
| MIT OR SEE LICENSE IN FEEL-FREE.md | 1 | MIT chosen |
| MPL-2.0 (axe-core) | 1 | License text only (we do not modify the source) |
| Python-2.0 | 1 | License text |
| CC-BY-4.0 / CC-BY-3.0 | 2 | Attribution preserved |
| (no declared license field) | 2 | See note below |
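
As a quick consistency check, the per-license counts in the table can be tallied against the stated package total. The dictionary below simply transcribes the table. The variable names are illustrative and not part of any shipped tooling.

```python
# Counts transcribed from the license-distribution table above.
LICENSE_COUNTS = {
    "MIT": 1012,
    "ISC": 64,
    "Apache-2.0": 57,
    "BSD-3-Clause": 46,
    "BSD-2-Clause": 22,
    "SEE LICENSE IN LICENSE.md (Splunk libs)": 11,
    "BlueOak-1.0.0": 4,
    "CC0-1.0 / 0BSD / MIT-0": 7,
    "BSD-3-Clause AND Apache-2.0": 1,
    "MIT AND Zlib": 1,
    "MIT OR Apache-2.0": 1,
    "MIT OR CC0-1.0": 1,
    "MPL-2.0 OR Apache-2.0": 1,
    "MIT OR SEE LICENSE IN FEEL-FREE.md": 1,
    "MPL-2.0 (axe-core)": 1,
    "Python-2.0": 1,
    "CC-BY-4.0 / CC-BY-3.0": 2,
    "(no declared license field)": 2,
}

STATED_TOTAL = 1235  # "1235 unique top-level packages"

total = sum(LICENSE_COUNTS.values())
print(total, total == STATED_TOTAL)
```

The rows add up to the stated 1235 packages, so the table accounts for every package in the summary.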

Notes¶

- No GPL/AGPL/LGPL components are included in the App. The license posture is fully compatible with commercial redistribution under the standard Splunkbase model.

- Two packages without a declared `license` field — `@mapbox/jsonlint-lines-primitives@2.0.2` and `uuid-v4@0.1.0` — are present as transitive dependencies. Both are de-facto open-source and have been on the public npm registry for years; reviewers preparing legal attestations should consult the upstream repositories listed in the per-component entries of `THIRD-PARTY-NOTICES.md` for any final disposition.

- `@splunk/*` packages (11 total, declared as `SEE LICENSE IN LICENSE.md`) are covered as a Splunk Extension under §1.C of the Splunk General Terms — the standard mechanism for redistributing Splunk UI libraries inside a Splunkbase-distributed app.

Refresh policy¶

The THIRD-PARTY-NOTICES.md file is regenerated from the resolved node_modules/ tree at every release. v0.0.5.0 used a one-off generation pass; v0.1.1 onward auto-refreshes the file as part of the standard yarn build pipeline so the notices and the bundled JavaScript artifacts always agree on what was shipped.

Supported Log Types¶

Overview¶

SAP ECS environment logs are not a singular data source but a collection of OS-specific, SAP environment, database, and other application logs.

Due to the nature of this solution, the SAP LogServ packages are not standalone integrations. To take full advantage of their capabilities (like CIM mapping), you need to install additional TAs as specified in the Prerequisites.

For a streamlined data ingestion process, all selected logs are ingested under one sourcetype: sap_logserv_logs. They are then assigned to a final sourcetype during parsing/indexing on the Heavy Forwarder (or Indexer in single-instance mode), based on the source field.

All events are in NDJSON format with metadata (like _time, host, source, etc.) and the _raw field containing the event contents.

To limit index size, only the _raw field is ingested from each event – metadata fields are either mapped to Splunk’s native metadata fields or dropped.

However, the clz_dir and clz_subdir fields are preserved so that each event can be traced back to its origin. These fields correspond to the directory tree of the original data in S3.

LogServ S3 Path Structure¶

The log files in the SAP LogServ S3 bucket follow this path pattern:

logserv/<clz_dir>/<clz_subdir>/<YYYY>/<MM>/<DD>/<filename>.json.gz

For example:

logserv/linux/messages/2025/09/15/messages-abc123.json.gz

logserv/hana/hanaaudit/2025/10/01/hana-xyz789.json.gz

logserv/dns/binddns/2025/11/20/dns-def456.json.gz

The clz_dir/clz_subdir values are used by the index-time filter to match include/exclude patterns. See Configuring Filters for details.
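
As an illustration of the path layout, the sketch below splits an S3 object key into its `clz_dir/clz_subdir` filter path, date, and filename. The regular expression is derived only from the pattern shown above, and the function name is hypothetical. It is not the TA's own parsing code.

```python
import re
from datetime import date

# Mirrors logserv/<clz_dir>/<clz_subdir>/<YYYY>/<MM>/<DD>/<filename>.json.gz
PATH_RE = re.compile(
    r"^logserv/(?P<clz_dir>[^/]+)/(?P<clz_subdir>[^/]+)/"
    r"(?P<year>\d{4})/(?P<month>\d{2})/(?P<day>\d{2})/"
    r"(?P<filename>[^/]+\.json\.gz)$"
)


def parse_logserv_key(key):
    """Return filter path, date, and filename for a key, or None if it does not match."""
    m = PATH_RE.match(key)
    if m is None:
        return None
    return {
        "filter_path": f"{m['clz_dir']}/{m['clz_subdir']}",
        "date": date(int(m["year"]), int(m["month"]), int(m["day"])),
        "filename": m["filename"],
    }


info = parse_logserv_key("logserv/hana/hanaaudit/2025/10/01/hana-xyz789.json.gz")
print(info["filter_path"], info["date"], info["filename"])
```

The resulting `filter_path` value has the same `clz_dir/clz_subdir` shape as the Filter path column in the sourcetype tables.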

Sourcetype Mapping¶

SAP HANA Audit (LogServ App)¶

The LogServ App provides search-time field extractions for SAP HANA audit events, including 14 EXTRACT, 11 EVAL, and 16 FIELDALIAS directives.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| hana audit log | sap:hana:audit | hana/hanaaudit |

SAP Web Dispatcher (LogServ App)¶

The LogServ App provides search-time field extractions for SAP Web Dispatcher access logs, including 18 EXTRACT, 3 EVAL, and 6 FIELDALIAS directives.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| web dispatcher access log | sap:webdispatcher:access | webdispatcher/accesslog |

SAP ABAP Application Logs (LogServ App)¶

The LogServ App provides search-time field extractions for 9 SAP ABAP application log types. Each sourcetype includes sap_sid and sap_instance extraction from the source metadata field, plus type-specific field extractions.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| ABAP security audit log | sap:abap:audit | abap/audit |
| ABAP dispatcher log | sap:abap:dispatcher | abap/dispatcher |
| ABAP enqueue server log | sap:abap:enqueueserver | abap/enqueueserver |
| ABAP event log | sap:abap:event | abap/event |
| ABAP gateway log | sap:abap:gateway | abap/gateway |
| ABAP ICM (Internet Communication Manager) log | sap:abap:icm | abap/icm |
| ABAP message server log | sap:abap:messageserver | abap/messageserver |
| ABAP sapstartsrv log | sap:abap:sapstartsrv | abap/sapstartsrv |
| ABAP work process log | sap:abap:workprocess | abap/workprocess |

SAP HANA Trace Logs (LogServ App)¶

The LogServ App provides search-time field extractions for HANA trace logs, including SID/instance extraction from the source path.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| HANA trace log | sap:hana:tracelogs | hana/tracelogs |

SAP Cloud Connector (LogServ App)¶

The LogServ App provides search-time field extractions for SAP Cloud Connector audit and HTTP access logs.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| SCC audit log (CSV format) | sap:scc:audit | scc/audit |
| SCC HTTP access log | sap:scc:http_access | scc/tracelogs |

SAP Service Logs (LogServ App)¶

The LogServ App provides search-time field extractions for SAP host-level service logs. These are infrastructure services that run at the host control level (/usr/sap/hostctrl/) rather than within a specific SAP instance.

| Source field value | Sourcetype assigned | Filter path |
|---|---|---|
| SAP Start Service log (auth, SSL/TLS) | sap:sapstartsrv | sap/sapstartsrv |
| SAP Host Agent execution log | sap:saphostexec | sap/saphostexec |
| SAP Router connection and trace log | sap:saprouter | sap/saprouter |

SAP Service Log Details

`sap:sapstartsrv` includes fields for OS authentication failures, SSL/TLS negotiation errors (protocol version, cipher suite, peer addresses), and webmethod invocation failures. `sap:saprouter` covers both `.log` files (CONNECT/DISCONNECT/INVAL DATA events with connection IDs and host addresses) and `.trc` files (NiBuf/NiI error traces with peer/local addresses and return codes) as a single sourcetype.

Splunk Add-on for Unix and Linux¶

| Source field value | Sourcetype assigned | Filter path |

|---|---|---|

| /lastlog | lastlog | linux/linux_secure |

| /var/log/cron | syslog | linux/cron |

| /var/log/firewall | linux_secure | linux/linux_secure |

| /var/log/kernel | linux_secure | linux/linux_secure |

| /var/log/localmessages | linux_messages_syslog | linux/localmessages |

| /var/log/messages | linux_messages_syslog | linux/messages |

| /var/log/pacemaker(.log) | syslog | linux/warn |

| /var/log/slapd.log | syslog | linux/slapd |

| /var/log/sssd(.log) | linux_secure | linux/linux_secure |

| /var/log/sudolog | syslog | linux/sudolog |

| /var/log/warn | syslog | linux/warn |

| /who | who | linux/linux_secure |

Splunk Add-on for Microsoft Windows¶

| Source field value | Sourcetype assigned | Filter path |

|---|---|---|

| WinEventLog:Application | XmlWinEventLog | windows/WinEventLog:Application |

| WinEventLog:(*.)Operational | XmlWinEventLog | windows/WinEventLog:Powershell |

| WinEventLog:Security | XmlWinEventLog | windows/WinEventLog:Security |

| WinEventLog:System | XmlWinEventLog | windows/WinEventLog:System |

Splunk Add-on for Squid Proxy¶

| Source field value | Sourcetype assigned | Filter path |

|---|---|---|

| /var/log/squid/access.log | squid:access | proxy/squid |

| /var/log/squid/cache.log | squid:access | proxy/squid |

| /var/log/squid/store.log | squid:access | proxy/squid |

Splunk Add-on for ISC BIND¶

| Source field value | Sourcetype assigned | Filter path |

|---|---|---|

| /var/lib/named/log/named/default.log | isc:bind:query | dns/binddns |

| /var/lib/named/log/named/general.log | isc:bind:network | dns/binddns |

| /var/lib/named/log/named/lame-servers.log | isc:bind:lameserver | dns/binddns |

| /var/lib/named/log/named/network.log | isc:bind:network | dns/binddns |

| /var/lib/named/log/named/notify.log | isc:bind:transfer | dns/binddns |

| /var/lib/named/log/named/queries.log | isc:bind:query | dns/binddns |

| /var/lib/named/log/named/resolver.log | isc:bind:network | dns/binddns |

| /var/lib/named/log/named/update.log | isc:bind:transfer | dns/binddns |

| /var/lib/named/log/named/xfer-out.log | isc:bind:transfer | dns/binddns |

Filter Path Column

The Filter path column shows the clz_dir/clz_subdir value used in the index-time filter include/exclude patterns. A -- means the log type does not currently have a filter-eligible transform. See Configuring Filters for details.

Prerequisites Overview¶

Splunk for SAP LogServ ships as two separately installable packages with distinct prerequisites. Use this page to plan what you need before starting installation.

The Two Packages¶

| Package | App ID | Role | Where it installs |

|---|---|---|---|

| LogServ Data TA | splunk_ta_sap_logserv | Data collection from S3, index-time filtering, deployment server automation, ships the indexes.conf for the two indexes the solution writes to | Single instance, OR Deployment Server + each Heavy Forwarder + Indexer |

| LogServ UI App | splunk_app_sap_logserv | Dashboards, AI Assistant chat panel, search-time field extractions | Single instance, OR the Search Head only |

For single-instance deployments, both packages install on the same instance. For distributed topologies, each package goes to its own tier: never SCP a Data TA file to the Search Head, and never SCP a UI App file to a Heavy Forwarder. The Data TA carries indexes.conf defining both sap_logserv_logs (SAP data) and _ai_assistant_audit (AI Assistant audit log); Splunk auto-creates them when the Data TA is installed on the indexer, so no separate Index App is required.

Both indexes are macro-configurable — sap_logserv_idx_macro (SAP data, default index="sap_logserv_logs") and sap_logserv_audit_idx_macro (audit log, default index="_ai_assistant_audit"). Customers who rename either index update the matching macro definition under Settings → Advanced search → Search macros. See Renaming an index for the full procedure (READ + WRITE paths).
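As a point of reference, the two macros described above might look like the following in a macros.conf file. This is a minimal sketch: the stanza names and default definitions are taken from this page, but the exact contents of the shipped file are an assumption.

```ini
# macros.conf (sketch; the shipped file contents are assumed, not copied from the app)

# SAP data index macro, with the default definition documented on this page
[sap_logserv_idx_macro]
definition = index="sap_logserv_logs"

# AI Assistant audit index macro, with the default definition documented on this page
[sap_logserv_audit_idx_macro]
definition = index="_ai_assistant_audit"
```

Splunk expands a macro at search time, so on the read side a renamed index only requires a change to the matching definition, not to the searches that invoke the macro.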

Common Prerequisites (both packages)¶

- Splunk Enterprise 9.4.3 or later, or Splunk Cloud Platform

Splunk 9.4.3 is the minimum because the LogServ App’s React stack (@splunk/react-ui, @splunk/visualizations, @xyflow/react) requires the React component versions shipped with that release.

Package-Specific Prerequisites¶

Each package has its own additional prerequisites — install Splunkbase add-ons appropriate to that tier.

- Data TA Prerequisites: CIM-aligned add-ons for the sourcetypes the Data TA produces (Unix/Linux, Windows, Squid, ISC BIND), plus the AWS Add-on for S3-based ingest. The Data TA also auto-creates the two indexes (sap_logserv_logs + _ai_assistant_audit) from its bundled default/indexes.conf on first startup, so no separate prerequisite is needed.

- LogServ App Prerequisites: the Splunk MCP Server (Splunkbase App 7931) for the AI Assistant’s predefined-prompt dispatch path, plus the optional but recommended Splunk AI Assistant (App 200) as a companion.

Decision Tree¶

| Your situation | What you need |

|---|---|

| Single Splunk instance running the full LogServ solution | Both prerequisite sets — Data TA + App |

| Distributed Splunk with on-prem Search Head | Data TA prereqs on DS + each HF + the indexer; App prereqs on the SH |

| Distributed Splunk with Splunk Cloud Search Head | Data TA prereqs on DS + each HF; App prereqs on the Splunk Cloud SH; Splunk Cloud admin handles the indexer tier (Data TA installed there provides the index defs) |

| Splunk Cloud Search Head only (no on-prem ingest tier) | App prereqs only — your Splunk Cloud admin handles the data tier and the indexer tier separately |

Next Steps¶

- Quick Install Reference — single-page matrix mapping every Splunkbase add-on + LogServ component to the tier(s) where each gets installed

- Data TA Prerequisites — for the data collection tier

- LogServ App Prerequisites — for the dashboards + AI Assistant tier

- The Data TA auto-creates the SAP data + AI Assistant audit indexes on first install — no separate Index App is required. See Indexes (auto-created on install).

- Architecture — full topology overview

Quick Install Reference¶

A single matrix mapping every Splunkbase add-on, prerequisite, and LogServ component to the tier(s) where each gets installed. Use this as a pre-install checklist; for full install steps see the per-package pages linked from each row.

Package Matrix¶

Single-instance Splunk

For a single-instance Splunk deployment (one host playing every role), install every required app on that one host. The matrix below is for distributed topologies — column headings refer to specific tiers. SH = Search Head, IDX = Indexer, HFs = Heavy Forwarders, DS = Deployment Server.

| App | Splunkbase | Required? | SH | IDX | HFs | DS |

|---|---|---|---|---|---|---|

| LogServ Data TA (splunk_ta_sap_logserv) | this repo | required | — | ✓ (indexes.conf) | ✓ (via DS) | ✓ (filter UI) |

| LogServ App (splunk_app_sap_logserv) | this repo | required | ✓ | — | — | — |

| Splunk Add-on for Unix and Linux | 833 | required (CIM) | ✓ | ✓ | ✓ | — |

| Splunk Add-on for Microsoft Windows | 742 | required (CIM) | ✓ | ✓ | ✓ | — |

| Splunk Add-on for Squid Proxy | 2965 | required (CIM) | ✓ | ✓ | ✓ | — |

| Splunk Add-on for ISC BIND | 2876 | required (CIM) | ✓ | ✓ | ✓ | — |

| Splunk Add-on for AWS | 1876 | required if SAP ECS in AWS | — | — | ✓ (S3 inputs) | — |

| Splunk MCP Server | 7931 v1.1.0+ | required for AI Assistant | ✓ | — | — | — |

| Splunk AI Assistant | 200 | recommended companion to 7931 | ✓ | — | — | — |

Notes¶

- Indexer rationale. The Data TA goes on the indexer because it bundles indexes.conf. See Why does the Data TA need to go on the Indexer? for the trade-off + opt-out path.

- CIM add-ons (Unix/Linux, Windows, Squid, ISC BIND). Install on every tier where the Data TA installs so sourcetype definitions resolve consistently. Splunkbase’s AppInspect rules require these as declared dependencies.

- AWS Add-on (1876). Only needed when SAP ECS data lives in AWS S3. The AWS Add-on owns the SQS-based S3 inputs that pull data from the dest bucket; the LogServ Data TA then sourcetype-routes events as they’re parsed on HFs. The actual index = sap_logserv_logs setting that sends events to the right place lives in the AWS Add-on’s S3 input config, not in the LogServ Data TA.

- MCP Server (7931). Required for the AI Assistant’s predefined-prompt path even when the LLM-driven path is disabled. Without it, the AI Assistant chat panel can’t dispatch saved searches.

- Splunk AI Assistant (200). The LogServ App uses only the core splunk_run_saved_search and splunk_run_query MCP tools (which work standalone against 7931), but App 200 follows Splunk’s documented co-install pattern and unlocks saia_*-prefixed MCP tools that may be used in future LogServ releases.
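To illustrate the AWS Add-on note above, the index assignment might appear in the add-on's inputs.conf roughly as follows. This is a sketch only: the input name sap_logserv_example is hypothetical, and the account, region, and queue settings that a working SQS-based S3 input needs are deliberately omitted.

```ini
# inputs.conf in the Splunk Add-on for AWS (sketch; input name is hypothetical,
# account and SQS queue settings are omitted)
[aws_sqs_based_s3://sap_logserv_example]
# Sends events pulled by this input to the SAP data index
index = sap_logserv_logs
```

Because this setting lives in the AWS Add-on rather than the LogServ Data TA, it is one of the places to revisit if the SAP data index is ever renamed.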

Per-Topology Checklists¶

Single Splunk instance¶

Install all required + recommended apps on the same instance. Splunk auto-creates both indexes (sap_logserv_logs + _ai_assistant_audit) when the Data TA loads on first start.

Distributed (DS + HFs + on-prem SH+IDX)¶

| Tier | Install |

|---|---|

| Search Head | LogServ App, MCP Server (7931), Splunk AI Assistant (200), CIM add-ons (Unix/Linux, Windows, Squid, ISC BIND) |

| Indexer | LogServ Data TA (provides indexes.conf for both indexes), CIM add-ons |

| Deployment Server | LogServ Data TA (manages filter UI + pushes Data TA to HFs), CIM add-ons |

| Heavy Forwarders | Receive LogServ Data TA via the DS automatically. Install the AWS add-on (1876) directly + CIM add-ons. |

Distributed (DS + HFs + Splunk Cloud SH)¶

| Tier | Install |

|---|---|

| Splunk Cloud Search Head | LogServ App, MCP Server (7931), Splunk AI Assistant (200), CIM add-ons |

| Splunk Cloud Indexer tier | Splunk Cloud admin handles. Either (a) install the LogServ Data TA there to use the bundled index defs, OR (b) the Cloud admin manually creates sap_logserv_logs and _ai_assistant_audit via the Splunk Cloud UI — see Why does the Data TA need to go on the Indexer?. |

| Deployment Server | LogServ Data TA, CIM add-ons |

| Heavy Forwarders | Receive LogServ Data TA via the DS. Install AWS add-on (1876) directly + CIM add-ons. |

Next Steps¶

- Architecture — full topology diagram + the why behind the package split

- Data TA Prerequisites — Splunkbase TA prereq detail (which CIM add-on covers which sourcetype)

- LogServ App Prerequisites — MCP Server + AI Assistant prereq detail

- Installing the Data TA — full install procedure including the indexer-tier rationale

- Installing the LogServ App

Ended: Getting Started

LogServ Data TA ↵

Data TA Prerequisites¶

This page covers the prerequisites for the LogServ Data TA (splunk_ta_sap_logserv) — the data-collection + index-time-filtering side. For the LogServ App’s prerequisites (the AI Assistant’s MCP Server dependency), see LogServ App Prerequisites.

Splunk Platform Requirements¶

- Splunk Enterprise 9.4.3 or later, or Splunk Cloud Platform

Required Splunk Add-ons¶

The SAP LogServ packages depend on several additional Splunk Technical Add-ons for sourcetype definitions and CIM mapping. Install these from Splunkbase before proceeding:

- Splunk Add-on for Unix and Linux

- Splunk Add-on for Microsoft Windows

- Splunk Add-on for Squid Proxy

- Splunk Add-on for ISC BIND

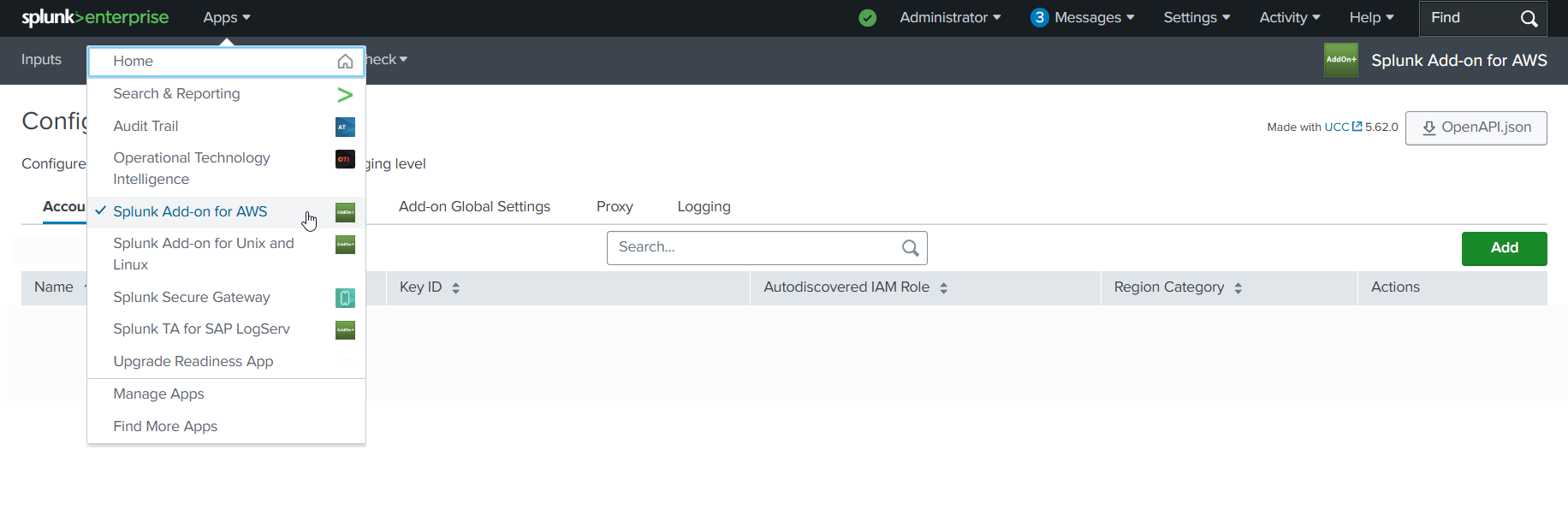

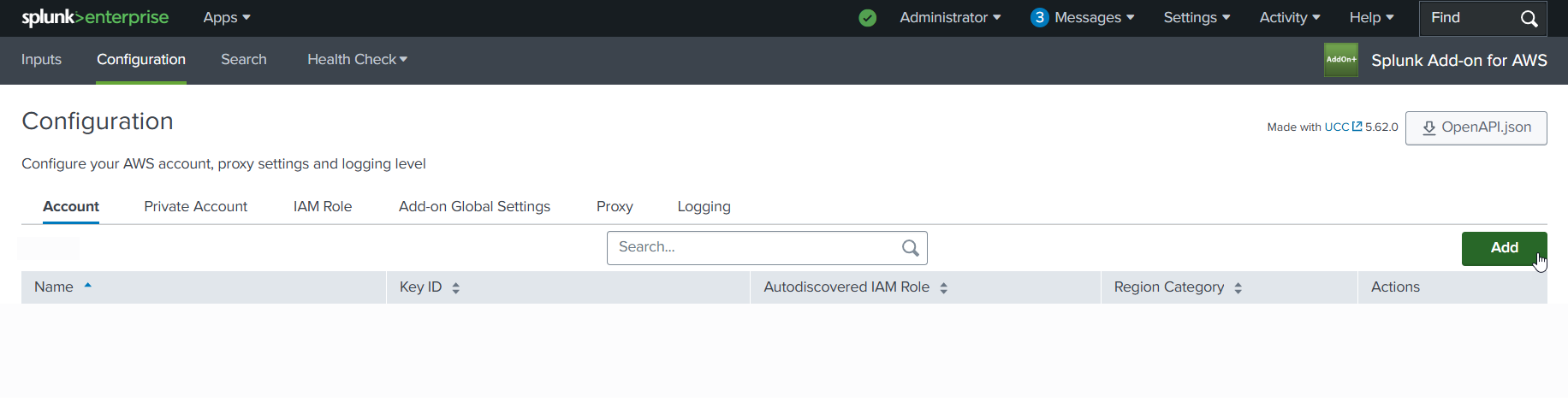

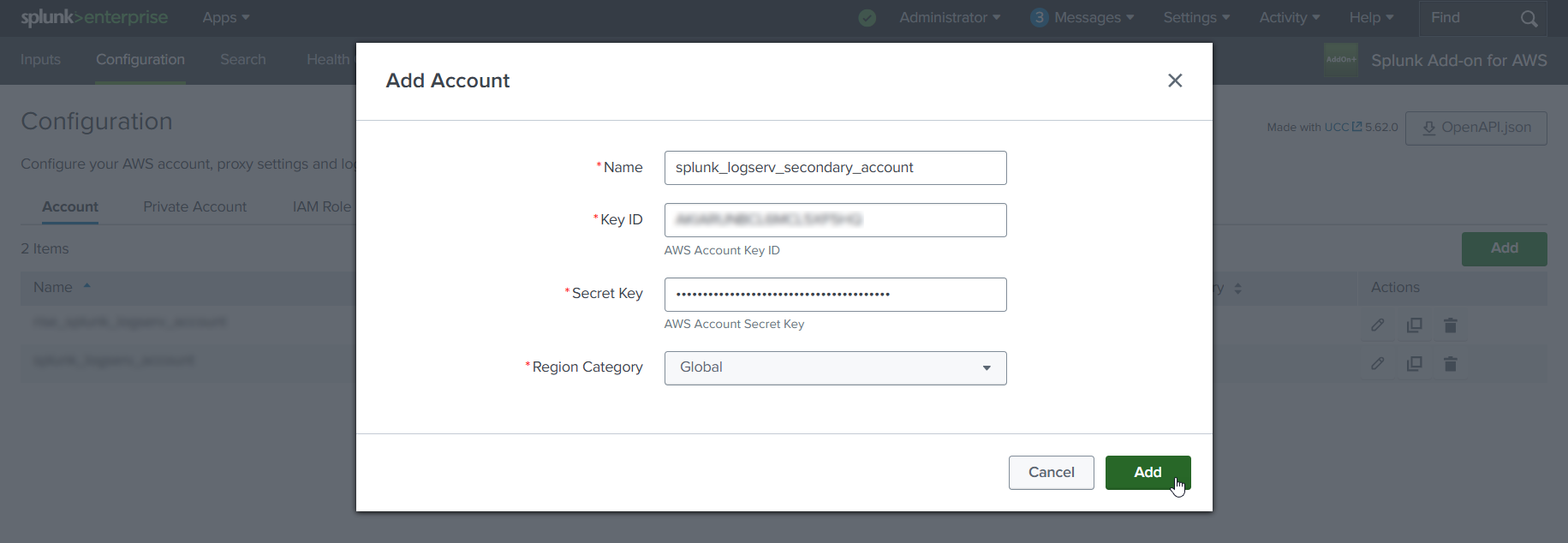

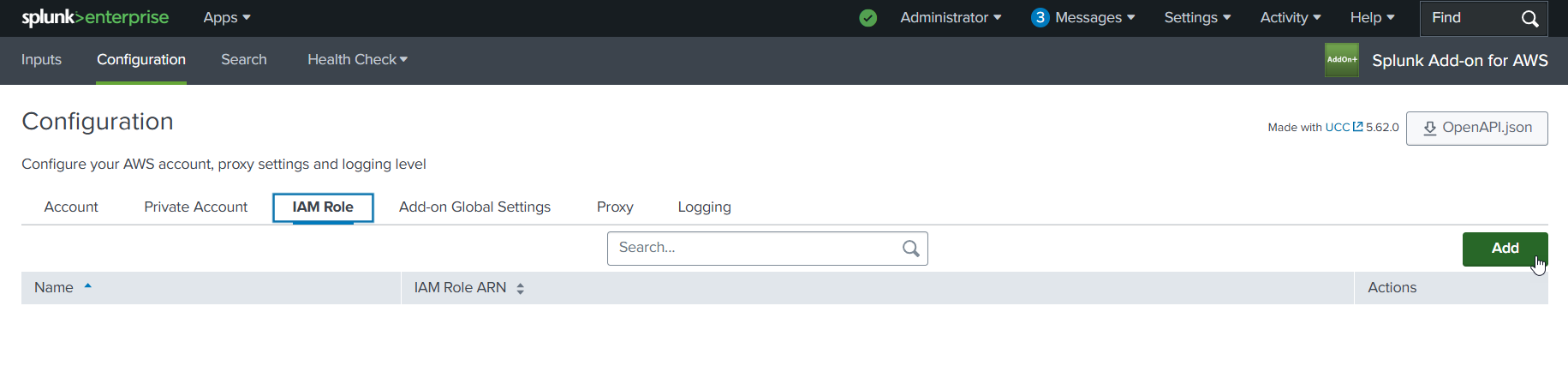

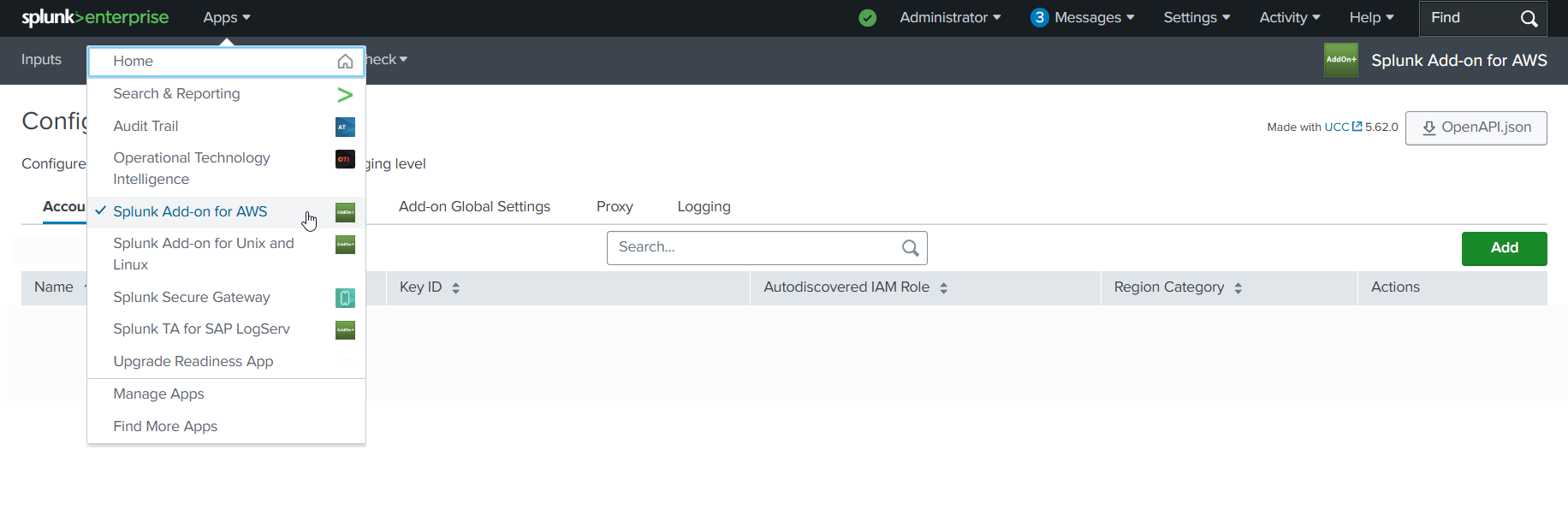

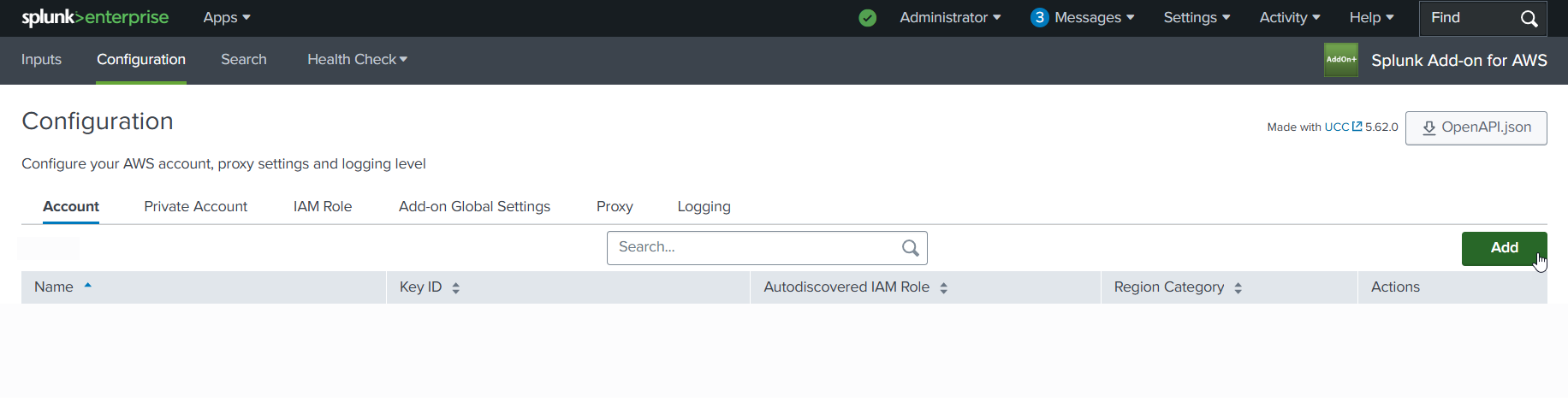

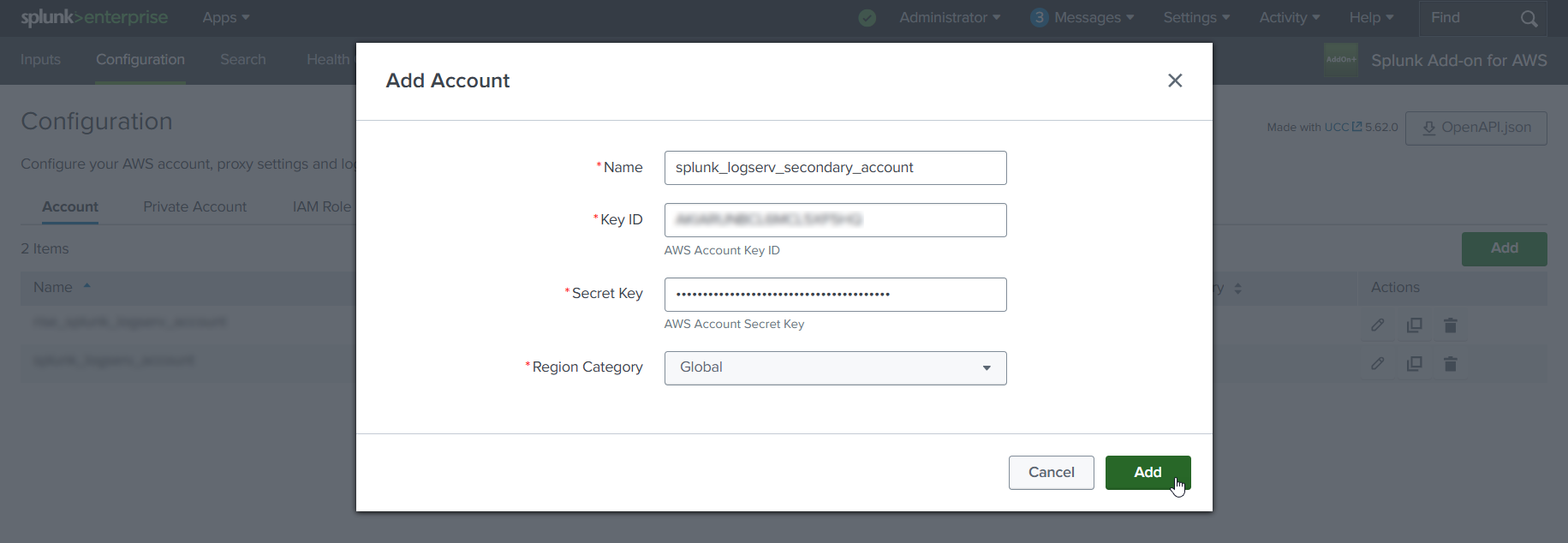

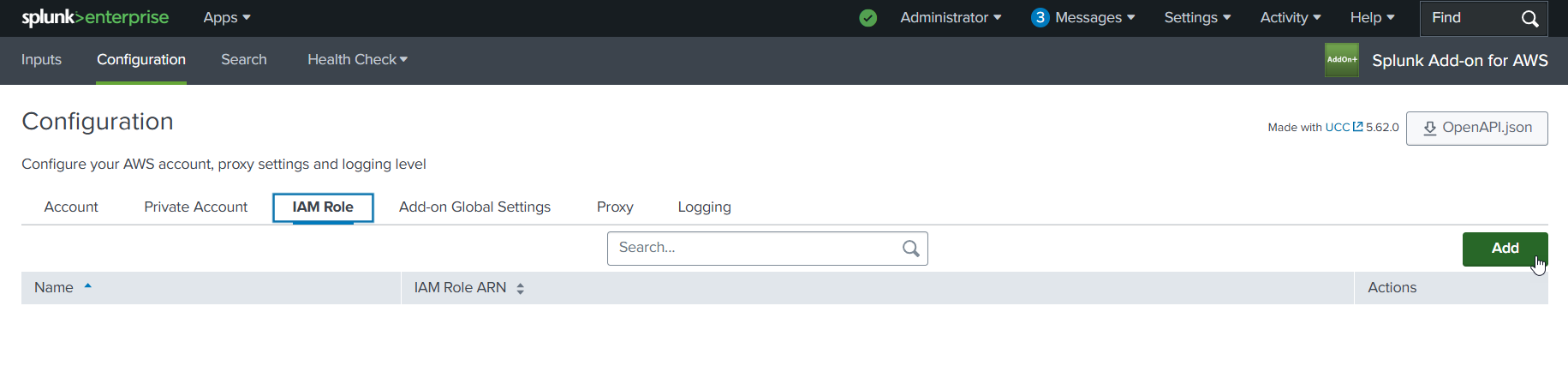

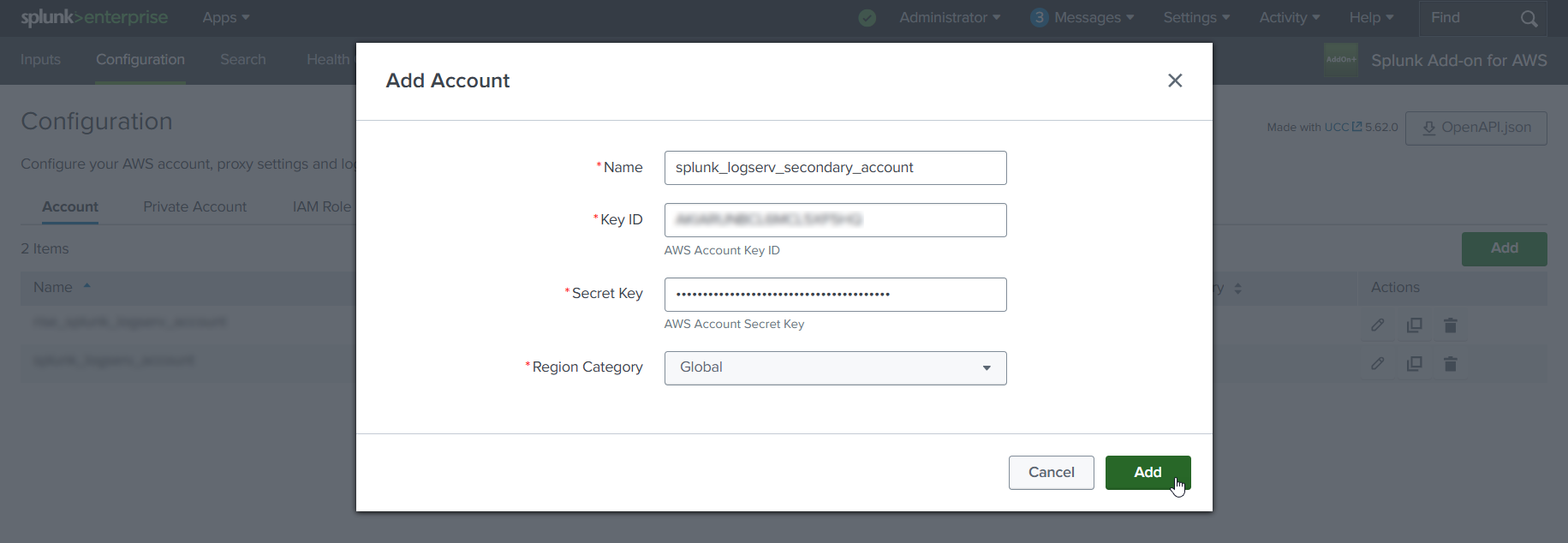

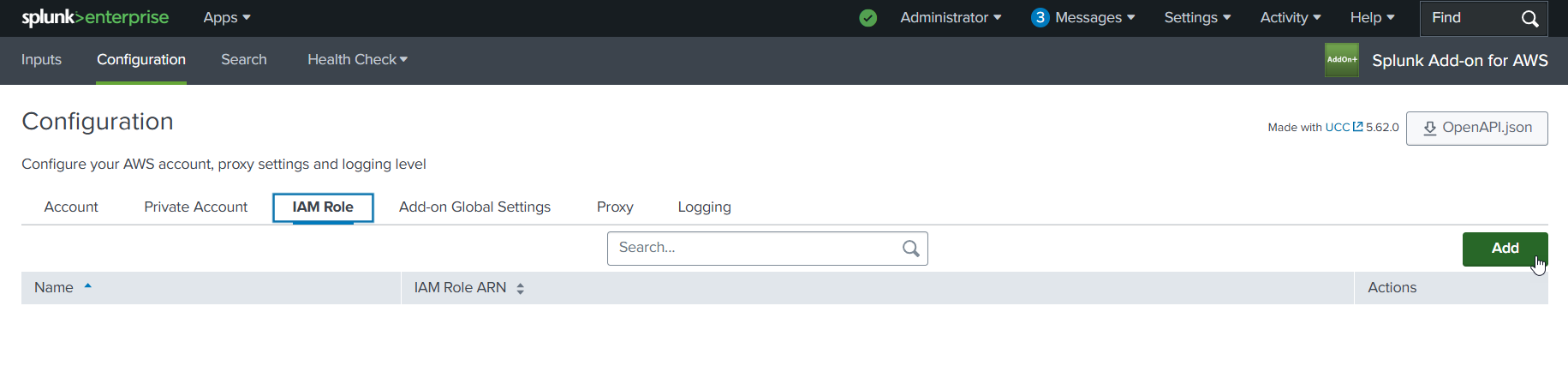

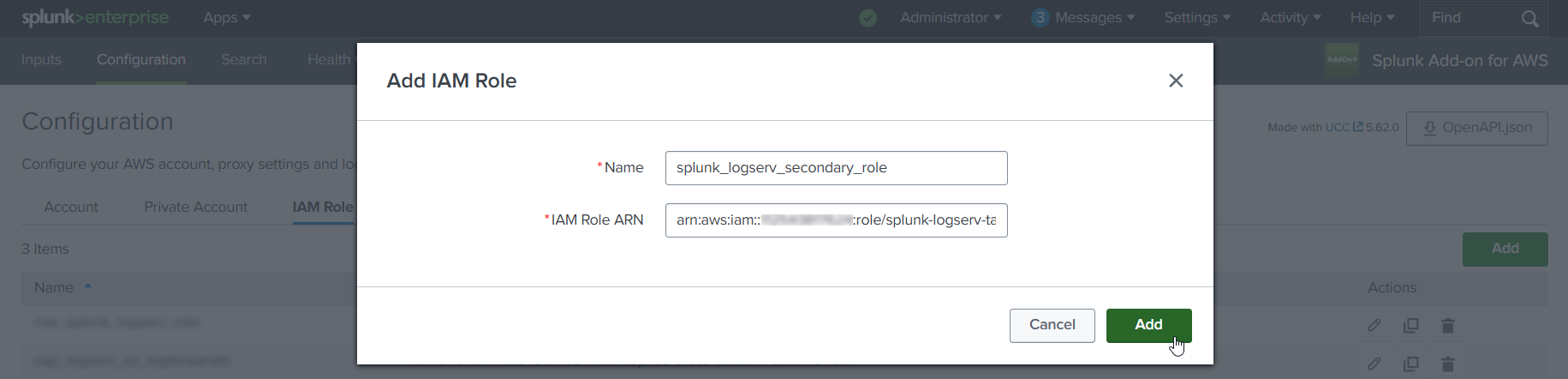

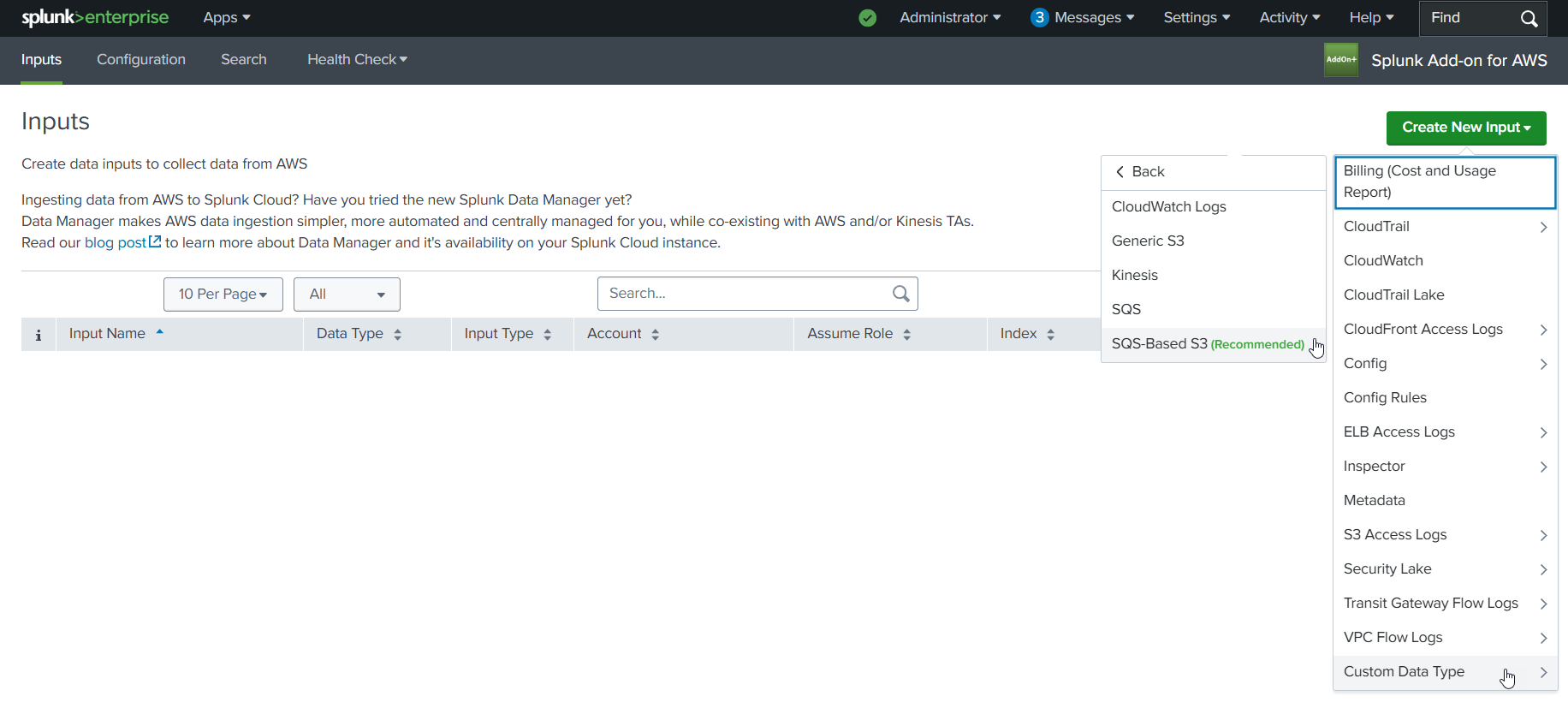

SAP ECS running in Amazon Web Services (AWS)¶

If you have SAP ECS running in Amazon Web Services (AWS), you also need to install this additional Splunk Technical Add-on.

- Splunk Add-on for Amazon Web Services (AWS) Download

- Splunk Add-on for Amazon Web Services (AWS) Documentation

Additional configuration instructions for the Splunk Add-on for Amazon Web Services (AWS) are provided in the Setup walkthroughs, after the prerequisite steps are complete. For now, just install the add-on.

Next Steps¶

Next steps:

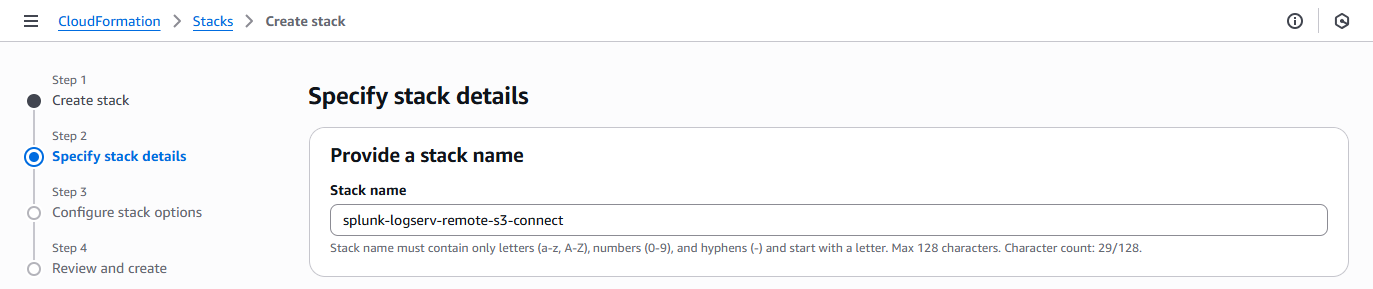

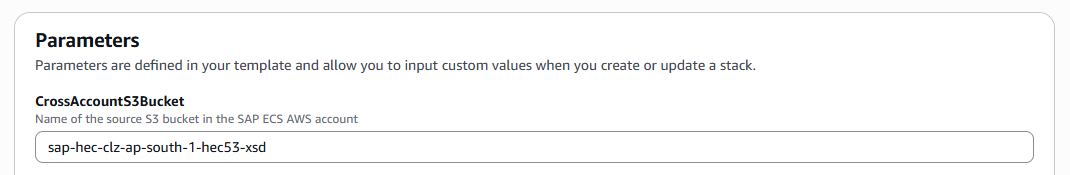

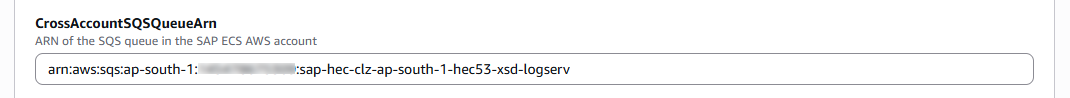

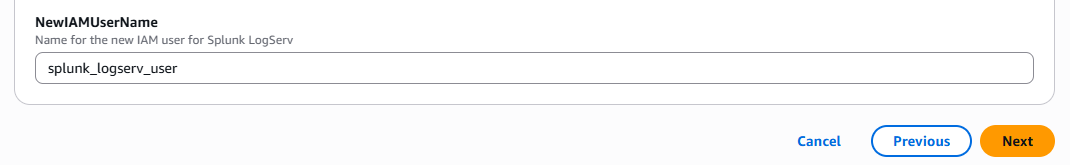

Installing the Data TA¶

This page covers installing the Data TA (splunk_ta_sap_logserv). For the LogServ App installation, see Installing the LogServ App.

High Level Steps¶

Below are the high level steps for installing the Data TA. Follow them in order.

Steps 4 and 5 are alternative paths — complete the one that matches your Splunk environment.

1. Review the indexes (auto-created on install)

2. Download the Data TA

3. Identify where to install the Data TA based on your topology

4. Install the Data TA in Splunk Cloud (if applicable)

5. Install the Data TA in Splunk Enterprise (if applicable)

1. Indexes (auto-created on install)¶

The Data TA ships with default/indexes.conf defining two indexes that Splunk auto-creates the first time the Data TA loads on an indexer:

| Index | Purpose | Default name | Macro |

|---|---|---|---|

| SAP data index | Receives every event the Data TA forwards (logs ingested from S3 and routed to the appropriate sourcetype) | sap_logserv_logs | sap_logserv_idx_macro |

| AI Assistant audit index | Receives every audit event the AI Assistant writes: canned-prompt dispatches, free-form vendor calls (when LLM path is enabled), security blocks, privacy-tier elevations, legal acknowledgements | _ai_assistant_audit | sap_logserv_audit_idx_macro |

No customer-side index provisioning is required for the default install.
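For orientation, a default/indexes.conf that defines these two indexes could look something like the sketch below. The index names come from the table above; the path values follow Splunk's conventional $SPLUNK_DB layout and are an assumption, not a copy of the shipped file.

```ini
# default/indexes.conf (sketch; path values are assumed and follow the
# conventional $SPLUNK_DB/<index_name>/ layout)

[sap_logserv_logs]
homePath   = $SPLUNK_DB/sap_logserv_logs/db
coldPath   = $SPLUNK_DB/sap_logserv_logs/colddb
thawedPath = $SPLUNK_DB/sap_logserv_logs/thaweddb

[_ai_assistant_audit]
homePath   = $SPLUNK_DB/_ai_assistant_audit/db
coldPath   = $SPLUNK_DB/_ai_assistant_audit/colddb
thawedPath = $SPLUNK_DB/_ai_assistant_audit/thaweddb
```

Retention and sizing settings are left out here; if your environment needs them, check the file actually bundled with the Data TA before overriding anything in local/.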

Note

Both the Data TA and the LogServ App include a macro named sap_logserv_idx_macro that resolves to index="sap_logserv_logs". The LogServ App also includes sap_logserv_audit_idx_macro for the audit index. If you use a different index name, follow the Renaming an index procedure below.

Renaming an Index¶

Both indexes are macro-configurable, so customers who need different names (e.g., a corporate naming convention) don’t have to fork the app — they update the macros (and, for the audit index, one config field).

To rename the SAP data index¶

- Pick a new name (e.g., splunk_audit_my_org_sap).

- Create the index under that new name. Either:

  - Add a custom local/indexes.conf to the Data TA with a stanza for your new name ([my_new_index_name] plus the same homePath/coldPath/thawedPath settings), OR

  - Create the index manually through Splunk Web’s Settings → Indexes → New Index UI. (See Splunk Cloud or Splunk Enterprise docs.)

- Update the macro definition. Open Settings → Advanced search → Search macros, find sap_logserv_idx_macro, and edit the definition from index="sap_logserv_logs" to index="my_new_index_name".

- Redirect the ingest pipeline to the new index name. The actual